liamket

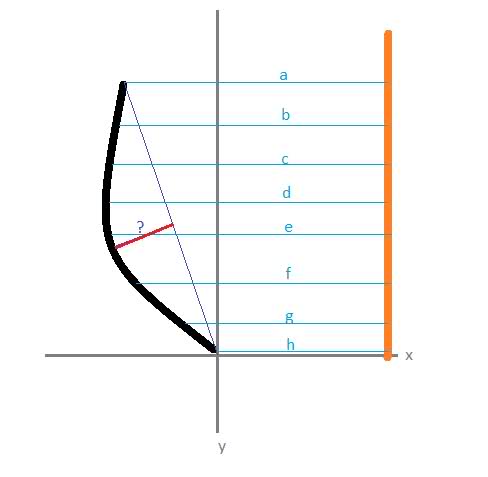

I don't expect there to be a single answer to this question; it can probably be done several ways. I'm trying to build a tool for my workshop and would love some insight into the math needed to make it work. I've included a very rough sketch of the basic concepts (it's a little extreme, but only to show what makes this whole thing difficult).

Essentially, I will have a vertical row of sensors (probably about 20 of them), calibrated so that each one reads the distance to the surface at its own height. What I need to figure out is how to calculate the depth of the dip. What makes it hard is that the top and bottom points will not always be the same distance from the sensors, so I suspect the y-axis needs to be tilted to a common reference. Who knows.
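One way to do that "tilt" (a sketch, not the only approach) is to treat the straight line joining the bottom and top readings as the reference and measure each point's distance from it. Assume sensors at heights $y_1 < y_2 < \dots < y_n$ reading distances $d_1, \dots, d_n$, all in the same units. The reference line predicts, at height $y_i$:

$$\hat d_i = d_1 + (d_n - d_1)\,\frac{y_i - y_1}{y_n - y_1}$$

The horizontal offset of the real surface from that line is $e_i = d_i - \hat d_i$ (positive means farther from the sensors, i.e. part of the dip). Converting it to a distance measured square to the tilted line:

$$h_i = e_i \,\frac{y_n - y_1}{\sqrt{(y_n - y_1)^2 + (d_n - d_1)^2}}, \qquad \text{depth} = \max_i h_i$$

When the top and bottom readings are equal, the square-root term reduces to $y_n - y_1$ and $h_i = e_i$, so the depth is just the largest plain distance difference.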

This is likely very complicated, but I intend to eventually write a program that calculates this and shows the result on a digital display.
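A minimal Python sketch of that calculation, assuming the sensor heights are known from the mounting and the readings arrive as a list ordered from one end of the column to the other. The function name `dip_depth` is hypothetical. It uses the line between the first and last readings as the tilted reference and returns the deepest point measured perpendicular to that line.

```python
import math
from typing import Sequence


def dip_depth(heights: Sequence[float], distances: Sequence[float]) -> float:
    """Hypothetical helper: depth of the dip relative to the chord joining
    the first and last sensor readings.

    heights   -- sensor heights along the column (same units as distances)
    distances -- calibrated distance readings, one per sensor
    Returns the largest perpendicular distance of a reading behind the chord
    (farther from the sensors), or 0.0 if no reading lies behind it.
    """
    if len(heights) != len(distances) or len(heights) < 3:
        raise ValueError("need at least 3 matching height/distance pairs")

    y1, yn = heights[0], heights[-1]
    d1, dn = distances[0], distances[-1]
    dy = yn - y1
    if dy == 0:
        raise ValueError("first and last sensors must be at different heights")

    # Length of the reference line; used to turn a horizontal offset into a
    # distance measured square to the (possibly tilted) line.
    chord_len = math.hypot(dy, dn - d1)

    deepest = 0.0
    for y, d in zip(heights, distances):
        # Distance the reference line predicts at this sensor's height.
        d_chord = d1 + (dn - d1) * (y - y1) / dy
        # Horizontal offset scaled to perpendicular distance; abs(dy) keeps
        # the sign right whether sensors are listed bottom-up or top-down.
        h = (d - d_chord) * abs(dy) / chord_len
        deepest = max(deepest, h)
    return deepest


if __name__ == "__main__":
    # Five sensors 1 unit apart, surface bowed 1 unit away in the middle.
    print(dip_depth([0, 1, 2, 3, 4], [10, 10.5, 11, 10.5, 10]))
```

Because the reference comes only from the two end readings, noise on either end sensor shifts the whole result; averaging a few readings per sensor before calling the function is one simple way to soften that.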

Thoughts?