MatthijsRog

Hi all,

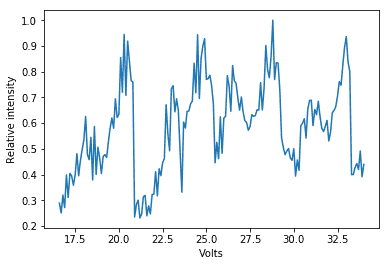

Over the past two weeks I performed a resonance experiment in which I recorded the intensity of a Fabry-Perot cavity while adjusting the mirror distance with a piezo element (the specific setup of the experiment is fairly detached from the question I will ask). My raw data is included in the figure:

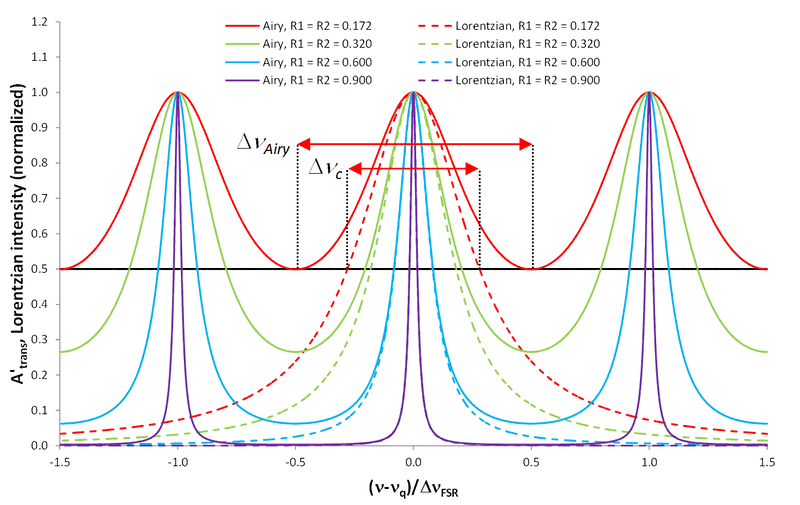

The results should theoretically form an Airy-distribution as shown in the following figure:

Mind that the dashed lines are NOT Airy-distributions. The Airy distribution is also given by

In the case of my experiment, R1 and R2 are known while the argument of the sine is a linear function of the x-axis.
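As a minimal sketch of the model described above: the equation itself is not reproduced here, so the code below assumes the commonly quoted internal-resonance form of the Airy distribution, A = 1 / ((1 - sqrt(R1 R2))^2 + 4 sqrt(R1 R2) sin^2(phi)), with phi = a x + b. The function name `airy` and the parameter names `a` and `b` are illustrative choices, and the exact form used in the experiment may differ by a prefactor.

```python
import numpy as np

def airy(x, a, b, R1, R2):
    """Airy distribution of a Fabry-Perot cavity (assumed standard form).

    The phase is taken to be linear in the x-axis value: phi = a*x + b.
    """
    phi = a * x + b
    r = np.sqrt(R1 * R2)
    return 1.0 / ((1.0 - r) ** 2 + 4.0 * r * np.sin(phi) ** 2)
```

In this form the peaks sit where phi is an integer multiple of pi, so the slope `a` sets the peak spacing and `b` sets the peak positions, while R1 and R2 alone determine the peak width relative to that spacing.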

While the resonance peaks are clearly visible in my data (they are even more or less at constant separation), I'm having trouble fitting a function to it. Because of the small "sub-peak" that comes shortly before each major peak, SciPy's scipy.optimize.curve_fit keeps placing the Airy distribution's peaks somewhere between the two.

Mind that I'm trying to perform the fit for specified R1 and R2 while trying out different linear functions (f(x) = ax + b) as the argument for the sine.
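The fit described here, with R1 and R2 held fixed and only the linear phase varied, could be set up roughly as follows. This is a sketch under assumptions: the Airy form is the commonly quoted one (normalised to a peak height of 1), the reflectivities are placeholders, the data are synthetic stand-ins for the measurement, and the extra `scale` and `offset` parameters (detector gain and background) are additions that are not part of the description above.

```python
import numpy as np
from scipy.optimize import curve_fit

R1, R2 = 0.7, 0.7  # placeholder reflectivities; substitute the known values

def airy_fixed_R(x, a, b, scale, offset):
    """Airy profile with fixed R1, R2 and phase a*x + b, normalised to peak 1."""
    r = np.sqrt(R1 * R2)
    shape = (1.0 - r) ** 2 / ((1.0 - r) ** 2 + 4.0 * r * np.sin(a * x + b) ** 2)
    return scale * shape + offset

# Synthetic stand-in for the measured intensity (not real data)
rng = np.random.default_rng(0)
x = np.linspace(0.0, 10.0, 500)
y = airy_fixed_R(x, 1.3, 0.4, 1.0, 0.0) + rng.normal(0.0, 0.02, x.size)

# The model is periodic in its phase, so the starting values for a and b
# must already place the model peaks close to the measured ones.
p0 = [1.29, 0.45, 0.9, 0.0]
popt, pcov = curve_fit(airy_fixed_R, x, y, p0=p0)
perr = np.sqrt(np.diag(pcov))  # one-sigma parameter uncertainties

print("a = %.4f +/- %.4f" % (popt[0], perr[0]))
print("b = %.4f +/- %.4f" % (popt[1], perr[1]))
```

Because the cost function has many local minima in `a` and `b`, the quality of `p0` matters more than the choice of optimiser.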

My question is this: how could I best go about fitting an Airy distribution over my data, staying as accurate and scientific as possible?

I could obviously make the fit by simply trying out different linear functions. But that way I could only match the horizontal positions of the peaks accurately, not their widths.
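Trying out linear functions by hand can be replaced by a small calculation, sketched below on synthetic data. It assumes the commonly quoted Airy form, in which consecutive major peaks are separated by a phase step of pi, so a straight-line fit of m*pi against the detected peak positions estimates `a` and (up to a multiple of pi) `b`. The `prominence` threshold is an illustrative value meant to skip small sub-peaks and would need tuning on real data.

```python
import numpy as np
from scipy.signal import find_peaks

# Synthetic stand-in signal (not real data): phase = 1.3*x + 0.4, r = 0.7
rng = np.random.default_rng(1)
x = np.linspace(0.0, 10.0, 2000)
r = 0.7
y = (1.0 - r) ** 2 / ((1.0 - r) ** 2 + 4.0 * r * np.sin(1.3 * x + 0.4) ** 2)
y = y + rng.normal(0.0, 0.005, x.size)

# Keep only prominent maxima so that minor bumps are ignored
peak_idx, _ = find_peaks(y, prominence=0.5)
x_peaks = x[peak_idx]

# Consecutive major peaks correspond to phase values 0, pi, 2*pi, ...
# (the unknown integer offset only shifts b by a multiple of pi)
m = np.arange(x_peaks.size)
a_est, b_est = np.polyfit(x_peaks, m * np.pi, 1)

print("estimated a:", a_est)
print("estimated b (mod pi):", b_est % np.pi)
```

The resulting `a_est` and `b_est` are only as good as the peak positions, so they are best treated as starting values for a full least-squares fit rather than as final results.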

However, I don't know how to manipulate my data to make it workable for a computer while staying scientific.

What would be the best thing to do in my case? Simply do it by hand?
