
David932


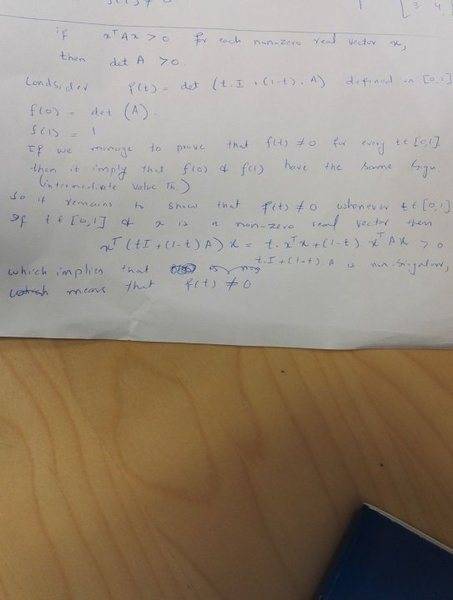

1. Problem statement: Suppose A is a 3 × 3 Hermitian matrix. If X†AX > 0 for every nonzero column vector X, then det(A) > 0.
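One standard route is the spectral theorem for Hermitian matrices. The outline below is a possible approach, not necessarily the intended one. The symbols U, D, λ_i and v_i (a unitary matrix, a diagonal matrix of eigenvalues, and the corresponding eigenvectors) are introduced here for illustration:

```latex
\begin{align*}
&A = A^\dagger \;\Rightarrow\; A = U D U^\dagger,\quad U^\dagger U = I,\quad
  D = \operatorname{diag}(\lambda_1,\lambda_2,\lambda_3),\ \lambda_i \in \mathbb{R}\\
&A v_i = \lambda_i v_i,\ v_i \neq 0 \;\Rightarrow\;
  v_i^\dagger A v_i = \lambda_i\, v_i^\dagger v_i = \lambda_i \lVert v_i \rVert^2 > 0
  \;\Rightarrow\; \lambda_i > 0\\
&\det A = \det U\,\det D\,\det U^\dagger = \lvert \det U \rvert^2 \det D
  = \lambda_1 \lambda_2 \lambda_3 > 0
\end{align*}
```

The key step is choosing X to be an eigenvector: the hypothesis then forces each eigenvalue to be positive, and the determinant is the product of the eigenvalues.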

I tried to prove this statement; my attempt is attached as an image. Could anyone guide me step by step through how to approach this problem? I am not sure my approach is correct.
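As a quick numerical sanity check (not a proof), the short NumPy sketch below builds a random 3 × 3 Hermitian matrix that satisfies the hypothesis and compares the sampled values of X†AX, the eigenvalues, and the determinant. The construction A = B†B + I (which guarantees X†AX = |BX|² + |X|² > 0) and the function names are hypothetical choices made only for this illustration.

```python
import numpy as np


def random_positive_definite_hermitian(n, rng):
    """Hypothetical test matrix: A = B^dagger B + I is Hermitian with X^dagger A X > 0."""
    b = rng.standard_normal((n, n)) + 1j * rng.standard_normal((n, n))
    return b.conj().T @ b + np.eye(n)


def check_claim(a, rng, trials=1000):
    """Return (smallest sampled X^dagger A X, eigenvalues, det) for the matrix a."""
    n = a.shape[0]
    min_form = np.inf
    for _ in range(trials):
        x = rng.standard_normal(n) + 1j * rng.standard_normal(n)
        # X^dagger A X is real for Hermitian A; .real drops round-off imaginary parts
        form = (x.conj() @ a @ x).real
        min_form = min(min_form, form)
    eigenvalues = np.linalg.eigvalsh(a)  # real eigenvalues of a Hermitian matrix, ascending
    det = np.linalg.det(a)  # complex dtype, imaginary part is only round-off
    return min_form, eigenvalues, det


rng = np.random.default_rng(0)
a = random_positive_definite_hermitian(3, rng)
min_form, eigenvalues, det = check_claim(a, rng)
print("smallest sampled X^dagger A X:", min_form)
print("eigenvalues:", eigenvalues)
print("det(A):", det)
print("product of eigenvalues:", np.prod(eigenvalues))
```

Sampling random X can only support the claim, never prove it; the useful observation is that det(A) agrees with the product of the eigenvalues, which is the fact a proof can build on.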

P.S. I am new to the forum, so I apologize if my post has flaws. I tried my best to state the problem and my attempt clearly, but I am not yet comfortable with the formatting. I first wrote it in MS Word and pasted it in, but the whole text appeared struck through and was then removed.

