kingwinner

Time Series: "Residuals" of ARMA model

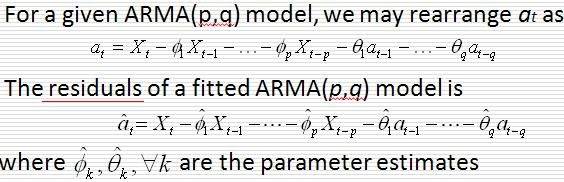

To check whether the white noise terms $\{a_t\}$ are uncorrelated, we usually look at the residuals (which are sample estimates of the white noise $\{a_t\}$) and at residual plots. But I just don't understand what "residuals" means in the context of an ARMA model...

In the above definition, the residual $\hat{a}_t$ is expressed in terms of the white noise terms $a_{t-1}, a_{t-2}, \ldots, a_{t-q}$. But the white noise terms are unobservable (residuals are observable, white noise terms are not), so there is no way we can know the exact values of $a_{t-1}, a_{t-2}, \ldots, a_{t-q}$, right? If we don't know everything on the right-hand side, how can we calculate the residuals $\hat{a}_t$? I just don't understand how the residuals of an ARMA model can be calculated from this definition.

I tried searching the internet, but couldn't find much.

Hopefully someone can explain. Thank you!
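For concreteness, here is a minimal sketch of one common way the residuals are computed in practice: recursively, with the unknown past white noise terms replaced by previously computed residuals and the pre-sample values set to zero (the "conditional" approach). It assumes the sign convention $z_t = \phi_1 z_{t-1} + \cdots + \phi_p z_{t-p} + a_t - \theta_1 a_{t-1} - \cdots - \theta_q a_{t-q}$, which may differ from the definition in the image, and it assumes the parameter estimates are already available. The function name `arma_residuals` and its arguments are hypothetical, not taken from any library.

```python
import numpy as np

def arma_residuals(z, phi, theta):
    """Recursive (conditional) residuals for an ARMA(p, q) model.

    Assumed convention (not confirmed by the original post):
        z_t = sum_i phi_i z_{t-i} + a_t - sum_j theta_j a_{t-j}
    so that
        a_hat_t = z_t - sum_i phi_i z_{t-i} + sum_j theta_j a_hat_{t-j}.
    phi and theta are parameter estimates obtained elsewhere.
    """
    z = np.asarray(z, dtype=float)
    p, q = len(phi), len(theta)
    # Residuals that cannot be computed (t < p) and all pre-sample
    # residuals are set to zero: this is the conditional assumption.
    a_hat = np.zeros(len(z))
    for t in range(p, len(z)):
        ar_part = sum(phi[i] * z[t - 1 - i] for i in range(p))
        # Use earlier residuals in place of the unobservable a_{t-j}.
        ma_part = sum(theta[j] * a_hat[t - 1 - j]
                      for j in range(q) if t - 1 - j >= 0)
        a_hat[t] = z[t] - ar_part + ma_part
    return a_hat
```

The point of the sketch is that the right-hand side never needs the true $a_{t-1}, \ldots, a_{t-q}$: each residual depends only on the observed series and on residuals already computed. If the MA part is invertible, the effect of the arbitrary zero starting values dies out as $t$ grows.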
