Discussion Overview

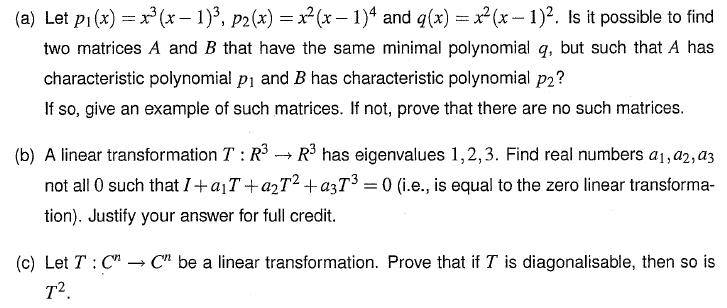

The discussion concerns characteristic and minimal polynomials. The original poster asks two questions: a multi-part problem and a statement to be proved. Participants ask for clarification and help with proofs involving linear transformations and eigenvectors.

Discussion Character

- Homework-related

- Technical explanation

- Conceptual clarification

Main Points Raised

- One participant requests help with two questions, indicating they are struggling with concepts related to characteristic and minimal polynomials.

- Another participant asks for the original poster to share their attempts to solve the questions, suggesting a collaborative approach.

- A participant provides a proof attempt for the second question, expressing confusion about the lecturer's conclusion and seeking further clarification.

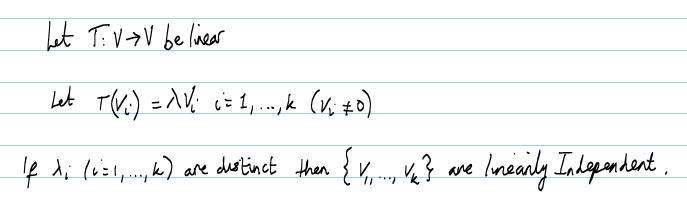

- One participant suggests using induction to prove the second question, outlining a method involving eigenvalues and linear independence.

- Another participant corrects a previous statement about the base case of induction, indicating a need for precision in the proof process.

- A different participant references the rational canonical form and the Cayley-Hamilton theorem as tools to address parts of the first question, suggesting these concepts may simplify the problem.

- Another participant reassures the original poster that their proof is straightforward and encourages them to review relevant definitions and concepts to enhance their understanding.
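
The induction approach mentioned above can be sketched as follows. The exact statement of the second question is not reproduced in this summary, so the sketch assumes it is the standard claim that eigenvectors $v_1, \dots, v_k$ of a linear transformation $T$ with pairwise distinct eigenvalues $\lambda_1, \dots, \lambda_k$ are linearly independent. The symbols $T$, $v_i$, $\lambda_i$, and $c_i$ are illustrative and not taken from the thread.

```latex
% Base case (k = 1): an eigenvector is nonzero by definition,
% so the single vector v_1 is linearly independent.
%
% Inductive step: assume the claim holds for k - 1 eigenvectors, and suppose
\begin{align*}
  c_1 v_1 + \dots + c_k v_k &= 0. \\
\intertext{Applying $T$ to this relation gives}
  c_1 \lambda_1 v_1 + \dots + c_k \lambda_k v_k &= 0. \\
\intertext{Subtracting $\lambda_k$ times the first relation removes the $v_k$ term:}
  \sum_{i=1}^{k-1} c_i (\lambda_i - \lambda_k)\, v_i &= 0.
\end{align*}
% By the induction hypothesis, c_i (\lambda_i - \lambda_k) = 0 for all i < k.
% The eigenvalues are distinct, so \lambda_i - \lambda_k \neq 0 and c_i = 0 for i < k.
% The first relation then reduces to c_k v_k = 0, and v_k \neq 0 forces c_k = 0.
```

The base case rests only on the fact that eigenvectors are nonzero, which is the kind of detail the correction about the base case points to.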

Areas of Agreement / Disagreement

There is no clear consensus on the solutions to the questions posed. Participants propose different approaches (induction for the second question, the rational canonical form and the Cayley-Hamilton theorem for parts of the first), and the thread does not settle on a single method.

Contextual Notes

Participants reference specific mathematical concepts such as linear independence, eigenvectors, and the Cayley-Hamilton theorem, but some assumptions and steps in the proofs are left unresolved.
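
As a small illustration of the Cayley-Hamilton theorem referenced in the thread, the sketch below checks it numerically for 2x2 matrices, where the characteristic polynomial is $\lambda^2 - \operatorname{tr}(A)\lambda + \det(A)$. The function names and the example matrix are hypothetical and chosen only for illustration. They do not come from the original questions.

```python
def mat_mul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def cayley_hamilton_residual_2x2(a):
    """Return A^2 - tr(A) * A + det(A) * I for a 2x2 matrix A.

    The Cayley-Hamilton theorem says this is the zero matrix.
    """
    trace = a[0][0] + a[1][1]
    det = a[0][0] * a[1][1] - a[0][1] * a[1][0]
    a_squared = mat_mul(a, a)
    identity = [[1, 0], [0, 1]]
    return [[a_squared[i][j] - trace * a[i][j] + det * identity[i][j]
             for j in range(2)]
            for i in range(2)]


# Hypothetical example matrix with eigenvalues 2 and 3.
example = [[2, 1], [0, 3]]
residual = cayley_hamilton_residual_2x2(example)
print(residual)
```

Because the matrix satisfies its own characteristic polynomial, the minimal polynomial must divide the characteristic polynomial, which is the link between the two polynomials that the first question relies on.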