Math Amateur

I am reading "Abstract Algebra: Structures and Applications" by Stephen Lovett.

I am currently focused on Chapter 11: Galois Theory, Section 1: Automorphisms of Field Extensions.

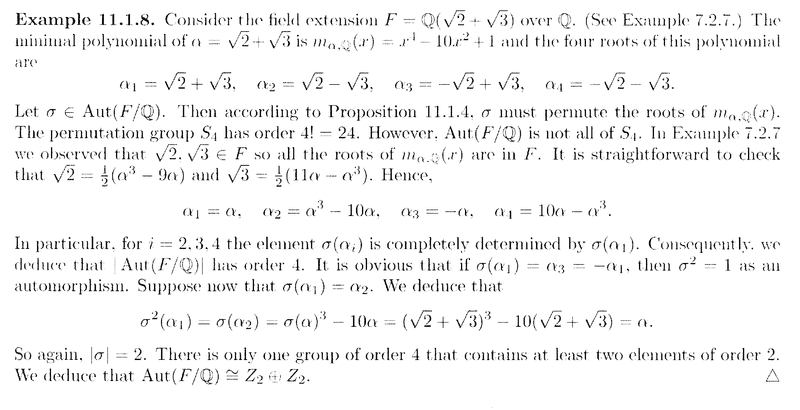

I need help with Example 11.1.8 on page 559, which reads as follows:

My questions regarding the above example from Lovett are as follows:

Question 1

In the above text from Lovett we read the following:

"... The minimal polynomial of ##\alpha = \sqrt{2} + \sqrt{3}## is ##m_{ \alpha , \mathbb{Q} } (x) = x^4 - 10x^2 + 1## and the four roots of this polynomial are ##\alpha_1 = \sqrt{2} + \sqrt{3}, \ \ \alpha_2 = \sqrt{2} - \sqrt{3}, \ \ \alpha_3 = - \sqrt{2} + \sqrt{3}, \ \ \alpha_4 = - \sqrt{2} - \sqrt{3}## ..."

Can someone please explain exactly why these are roots of the minimal polynomial ##m_{ \alpha , \mathbb{Q} } (x) = x^4 - 10x^2 + 1##, and how we would go about determining these roots methodically in the first place?
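One methodical route to both the polynomial and its roots, sketched here as a standard computation (Lovett's own derivation may differ): square ##\alpha##, isolate the remaining radical, square again, then solve the resulting quadratic in ##x^2##. The four solutions ##\pm(\sqrt{3} + \sqrt{2})## and ##\pm(\sqrt{3} - \sqrt{2})## are exactly ##\alpha_1, \alpha_4## and ##\alpha_3, \alpha_2##.

```latex
\begin{aligned}
\alpha &= \sqrt{2} + \sqrt{3} \\
\alpha^2 &= 2 + 2\sqrt{6} + 3 = 5 + 2\sqrt{6} \\
(\alpha^2 - 5)^2 &= (2\sqrt{6})^2 = 24 \\
\alpha^4 - 10\alpha^2 + 25 &= 24
  \quad\Longrightarrow\quad \alpha^4 - 10\alpha^2 + 1 = 0
\end{aligned}

\text{With } y = x^2:\quad y^2 - 10y + 1 = 0
  \;\Longrightarrow\; y = \frac{10 \pm \sqrt{96}}{2} = 5 \pm 2\sqrt{6}

(\sqrt{3} \pm \sqrt{2})^2 = 5 \pm 2\sqrt{6}
  \;\Longrightarrow\; x = \pm(\sqrt{3} + \sqrt{2}) \ \text{or}\ x = \pm(\sqrt{3} - \sqrt{2})

\text{Check: } (x - \alpha_1)(x - \alpha_4)(x - \alpha_2)(x - \alpha_3)
  = \bigl(x^2 - (5 + 2\sqrt{6})\bigr)\bigl(x^2 - (5 - 2\sqrt{6})\bigr)
  = (x^2 - 5)^2 - 24 = x^4 - 10x^2 + 1
```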

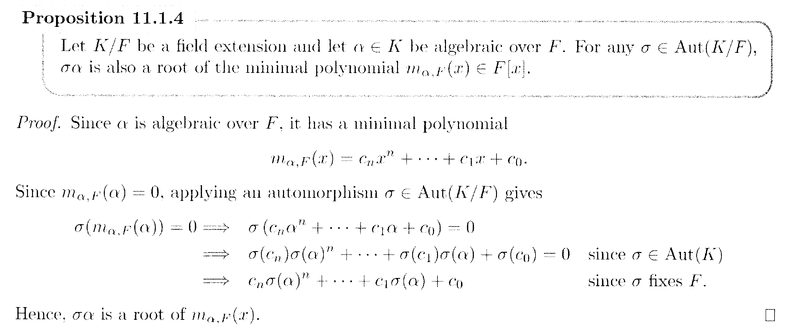

Question 2

In the above text from Lovett we read the following:

"... Let ##\sigma \in \text{Aut}(F/ \mathbb{Q} )##. Then according to Proposition 11.1.4, ##\sigma## must permute the roots of ##m_{ \alpha , \mathbb{Q} } (x)## ..."

Can someone explain what this means? How exactly does ##\sigma## permute the roots of ##m_{ \alpha , \mathbb{Q} } (x)##, and how exactly does Proposition 11.1.4 guarantee this?

NOTE: The above question refers to Proposition 11.1.4, so I am providing that proposition and its proof as follows:

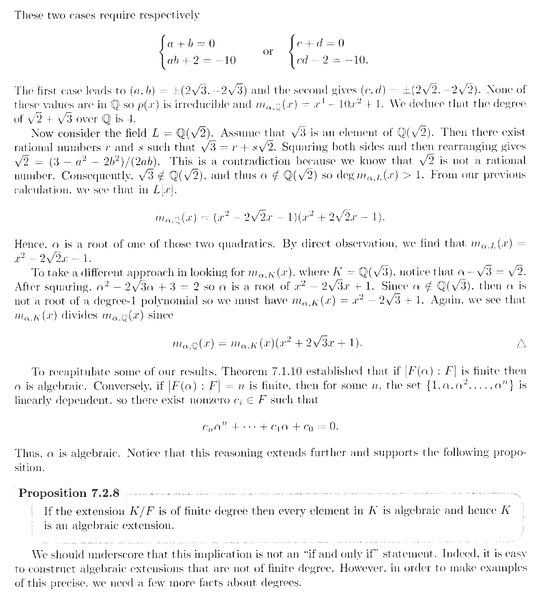
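The usual reasoning behind results like Proposition 11.1.4 (Lovett's exact wording is in the attached image and may differ) is: ##\sigma## fixes every rational coefficient of ##m_{ \alpha , \mathbb{Q} } (x)##, so for any root ##\beta## we get ##m_{ \alpha , \mathbb{Q} }(\sigma(\beta)) = \sigma(m_{ \alpha , \mathbb{Q} }(\beta)) = \sigma(0) = 0##. Thus ##\sigma## sends roots to roots, and since ##\sigma## is injective and there are only four roots, it permutes them. The Python sketch below makes this concrete. It assumes (not from the text) a hypothetical tuple representation of elements of ##\mathbb{Q}(\sqrt{2}, \sqrt{3})##, and takes as given that any such ##\sigma## sends ##\sqrt{2} \mapsto \pm\sqrt{2}## and ##\sqrt{3} \mapsto \pm\sqrt{3}## (by the same root-to-root argument applied to ##x^2 - 2## and ##x^2 - 3##). It then checks exactly that each of the four sign maps is multiplicative and permutes ##\alpha_1, \dots, \alpha_4##.

```python
from fractions import Fraction
from itertools import product

# Hypothetical representation (not Lovett's): the tuple (a, b, c, d) stands for
# a + b*sqrt(2) + c*sqrt(3) + d*sqrt(6), an element of Q(sqrt(2), sqrt(3)).
def elt(a=0, b=0, c=0, d=0):
    return tuple(Fraction(x) for x in (a, b, c, d))

ZERO = elt()

def add(x, y):
    return tuple(p + q for p, q in zip(x, y))

def scale(k, x):
    return tuple(k * p for p in x)

def mul(x, y):
    # Uses sqrt2*sqrt3 = sqrt6, sqrt2*sqrt6 = 2*sqrt3, sqrt3*sqrt6 = 3*sqrt2.
    a1, b1, c1, d1 = x
    a2, b2, c2, d2 = y
    return (
        a1 * a2 + 2 * b1 * b2 + 3 * c1 * c2 + 6 * d1 * d2,
        a1 * b2 + b1 * a2 + 3 * (c1 * d2 + d1 * c2),
        a1 * c2 + c1 * a2 + 2 * (b1 * d2 + d1 * b2),
        a1 * d2 + d1 * a2 + b1 * c2 + c1 * b2,
    )

def m(x):
    # Exact evaluation of m(x) = x^4 - 10x^2 + 1.
    x2 = mul(x, x)
    return add(add(mul(x2, x2), scale(-10, x2)), elt(1))

def sigma(s2, s3):
    # Q-linear map fixing Q with sqrt2 -> s2*sqrt2 and sqrt3 -> s3*sqrt3,
    # hence sqrt6 -> s2*s3*sqrt6 (s2, s3 are +1 or -1).
    def apply(x):
        a, b, c, d = x
        return (a, s2 * b, s3 * c, s2 * s3 * d)
    return apply

roots = {
    "alpha1": elt(0, 1, 1),    #  sqrt2 + sqrt3
    "alpha2": elt(0, 1, -1),   #  sqrt2 - sqrt3
    "alpha3": elt(0, -1, 1),   # -sqrt2 + sqrt3
    "alpha4": elt(0, -1, -1),  # -sqrt2 - sqrt3
}
name_of = {value: name for name, value in roots.items()}
basis = [elt(1), elt(0, 1), elt(0, 0, 1), elt(0, 0, 0, 1)]

# Every alpha_i is an exact root of m.
for name, r in roots.items():
    assert m(r) == ZERO, name

for s2, s3 in product((1, -1), repeat=2):
    sg = sigma(s2, s3)
    # Multiplicative on the basis, hence (by bilinearity) a ring homomorphism.
    for x in basis:
        for y in basis:
            assert sg(mul(x, y)) == mul(sg(x), sg(y))
    # Each root maps to a root, and no two roots share an image: a permutation.
    perm = {name: name_of[sg(r)] for name, r in roots.items()}
    assert sorted(perm.values()) == sorted(roots)
    print(f"sqrt2 -> {s2:+d}*sqrt2, sqrt3 -> {s3:+d}*sqrt3:", perm)
```

For example, the choice ##\sqrt{2} \mapsto \sqrt{2}, \ \sqrt{3} \mapsto -\sqrt{3}## swaps ##\alpha_1## with ##\alpha_2## and ##\alpha_3## with ##\alpha_4##. Exact ##Fraction## arithmetic is used so that "is a root" means equal to zero, not merely close to it.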

Question 3

In the above text from Lovett we read the following:

"... In Example 7.2.7 we observed that ##\sqrt{2}, \sqrt{3} \in F## so all the roots of ##m_{ \alpha , \mathbb{Q} } (x)## are in ##F## ..."

Can someone please explain in simple terms exactly why and how we know that ##\sqrt{2}, \sqrt{3} \in F##?

NOTE: Lovett mentions Example 7.2.7, so I am providing the text of this example as follows:
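The text of Example 7.2.7 is not reproduced above, so here is one standard way to see it, assuming (as the quoted sentence suggests) that ##F = \mathbb{Q}(\alpha)## with ##\alpha = \sqrt{2} + \sqrt{3}##. Since ##F## is a field containing ##\alpha##, it contains ##\alpha^{-1}## and every rational combination of powers of ##\alpha##:

```latex
(\sqrt{3} + \sqrt{2})(\sqrt{3} - \sqrt{2}) = 3 - 2 = 1
  \;\Longrightarrow\; \alpha^{-1} = \sqrt{3} - \sqrt{2} \in F

\sqrt{3} = \tfrac{1}{2}\bigl(\alpha + \alpha^{-1}\bigr) \in F, \qquad
\sqrt{2} = \tfrac{1}{2}\bigl(\alpha - \alpha^{-1}\bigr) \in F

\text{Equivalently, using powers of } \alpha \text{ only: }
\alpha^3 = 11\sqrt{2} + 9\sqrt{3}, \quad
\sqrt{2} = \tfrac{1}{2}\bigl(\alpha^3 - 9\alpha\bigr), \quad
\sqrt{3} = \tfrac{1}{2}\bigl(11\alpha - \alpha^3\bigr)
```

Once ##\sqrt{2}## and ##\sqrt{3}## lie in ##F##, so do all four sums ##\pm\sqrt{2} \pm \sqrt{3}##, which is why every root of ##m_{ \alpha , \mathbb{Q} } (x)## lies in ##F##.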

I hope that someone can help with the above three questions.

Any help will be much appreciated.

Peter
