Discussion Overview

The discussion centers on deriving the variance of the particle number in Fermi-Dirac statistics, specifically how the mean particle number relates to the grand-canonical partition function. The scope covers theoretical statistical mechanics and the mathematical reasoning behind these results.

Discussion Character

- Technical explanation

- Mathematical reasoning

Main Points Raised

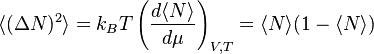

- One participant references an equation from Wikipedia regarding Fermi-Dirac statistics and seeks clarification on its derivation.

- Another participant suggests expressing the mean particle number in terms of the grand-canonical partition function.

- A third participant outlines the derivation of the variance in particle number, starting with the definition of the variance and relating it to the grand-canonical partition function.

- This participant provides formulas for the mean particle number and the mean of its square, indicating that both can be derived from the grand-canonical partition function.

- They emphasize the importance of taking derivatives at constant volume and temperature for the derivations to hold.

- A later reply expresses gratitude for the provided explanation and formulas.
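
The derivation outlined in the points above can be sketched as follows. This is a reconstruction under stated assumptions, not a transcript of the participants' formulas: the symbols $Z$ (grand-canonical partition function), $\beta = 1/(k_B T)$, and the microstate labels $E_i$, $N_i$ are notation chosen here, and all $\mu$-derivatives are taken at constant $T$ and $V$.

```latex
% Assumed notation: Z = grand-canonical partition function, beta = 1/(k_B T).
% Each term in the sum is a Gibbs factor; all mu-derivatives are at fixed T, V.
\begin{align}
Z(T,V,\mu) &= \sum_i e^{-\beta (E_i - \mu N_i)}, \\
\langle N \rangle &= \frac{1}{\beta Z}\,\frac{\partial Z}{\partial \mu}
                   = \frac{1}{\beta}\,\frac{\partial \ln Z}{\partial \mu}, \\
\langle N^2 \rangle &= \frac{1}{\beta^2 Z}\,\frac{\partial^2 Z}{\partial \mu^2}, \\
\sigma_N^2 &= \langle N^2 \rangle - \langle N \rangle^2
            = \frac{1}{\beta^2}\,\frac{\partial^2 \ln Z}{\partial \mu^2}
            = k_B T\,\frac{\partial \langle N \rangle}{\partial \mu}.
\end{align}
% Single fermionic level of energy epsilon (occupation n = 0 or 1):
\begin{align}
Z &= 1 + e^{-\beta(\varepsilon - \mu)}, &
\langle n \rangle &= \frac{1}{e^{\beta(\varepsilon - \mu)} + 1}, &
\sigma_n^2 &= \langle n \rangle \bigl(1 - \langle n \rangle\bigr).
\end{align}
```

The step from the variance to $\partial^2 \ln Z / \partial \mu^2$ uses $\partial^2 \ln Z/\partial\mu^2 = Z''/Z - (Z'/Z)^2$, which is why both the mean and the mean square are needed.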

Areas of Agreement / Disagreement

The discussion contains no explicit disagreements. It also does not state a formal consensus on the derivation: participants contribute different aspects of it while still exploring the topic.

Contextual Notes

The results depend on the assumptions about the conditions under which the derivatives are taken, and on the definitions adopted for the grand-canonical partition function and the Gibbs factors.
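
As a hedged numerical illustration of why these conditions matter, the sketch below checks, for a single fermionic level, that the variance computed directly from the Gibbs factors agrees with $k_B T\,\partial\langle n\rangle/\partial\mu$ when the derivative is taken with the temperature held fixed. The function names, the single-level model, and the parameter values are assumptions made for this example and do not come from the thread.

```python
import math


def mean_occupation(eps, mu, kT):
    """Fermi-Dirac mean occupation of one level with energy eps."""
    return 1.0 / (math.exp((eps - mu) / kT) + 1.0)


def variance_from_states(eps, mu, kT):
    """Variance from the two Gibbs factors (n = 0 and n = 1)."""
    w0 = 1.0                           # Gibbs factor for the empty level
    w1 = math.exp(-(eps - mu) / kT)    # Gibbs factor for the occupied level
    z = w0 + w1                        # single-level grand partition function
    mean = w1 / z
    mean_sq = w1 / z                   # n**2 == n because n is 0 or 1
    return mean_sq - mean ** 2


def variance_from_derivative(eps, mu, kT, h=1e-6):
    """kT * d<n>/d(mu) by central difference, with kT held fixed."""
    dn_dmu = (mean_occupation(eps, mu + h, kT)
              - mean_occupation(eps, mu - h, kT)) / (2.0 * h)
    return kT * dn_dmu


if __name__ == "__main__":
    eps, mu, kT = 1.0, 0.8, 0.1        # illustrative values, arbitrary units
    print(variance_from_states(eps, mu, kT))
    print(variance_from_derivative(eps, mu, kT))
```

If `kT` were allowed to vary together with `mu` inside the finite difference, the two numbers would no longer be expected to agree, which is the practical content of the constant-temperature condition.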