Discussion Overview

The discussion concerns the mathematical statement that 0.999... equals 1. Participants examine the reasoning behind this equality, its implications, and possible objections to it. The scope covers mathematical reasoning and conceptual clarification.

Discussion Character

- Debate/contested

- Mathematical reasoning

Main Points Raised

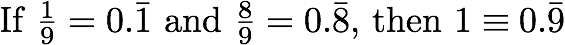

- One participant asserts that 0.999... equals 1, interpreting the decimal as the infinite geometric series 9/10 + 9/100 + 9/1000 + ..., whose sum is 1.

- The same participant argues that no positive distance separates 0.999... from 1, so the two expressions denote the same number.

- Concerns are raised about the existence of two different expressions for the same number, which the participant addresses by comparing it to other equivalent expressions.

- Another participant points out that the original question posed may differ from the title, indicating a potential misunderstanding.

- A reference to an existing thread is made, suggesting that the topic has been discussed previously.
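
The geometric-series and distance arguments above can be sketched as a short derivation. This is the standard textbook form, not necessarily the participant's exact wording. The symbols $s_n$ (the $n$-th partial sum) and $\varepsilon$ are notation introduced here for illustration, and the argument assumes the usual real number system.

$$
s_n = \sum_{k=1}^{n} \frac{9}{10^k} = 1 - \frac{1}{10^n},
\qquad
0.999\ldots := \lim_{n \to \infty} s_n = 1 .
$$

For the distance argument, note that $s_n \le 0.999\ldots \le 1$ for every $n$, so

$$
0 \le 1 - 0.999\ldots \le 1 - s_n = \frac{1}{10^n} \quad \text{for all } n \ge 1 .
$$

Given any $\varepsilon > 0$, some $n$ satisfies $10^{-n} < \varepsilon$, so the distance is smaller than every positive real number. The only non-negative real number with that property is 0, hence $1 - 0.999\ldots = 0$.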

Areas of Agreement / Disagreement

Participants do not reach a consensus: some support the equality of 0.999... and 1, while others raise questions and concerns about its implications.

Contextual Notes

Some arguments depend on how numbers are defined and on the properties of the real number system, particularly the concept of infinitesimals and the convergence of series.