Discussion Overview

The thread discusses examples of ChatGPT's performance, highlighting both successful and unsuccessful outputs. Participants share their experiences with the AI's responses to mathematical problems, programming tasks, and creative prompts, and consider what its reliance on word prediction implies for its logical reasoning.

Discussion Character

- Exploratory

- Technical explanation

- Debate/contested

- Mathematical reasoning

- Experimental/applied

Main Points Raised

- Some participants note that ChatGPT produces a mix of good and bad results, with specific examples illustrating its inconsistencies in mathematical calculations.

- One participant describes a successful instance in which ChatGPT identified a bug in Python code and suggested a rewrite, although it incorrectly claimed that the code lacked a return statement.

- Another participant shares an example in which ChatGPT misunderstood a question about Feynman diagrams, suggesting that it interpreted the terms in their everyday sense rather than in their specific scientific context.

- Some participants question whether ChatGPT handles complex technical subjects such as science and engineering as well as it handles more text-based fields such as law.

- Some participants express skepticism about ChatGPT's reasoning, suggesting that it sometimes appears to give answers at random in the hope of being correct.

- Examples of ChatGPT's performance on multiple-choice questions are shared, with mixed evaluations of its reasoning quality.

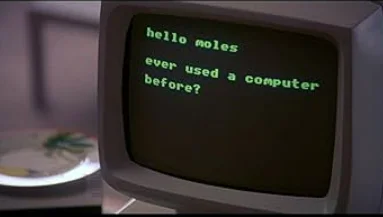

- Creative outputs, such as rephrasing historical texts in a whimsical style, are discussed, with varying opinions on the quality of the results.

- A participant mentions ChatGPT's struggles with solving elastic collision problems, illustrating its limitations in applying physics concepts accurately.

Areas of Agreement / Disagreement

Participants express a range of opinions on ChatGPT's performance, with no clear consensus on its capabilities. Some examples are praised, while others are criticized, indicating ongoing debate about its reliability and effectiveness in different contexts.

Contextual Notes

Limitations in ChatGPT's reasoning and understanding of context are highlighted, particularly in technical subjects. Participants note that its responses may be driven by how commonly terms are used in everyday language rather than by their specific scientific meanings.

Who May Find This Useful

This discussion may be of interest to users exploring AI capabilities in problem-solving, programming, and creative writing, as well as those evaluating the reliability of AI in technical fields.
