Lajka

Hi again,

I don't want it to seem like I'm spamming topics here, but I was hoping I could get help with this dilemma, too.

So, let's say that in a 2-dimensional affine space we have two non-orthogonal, linearly independent vectors, and we also pick some point O as the origin. This clearly gives a basis and a coordinate system for that space, thus making it an [tex]R^{2}[/tex] linear vector space.

Now, every vector [tex]v[/tex] can be written as [tex]v = \sum^{2}_{k=1} v_{k}e_{k}[/tex], where the [tex]v_k[/tex] are its coordinates with respect to this non-orthogonal basis [tex]\{ e_{k} \}[/tex].

My question is: how do I construct the dot product here?

Let me explain my confusion.

It's clear that the coordinates of [tex]e_{1}[/tex] will be [tex][1 0]^{t}[/tex], and those of [tex]e_{2}[/tex] will be [tex][0 1]^{t}[/tex]. So, if I just multiply the coordinates and sum them, I get zero! That can't be right, because the dot product must not depend on the choice of basis. The dot product of these two vectors must be non-zero.
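To see the clash concretely, suppose (purely as an assumption) that the two basis vectors were drawn in a frame we already trust as orthonormal, with hypothetical ambient components e1 = (1, 0) and e2 = (1, 1). Multiplying their coordinates with respect to the basis itself gives 0, while the ordinary componentwise dot product in the trusted frame gives 1. A minimal Python sketch; `E1`, `E2` and `naive_coordinate_dot` are illustrative names:

```python
# Hypothetical basis vectors, written in an ASSUMED ambient orthonormal (Cartesian) frame.
E1 = (1.0, 0.0)
E2 = (1.0, 1.0)

def naive_coordinate_dot(u, v):
    """Multiply components pairwise and sum; this equals the dot product only in an orthonormal basis."""
    return sum(a * b for a, b in zip(u, v))

# Coordinates of e1 and e2 with respect to the basis {e1, e2} itself.
coords_e1 = (1.0, 0.0)
coords_e2 = (0.0, 1.0)

naive = naive_coordinate_dot(coords_e1, coords_e2)  # uses basis coordinates
ambient = naive_coordinate_dot(E1, E2)              # uses components in the trusted frame
print(naive, ambient)
```

The two numbers differ because the basis {e1, e2} is not orthonormal, so summing products of its coordinates is not the dot product.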

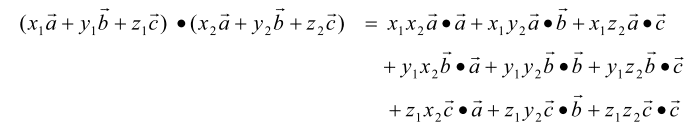

Then I found http://fatman.cchem.berkeley.edu/xray/VectorSpaces.pdf, and I liked it. Especially this

and

However, here's the problem. This matrix M, which the paper calls the metric tensor, uses the lengths of our non-orthogonal basis vectors and the angles between them to calculate its elements. But length (norm) and angle are concepts I am supposed to define later, using the dot product! How can he use them? I don't know what lengths and angles are yet!
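For reference, the entries of such a metric tensor are the pairwise dot products of the basis vectors, M_ij = e_i · e_j = |e_i| |e_j| cos θ_ij, and the dot product of two vectors given by their basis coordinates is then u · v = Σ_ij u_i M_ij v_j. The sketch below assumes, as a working hypothesis, that the basis vectors are known in some ambient orthonormal frame (hypothetical values e1 = (1, 0), e2 = (1, 1)), computes M from them, recovers the 45° angle from M's entries, and checks that the coordinate formula with M agrees with the plain ambient dot product. All function names are illustrative:

```python
import math

# Hypothetical basis vectors in an ASSUMED ambient orthonormal frame
# (as if measured on the paper with a ruler and protractor).
BASIS = [(1.0, 0.0), (1.0, 1.0)]

def dot(u, v):
    """Componentwise dot product; meaningful only for components in an orthonormal frame."""
    return sum(a * b for a, b in zip(u, v))

def metric_tensor(basis):
    """Metric tensor (Gram matrix) M[i][j] = e_i . e_j, computed in the ambient frame."""
    return [[dot(ei, ej) for ej in basis] for ei in basis]

def to_ambient(coords, basis):
    """Convert coordinates w.r.t. the basis into ambient components: v = sum_k v_k e_k."""
    dim = len(basis[0])
    return tuple(sum(c * e[i] for c, e in zip(coords, basis)) for i in range(dim))

def dot_with_metric(u, v, M):
    """u . v = sum_ij u_i M_ij v_j, where u and v are coordinates w.r.t. the basis."""
    n = len(M)
    return sum(u[i] * M[i][j] * v[j] for i in range(n) for j in range(n))

M = metric_tensor(BASIS)  # [[1.0, 1.0], [1.0, 2.0]]

# The same entries expressed through lengths and the angle: M_12 = |e1| |e2| cos(theta).
len1, len2 = math.sqrt(M[0][0]), math.sqrt(M[1][1])
theta = math.degrees(math.acos(M[0][1] / (len1 * len2)))  # 45 degrees for this basis

u, v = (2.0, -1.0), (0.5, 3.0)  # coordinates w.r.t. BASIS
print(dot_with_metric(u, v, M), dot(to_ambient(u, BASIS), to_ambient(v, BASIS)))
```

Both printed values agree, which is the sense in which M carries all the dot-product information once the basis vectors' lengths and mutual angle are known.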

Look at this

How do I know what ab or ba is? Or a^2, for that matter, the squared norm? I'm TRYING to define the inner product here, and they're asking what ab is! I don't know yet! All I know is that, in this case, [tex]a = (1 0 0)^{T}[/tex] and [tex]b = (0 1 0)^{T}[/tex], because they're basis vectors and these are their coordinates w.r.t. themselves! And that doesn't tell me anything.

Somebody has to tell me what ab, or bc, or any other combination is, right? How can I work that out by myself? I don't understand. Should I take a ruler and measure them on the paper where I supposedly drew them?
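In crystallography, for example, the metric tensor of a unit cell is conventionally written in terms of the lattice parameters: the cell lengths a, b, c and the angles α (between b and c), β (between a and c) and γ (between a and b). A minimal Python sketch of that construction, using made-up cell parameters; `lattice_metric` and `inner` are illustrative names, not a library API:

```python
import math

def lattice_metric(a, b, c, alpha, beta, gamma):
    """Metric tensor G[i][j] = e_i . e_j from cell lengths and angles in degrees
    (alpha between b and c, beta between a and c, gamma between a and b)."""
    ca, cb, cg = (math.cos(math.radians(x)) for x in (alpha, beta, gamma))
    return [
        [a * a, a * b * cg, a * c * cb],
        [a * b * cg, b * b, b * c * ca],
        [a * c * cb, b * c * ca, c * c],
    ]

def inner(u, v, G):
    """u . v = sum_ij u_i G_ij v_j for coordinates u, v w.r.t. the cell vectors."""
    return sum(u[i] * G[i][j] * v[j] for i in range(3) for j in range(3))

# Hypothetical "measured" cell parameters (lengths in arbitrary units).
G = lattice_metric(5.0, 6.0, 7.0, 90.0, 90.0, 60.0)

a_vec, b_vec = (1, 0, 0), (0, 1, 0)  # coordinates of the cell vectors a and b
print(inner(a_vec, b_vec, G))  # a . b = a * b * cos(gamma), approximately 15
print(inner(a_vec, a_vec, G))  # a . a = a^2
```

Here the numbers ab, a^2 and so on are inputs supplied from outside (in that setting, from measured cell parameters) rather than something the coordinates themselves can produce.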

For orthonormal basis vectors, they usually just tell you something like "oh, yeah, [tex]e_{k} \cdot e_{j} = \delta_{kj}[/tex]", but how do THEY know this? How do we even pick orthogonal basis vectors in the very beginning? How do we know they're orthogonal? We can't use coordinates, since these are the basis vectors (we defined coordinates with respect to them, so that's useless!), and we don't have lengths and angles; all of that comes after we define the dot product.
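Once some inner product is available, orthonormal basis vectors do not have to be guessed: the Gram-Schmidt process builds them from any basis. A minimal sketch, assuming the inner product is given by a metric matrix M acting on coordinates w.r.t. the original basis; the particular M = [[1, 1], [1, 2]] and the function names are hypothetical:

```python
import math

def inner(u, v, M):
    """u . v = sum_ij u_i M_ij v_j for coordinates w.r.t. the original basis."""
    n = len(M)
    return sum(u[i] * M[i][j] * v[j] for i in range(n) for j in range(n))

def gram_schmidt(vectors, M):
    """Orthonormalize vectors (given in basis coordinates) w.r.t. the inner product defined by M."""
    result = []
    for v in vectors:
        w = list(v)
        for f in result:
            c = inner(w, f, M)  # component of w along the already-orthonormal vector f
            w = [wi - c * fi for wi, fi in zip(w, f)]
        length = math.sqrt(inner(w, w, M))
        result.append([wi / length for wi in w])
    return result

M = [[1.0, 1.0], [1.0, 2.0]]  # hypothetical metric: e1 . e1 = 1, e1 . e2 = 1, e2 . e2 = 2
f1, f2 = gram_schmidt([(1.0, 0.0), (0.0, 1.0)], M)
print(f1, f2)  # [1.0, 0.0] [-1.0, 1.0], i.e. f1 = e1 and f2 = -e1 + e2
```

Note that "orthogonal" here is only meaningful relative to the chosen M: a different M would produce a different orthonormal pair.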

If we want to find out whether my basis vectors are orthogonal, we have to compute the dot product. If we want to define the dot product, we have to find the metric tensor M. If we want to find M, we need the angles between our basis vectors. And if we want the angles, we need the dot product!
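One way to read this chain is that M is not derived but chosen: any symmetric, positive-definite matrix of basis-vector products defines an inner product, and lengths, angles and orthogonality then follow from it. A minimal sketch under that assumption, comparing two hypothetical choices of M for the same pair of basis vectors; the function names are illustrative:

```python
import math

def is_inner_product_metric(M, tol=1e-12):
    """A 2x2 matrix defines an inner product iff it is symmetric and positive definite.
    For 2x2, Sylvester's criterion reduces this to M[0][0] > 0 and det(M) > 0."""
    symmetric = abs(M[0][1] - M[1][0]) <= tol
    det = M[0][0] * M[1][1] - M[0][1] * M[1][0]
    return symmetric and M[0][0] > 0 and det > 0

def inner(u, v, M):
    return sum(u[i] * M[i][j] * v[j] for i in range(2) for j in range(2))

def norm(u, M):
    return math.sqrt(inner(u, u, M))

def angle_deg(u, v, M):
    return math.degrees(math.acos(inner(u, v, M) / (norm(u, M) * norm(v, M))))

e1, e2 = (1.0, 0.0), (0.0, 1.0)  # the basis vectors in their own coordinates

# Two hypothetical choices of metric for the same pair of basis vectors.
choices = {
    "identity": [[1.0, 0.0], [0.0, 1.0]],  # declares e1, e2 orthonormal
    "oblique": [[1.0, 1.0], [1.0, 2.0]],   # |e1| = 1, |e2| = sqrt(2), 45 degrees apart
}
results = {}
for name, M in choices.items():
    assert is_inner_product_metric(M)
    results[name] = (inner(e1, e2, M), angle_deg(e1, e2, M))
    print(name, results[name])

# A symmetric but indefinite matrix is rejected: it cannot serve as a dot product.
print(is_inner_product_metric([[1.0, 2.0], [2.0, 1.0]]))
```

With the identity choice, e1 and e2 come out orthogonal by definition; with the oblique choice, the same coordinate vectors are 45 degrees apart.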

I'm in a loop here, please help me escape.

Long story short: I'm trying to define a dot product on my vector space using two basis vectors I picked arbitrarily in affine space, along with some origin O. But to do that, I'm asked, in the middle of the process, to say what the dot product between my basis vectors is. I don't know; that's the point!

So, where am I wrong here?
