Happiness

From the following definition, it seems that the uncertainty principle is an epistemological statement.

"Heisenberg's uncertainty principle, is any of a variety of mathematical inequalities[1] asserting a fundamental limit to the precision with which certain pairs of physical properties of a particle, known as complementary variables, such as position x and momentum p, can be known."

That is, we cannot know the position and momentum of a particle to infinite precision. But nothing is said about the ontology of the particle. So a particle could have a precise position ##x=0## and a precise momentum ##p=0##, as long as the values of both variables cannot be known to us simultaneously.
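
For concreteness, the inequality usually meant for position and momentum is, as far as I understand it, the standard textbook (Kennard) form. The symbols ##\sigma_x## and ##\sigma_p## are my notation here, not part of the quoted definition; they denote the standard deviations of position and momentum in a given quantum state:

$$\sigma_x \, \sigma_p \ge \frac{\hbar}{2}$$

Read this way, setting ##\sigma_x = 0## and ##\sigma_p = 0## together would give ##0 \ge \hbar/2##, which is false, so the inequality excludes any state with both spreads equal to zero.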

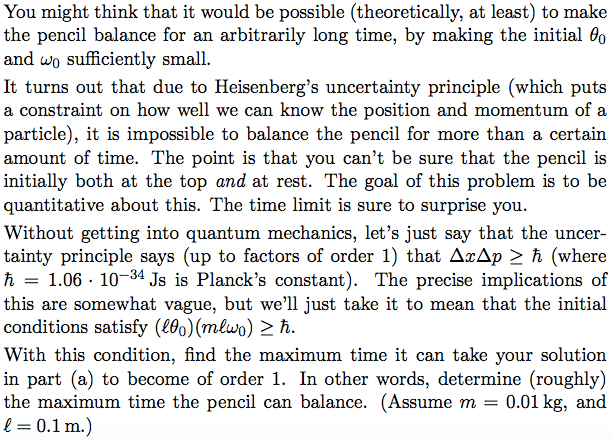

But the following exercise seems to suggest that the uncertainty principle is an ontological statement, that is, a particle cannot have a precise position and a precise momentum simultaneously.

So is the uncertainty principle an epistemological statement or an ontological one?
