SUMMARY

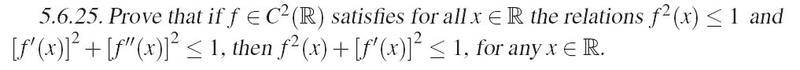

The discussion concerns a differentiability problem about a function f(x) that oscillates between -1 and 1. The key inequality f'(x)^2 + f''(x)^2 ≤ 1 means the first and second derivatives cannot both be large at the same point: |f'(x)| ≤ 1 and |f''(x)| ≤ 1 everywhere, and whenever one of them is close to 1 in absolute value the other must be close to 0. This restriction leads to contradictions if f(x) approaches the bounds of its oscillation. The difficulty lies in showing rigorously how the first derivative f'(x) must decrease to zero without violating these constraints.
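To make the constraint concrete, here is a minimal numerical sketch. It assumes the illustrative choice f(x) = sin(x), which is not taken from the thread. For this function f'(x)^2 + f''(x)^2 = cos(x)^2 + sin(x)^2 = 1, so the inequality holds with equality, and f' vanishes exactly where f reaches ±1. The function names `constraint_value` and `check_sine` are hypothetical helpers introduced only for this example.

```python
import math

def constraint_value(x):
    """Return f'(x)^2 + f''(x)^2 for the assumed example f(x) = sin(x)."""
    d1 = math.cos(x)    # f'(x)
    d2 = -math.sin(x)   # f''(x)
    return d1 * d1 + d2 * d2

def check_sine(num_points=1000, lo=-10.0, hi=10.0, tol=1e-12):
    """Sample [lo, hi] and confirm |f| <= 1 and f'^2 + f''^2 <= 1, up to tol."""
    for i in range(num_points + 1):
        x = lo + (hi - lo) * i / num_points
        if abs(math.sin(x)) > 1 + tol:
            return False
        if constraint_value(x) > 1 + tol:
            return False
    return True

# Report whether both conditions hold at every sampled point.
print(check_sine())
```

A sampled check like this only illustrates the constraint for one function; it is not a proof, and it says nothing about how a general f must behave near its bounds.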

PREREQUISITES

- Understanding of calculus concepts, particularly derivatives and their properties.

- Familiarity with the behavior of oscillating functions.

- Knowledge of inequalities involving derivatives.

- Experience with geometric interpretations of mathematical problems.

NEXT STEPS

- Study the implications of the inequality f'(x)^2 + f''(x)^2 ≤ 1 in more depth.

- Explore geometric interpretations of differentiability in oscillating functions.

- Investigate advanced calculus techniques for proving properties of derivatives.

- Learn about theorems related to the behavior of functions constrained by their derivatives.

USEFUL FOR

Mathematics students, calculus instructors, and researchers in mathematical analysis who are interested in the properties of differentiable functions and their oscillatory behavior.