Okay guys, just want to follow up on all that was discussed here. I got my project to work without letting any of the magic blue smoke out.

I tried diving into the PN junction theory stuff, but that avenue was too complicated, and I really didn't want to have to buy some complicated flux capacitor / linear regulator 9000HD. So instead I spent a few hours running experiments with a multimeter, breadboard, and some LEDs until I had tested all of my assumptions.

Here are a couple of things I noticed doing my experiments:

-My LEDs must have all been from the same batch, as they were all very close in terms of brightness and voltage drop across them (we're talking [itex]\pm[/itex]0.01 V). I tested them in series strings of 2, 3, all the way up to 6, and checked the voltage across each. I also wired all of them in parallel across 2 V and didn't notice any dim/bright ones.

-The most interesting result from my tests was that the voltage divided evenly across the string: every LED in a string saw the same voltage drop as its neighbors ([itex]\pm[/itex]0.01 V), whether there were 3, 4, 5, or 6 in series.

-With an 11 V supply and 6 LEDs in series, each LED got 1.75 V and the resistor got 0.08 V. I was surprised this circuit actually worked. It was very dim, but the LEDs were working. (Current was measured at 0.5 mA).

So those results were very interesting and helped me understand a little better how the voltage gets divided up through the series string of LEDs. I had originally assumed each LED would automatically just have 2 V across it, and if that couldn't be supplied, they wouldn't work. Well, that was sort of correct, but it's more like they're just very dim if the voltage is too low.

I think the biggest lightbulb that went off (pun fully intended) in my head was when I was falling asleep thinking about how the current in the circuit was determined - whether it was determined by the LEDs or the resistor. So then I realized it's sort of like a step input with some oscillation during the settling time. The voltages between the LEDs and the resistor affect each other, as does the current through the resistor and thus the current through the LEDs. I tried coming up with an equation to describe all these relations, but just decided to solve numerically.

So the first thing I did was take the current-voltage curve from the data sheet, enter a couple of points in Excel, and use a trendline to find a function for it. Then I was able to use that function to calculate the current drawn by an LED at a certain voltage.
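For anyone who wants to do the trendline step outside Excel, here's a minimal sketch. It assumes an exponential trendline (I = a·e^(bV)), which is only a guess at the trendline type, and the data points are made-up placeholders, not values from any real data sheet. The names `fit_exponential` and `led_current` are just for illustration.

```python
import math

def fit_exponential(points):
    """Least-squares fit of I = a * exp(b * V).

    Fits a straight line through ln(I) versus V, which is how an
    exponential trendline is commonly computed.
    points: list of (voltage_in_volts, current_in_amps) pairs.
    """
    n = len(points)
    xs = [v for v, _ in points]
    ys = [math.log(i) for _, i in points]
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    b = sxy / sxx
    a = math.exp(mean_y - b * mean_x)
    return a, b

# PLACEHOLDER points only: read the real ones off your own data sheet curve.
points = [(1.8, 0.001), (1.9, 0.005), (2.0, 0.020), (2.1, 0.050)]
a, b = fit_exponential(points)

def led_current(v_led):
    """Current (A) the fitted curve predicts at a given LED voltage (V)."""
    return a * math.exp(b * v_led)

print(f"I(1.95 V) is roughly {led_current(1.95) * 1000:.1f} mA with these placeholder points")
```

A polynomial trendline would work the same way in the later steps; all that matters is having some function that turns LED voltage into LED current.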

So what I did was input the supply voltage, set a resistance (say 150 Ω), then start my iterations using the voltage across the resistor as 2 V. Then the current through the resistor was calculated using V=IR (ignoring the LEDs for this part). Knowing from my experiments above that the LEDs get the remainder of the voltage divided evenly between them, and having the LED current-voltage function, I calculated the current the LEDs were trying to draw at the calculated voltage. So I then had two current values, one from the resistor calculation and one from the LED calculation, and at first they were way off. So I iterated on the voltage over the resistor (adjusted up or down) until the two values for current were less than 0.1 mA apart.

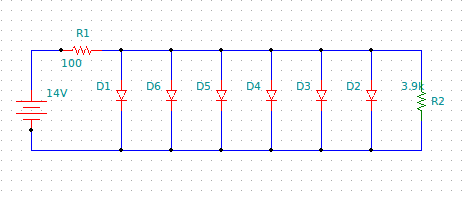
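The same iteration can be sketched in code. This is not the spreadsheet itself: the manual up/down adjustment is swapped for bisection, `led_current` is a hypothetical stand-in for the trendline function (20 mA at 2.0 V is an assumed placeholder, not data sheet values), and 6 LEDs in the string is assumed for illustration.

```python
import math

def led_current(v_led):
    """HYPOTHETICAL LED I-V model in amps; replace with your own fitted curve."""
    return 0.020 * math.exp(15.0 * (v_led - 2.0))

def solve_resistor_voltage(v_supply, r_ohms, n_leds, tol_amps=0.0001):
    """Find the resistor voltage where resistor current and LED current agree.

    Raising the resistor voltage raises the resistor current (V = IR) and
    lowers the voltage left for each LED, so the two currents cross exactly
    once and bisection can home in on that point.
    """
    lo, hi = 0.0, v_supply
    v_r = i_resistor = 0.0
    for _ in range(60):
        v_r = (lo + hi) / 2
        i_resistor = v_r / r_ohms                 # Ohm's law, LEDs ignored
        v_each_led = (v_supply - v_r) / n_leds    # remainder split evenly
        i_led = led_current(v_each_led)
        if abs(i_resistor - i_led) < tol_amps:    # within 0.1 mA: done
            break
        if i_resistor < i_led:
            lo = v_r   # LEDs want more current: resistor guess too low
        else:
            hi = v_r   # LEDs want less current: resistor guess too high
    return v_r, i_resistor

for v_supply in (14.0, 16.0):
    v_r, i = solve_resistor_voltage(v_supply, r_ohms=150.0, n_leds=6)
    print(f"{v_supply} V supply: {v_r:.2f} V across resistor, {i * 1000:.1f} mA")
```

The printed numbers depend entirely on the placeholder LED model, so they won't match the values in the post unless the real curve is plugged in.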

I couldn't think of any reason why that wouldn't be valid. So I did that iteration for both 14 V and 16 V until I got reasonable numbers for current (~20 mA @ 14 V and ~30 mA @ 16 V) using the same resistance value. Then I checked the power dissipated in the resistance and determined how many resistors to put in parallel to get the right total resistance and a low power dissipation in each resistor (~50 mW per resistor).
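The resistor sizing step is just P = I²R plus the rule for equal resistors in parallel. Here's a rough sketch, assuming the 150 Ω example value from earlier and the ~30 mA worst case at 16 V; the function name `parallel_resistor_plan` is made up for illustration.

```python
import math

def parallel_resistor_plan(current_amps, total_ohms, max_watts_each):
    """Split one resistance into N equal resistors in parallel.

    N equal resistors in parallel each need N times the total resistance,
    and each one dissipates 1/N of the total power.
    """
    total_watts = current_amps ** 2 * total_ohms          # P = I^2 * R
    count = math.ceil(total_watts / max_watts_each)       # round up to be safe
    each_ohms = total_ohms * count
    watts_each = total_watts / count
    return count, each_ohms, watts_each

# Assumed inputs: 30 mA worst case, 150 ohm total, 50 mW budget per resistor
count, each_ohms, watts_each = parallel_resistor_plan(0.030, 150, 0.050)
print(f"{count} resistors of {each_ohms} ohm, {watts_each * 1000:.0f} mW each")
```

With those assumed inputs the total is 135 mW, which works out to three 450 Ω resistors at 45 mW each. In practice you'd pick the nearest standard value and recheck the current.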

Those of you paying close attention know I originally was using the voltage range 12-14 V but am now saying 14-16 V. Well, I went to the connector and measured the voltage with my multimeter with the bike off but key on, and then with the bike idling (12 V, then 14 V). Then I revved it and noted it spiked to around 16 V. I decided I won't ever really care if the LEDs are dim when the bike is off (but key on). So I designed for 14-16 V.

Now on to the next project.