mcastillo356

TL;DR: Can't assume a premise of the reasoning

Hi, PF. I'd like to know how to get from an error formula for linearization that I understand to another one that I do not.

Error formula for linearization I understand:

If ##f''(t)## exists for all ##t## in an interval containing ##a## and ##x##, then there exists some point ##s## between ##a## and ##x## such that the error ##E(x)=f(x)-L(x)## in the linear approximation ##f(x)\approx{L(x)=f(a)+f'(a)(x-a)}## satisfies

##E(x)=\dfrac{f''(s)}{2}(x-a)^2##

(...)

Quote I don't understand:

The error in the linearization of ##f(x)## about ##x=a## can be interpreted in terms of differentials (...) as follows: if ##\Delta x=dx=x-a##, then the change in ##f(x)## as we pass from ##x=a## to ##x=a+\Delta x## is ##f(a+\Delta x)-f(a)=\Delta y##, and the corresponding change in the linearization ##L(x)## is ##f'(a)(x-a)=f'(a)dx##, which is just the value at ##x=a## of the differential ##dy=f'(x)dx##. Thus,

##E(x)=\Delta y-dy##

The error ##E(x)## is small compared with ##\Delta x## as ##\Delta x## approaches 0, as seen in Figure.
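To make ##E(x)=\Delta y-dy## concrete, here is a small worked case. The choice ##f(x)=x^2## is only an illustrative assumption of mine, not something taken from the book:

##\Delta y=f(a+\Delta x)-f(a)=(a+\Delta x)^2-a^2=2a\,\Delta x+(\Delta x)^2##

##dy=f'(a)\,dx=2a\,\Delta x##

##E(x)=\Delta y-dy=(\Delta x)^2##

Since ##f''(s)=2## for every ##s##, this agrees with ##E(x)=\dfrac{f''(s)}{2}(x-a)^2##, and ##\dfrac{E(x)}{\Delta x}=\Delta x\to 0## as ##\Delta x\to 0##.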

Attempt to understand ##E(x)=\Delta y-dy##:

In any approximation, the error is defined by

error = true value - approximate value

If the linearization of ##f## about ##a## is used to approximate ##f(x)## near ##x=a##, that is,

##f(x)\approx{L(x)=f(a)+f'(a)(x-a)}##

then the error ##E(x)## in this approximation is

##E(x)=f(x)-L(x)=f(x)-f(a)-f'(a)(x-a)##

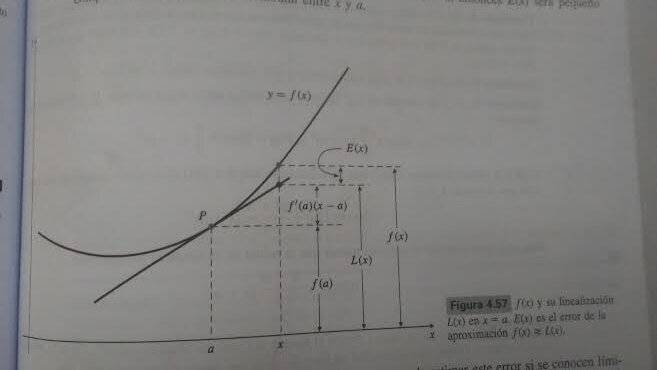

It is the vertical distance at ##x## between the graph of ##f## and the tangent line to that graph at ##x=a##, as shown in the figure. Observe that if ##x## is "near" ##a##, then ##E(x)## is small compared to the horizontal distance between ##x## and ##a##:

##\displaystyle\lim_{\Delta x \to{0}}{\dfrac{\Delta y -dy}{\Delta x}}=\displaystyle\lim_{\Delta x \to{0}}{\left({\dfrac{\Delta y}{\Delta x}-\dfrac{dy}{dx}}\right)}=\dfrac{dy}{dx}-\dfrac{dy}{dx}=0##
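The limit can also be checked numerically. This is only a rough sketch: the test function ##\sin x##, the point ##a=1##, and the helper name `linearization_error` are my own assumptions for illustration, not part of the book's text.

```python
import math

def linearization_error(f, fprime, a, dx):
    """Return E = delta_y - dy for a step dx away from a (hypothetical helper)."""
    delta_y = f(a + dx) - f(a)   # true change in f
    dy = fprime(a) * dx          # change along the tangent line at a
    return delta_y - dy

a = 1.0  # assumed point of linearization
ratios = []
for k in range(1, 6):
    dx = 10.0 ** (-k)
    err = linearization_error(math.sin, math.cos, a, dx)
    ratios.append(err / dx)      # E(x) / delta_x
    print(f"dx = {dx:.0e}   E = {err:.3e}   E/dx = {err / dx:.3e}")
```

In this sketch the ratio ##E/\Delta x## should shrink roughly in proportion to ##\Delta x##, which is what the formula ##E(x)=\dfrac{f''(s)}{2}(x-a)^2## predicts.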

Well, actually this is a fake attempt: both limits above are zero as ##\Delta x\rightarrow{0}##, but I'm confused by the premise of the reasoning:

##\Delta x=dx=x-a##. Is there any explanation for a dummy like me? I fear the answer might need nonstandard analysis. But the premise sounds like "if apple=pear=apple".
