Valour549

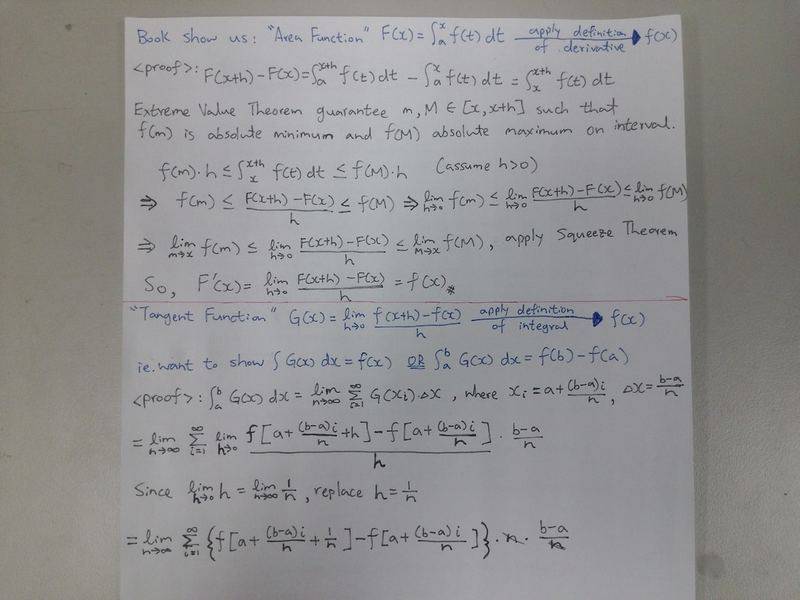

The proofs of the Fundamental Theorem of Calculus in the textbook I'm reading and those that I have found online, basically show us:

1) That when we apply the definition of the derivative to the integral of f (say F) below, we get f back.

[tex]F(x) = \int_a^x f(t) dt[/tex]

2) That any definite integral of f can be found using any of its anti-derivatives F and taking the difference when evaluated at the upper and lower limits.
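
In symbols, the two statements above can be written roughly as follows. This is only a restatement, assuming f is continuous on [a, b] (an assumption not stated above) and that F in the second line denotes any antiderivative of f:

[tex]\frac{d}{dx}\int_a^x f(t)\,dt = f(x)[/tex]

[tex]F' = f \ \text{on } [a,b] \quad\Longrightarrow\quad \int_a^b f(t)\,dt = F(b) - F(a)[/tex]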

However, I have not found a proof that starts from the derivative of f (say G = f'), applies the definition of the definite integral to it, and arrives back at f. In any case, this is my attempt at it (starting below the red line). Normally, when evaluating a definite integral using a Riemann sum, the rules of sigma notation are invoked to break the sum into smaller sums. But without an explicit function to work with in this case, I'm stuck and need some help.
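
One standard way to make a Riemann sum tractable without an explicit function is to choose the sample points with the Mean Value Theorem, so that the sum telescopes. The following is only a sketch, not necessarily the route taken in the attempt. It assumes that f is differentiable on [a, b] and that G = f' is Riemann integrable there; the partition points x_i, the widths Δx_i and the sample points c_i are notation introduced here for illustration.

Take a partition [tex]a = x_0 < x_1 < \dots < x_n = b[/tex] with [tex]\Delta x_i = x_i - x_{i-1}[/tex]. By the Mean Value Theorem, each subinterval contains a point [tex]c_i \in (x_{i-1}, x_i)[/tex] with

[tex]f(x_i) - f(x_{i-1}) = G(c_i)\,\Delta x_i[/tex]

Using these c_i as the sample points, the Riemann sum telescopes:

[tex]\sum_{i=1}^{n} G(c_i)\,\Delta x_i = \sum_{i=1}^{n} \left[ f(x_i) - f(x_{i-1}) \right] = f(b) - f(a)[/tex]

Since G is assumed integrable, every Riemann sum converges to the same limit as the largest Δx_i goes to 0, including the sums built from these particular c_i, so

[tex]\int_a^b G(t)\,dt = f(b) - f(a)[/tex]

Replacing b by a variable upper limit x gives [tex]\int_a^x G(t)\,dt = f(x) - f(a)[/tex], so integrating the derivative recovers f only up to the constant f(a).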
