Cade


This isn't a homework question per se, I'm just wondering about something in my textbook (Halliday & Resnick) that isn't clear to me:

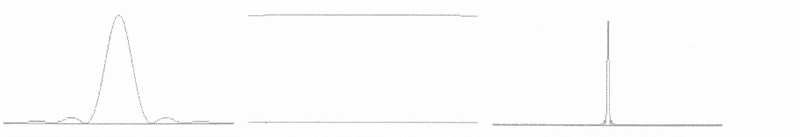

This figure shows intensity as a function of angle (0 degrees is the center) for three experiments that use single slits of different width.

The one on the right has a slit width far larger than the light's wavelength, so the central peak is very narrow. The one in the middle has a slit width far smaller than the light's wavelength, so the central peak is very wide. The one on the left has a slit width a bit larger than the light's wavelength, so the peak width is somewhere in between.

When I say that the slit width is far larger than the light's wavelength, I mean by a factor of 100. When I say that the slit width is far less than the light's wavelength, I also mean by a factor of 100.
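As a rough check on these widths, here is a minimal sketch assuming the standard far-field (Fraunhofer) single-slit result, where the first minimum sits at a·sin(θ) = λ and the relative intensity is (sin α / α)² with α = π·a·sin(θ)/λ. The function names are made up for illustration, and the formula is only an approximation once the slit is much narrower than the wavelength.

```python
import math

def first_minimum_angle_deg(a_over_lambda):
    """Angle of the first dark fringe from a*sin(theta) = lambda.

    Returns None when the slit is narrower than the wavelength,
    because sin(theta) would have to exceed 1 (no minimum exists).
    """
    s = 1.0 / a_over_lambda
    if s > 1.0:
        return None
    return math.degrees(math.asin(s))

def relative_intensity(theta_deg, a_over_lambda):
    """I(theta)/I(0) for an idealized single slit in the far field."""
    alpha = math.pi * a_over_lambda * math.sin(math.radians(theta_deg))
    if alpha == 0.0:
        return 1.0  # limit of (sin(alpha)/alpha)^2 as alpha -> 0
    return (math.sin(alpha) / alpha) ** 2

# Slit 100x wider than the wavelength, 5x wider, and 100x narrower.
for ratio in (100.0, 5.0, 0.01):
    print(ratio, first_minimum_angle_deg(ratio), relative_intensity(30.0, ratio))
```

With a slit 100 times the wavelength the first minimum lands well under one degree from the center, while for a slit 100 times smaller there is no minimum at all and the intensity at 30 degrees is still almost the same as at the center.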

1) I'm curious about the right-most image. If the slit is very wide and the incoming light is a wave with wavefronts parallel to the slit, hardly any of the light through the center would diffract, so most of the wave would pass through in the same direction. But if the intensity on the screen is very narrow when the light isn't diffracted, does this mean that the light in this example is from a thin beam (perhaps a laser)?

2) Would I be correct in saying that if the light in this example were a wave passing through a very wide slit, the intensity would be high and even, with diffraction starting at the edges where it diffracted off the sides of the slit?

3) If the slit is very narrow, the wave or beam would come out in a hemispherical shape (Huygens-Fresnel principle). This would give it a wide central diffraction maximum. In this case, because the slit is extremely narrow, I think it doesn't matter how "wide" the incoming light is. Is this correct?

