Math Amateur

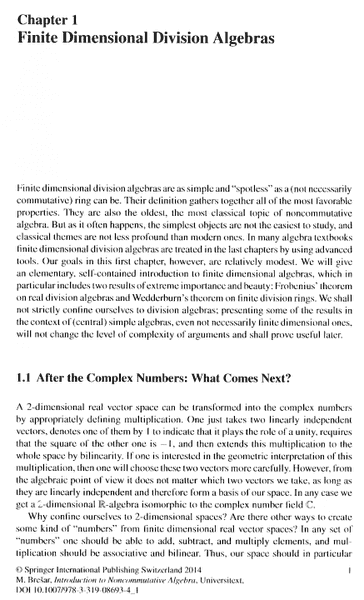

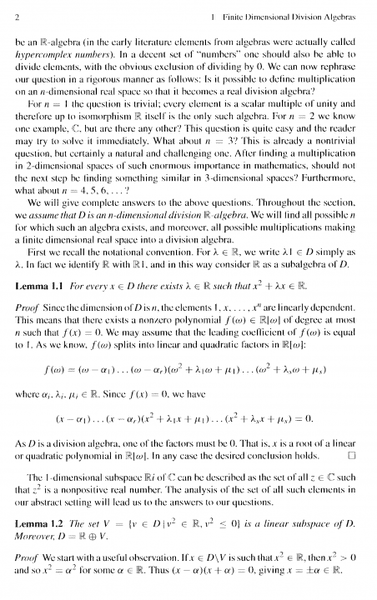

I am reading Matej Bresar's book, "Introduction to Noncommutative Algebra" and am currently focussed on Chapter 1: Finite Dimensional Division Algebras ... ...

I need help with an aspect of the proof of Lemma 1.1 ... ...

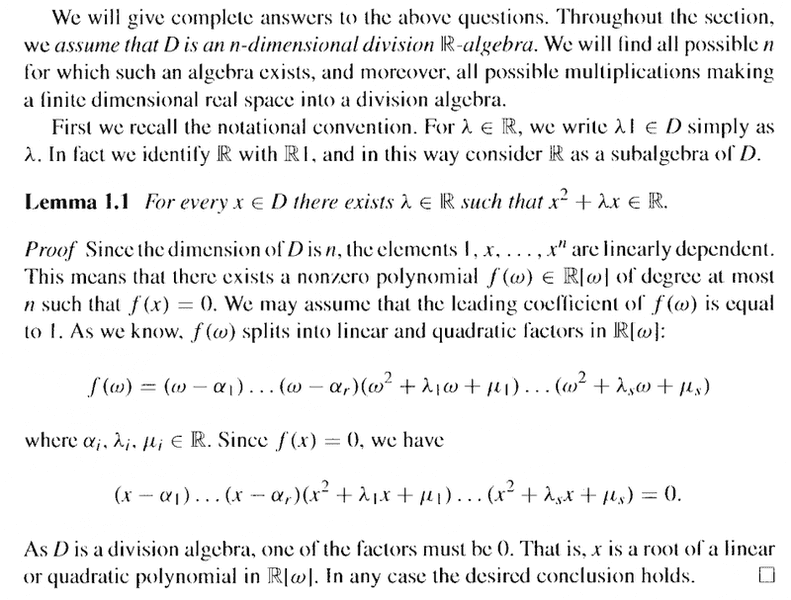

Lemma 1.1 reads as follows:

In the above text, at the start of the proof of Lemma 1.1, Bresar writes the following:

" ... ... Since the dimension of ##D## is ##n##, the elements ##1, x, \dots, x^n## are linearly dependent. This means that there exists a non-zero polynomial ##f( \omega ) \in \mathbb{R} [ \omega ]## of degree at most ##n## such that ##f(x) = 0## ... ... "

My question is as follows:

How exactly (rigorously and formally) does the fact that the elements ##1, x, \dots, x^n## are linearly dependent allow us to conclude that there exists a non-zero polynomial ##f( \omega ) \in \mathbb{R} [ \omega ]## of degree at most ##n## such that ##f(x) = 0## ... ?

Help will be much appreciated ...

Peter
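
For reference, here is a sketch of how the quoted step can be unpacked. It assumes, as the quote suggests, that ##D## is an ##n##-dimensional real vector space containing ##1## and the powers of ##x##. The scalars ##\lambda_0, \dots, \lambda_n## are names introduced here for illustration only; they are not Bresar's notation.

```latex
% Step 1: n+1 vectors in an n-dimensional real vector space are linearly
% dependent, so there exist real scalars, not all zero, with
\lambda_0 \cdot 1 + \lambda_1 x + \dots + \lambda_n x^n = 0,
\qquad (\lambda_0, \dots, \lambda_n) \neq (0, \dots, 0).

% Step 2: use the same scalars as the coefficients of a polynomial
f(\omega) := \lambda_0 + \lambda_1 \omega + \dots + \lambda_n \omega^n
\in \mathbb{R}[\omega].

% Step 3: a polynomial is zero only if all its coefficients are zero,
% and no power above \omega^n appears, so
f \neq 0, \qquad \deg f \le n.

% Step 4: evaluating f at x reproduces the dependence relation of Step 1
f(x) = \lambda_0 \cdot 1 + \lambda_1 x + \dots + \lambda_n x^n = 0.
```

If two of the listed powers happen to coincide, say ##x^i = x^j## with ##i < j \le n##, the same conclusion holds directly with ##f(\omega) = \omega^j - \omega^i##.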

=====================================================

In order for readers of the above post to appreciate the context of the post I am providing pages 1-2 of Bresar ... as follows ...
