leroyjenkens

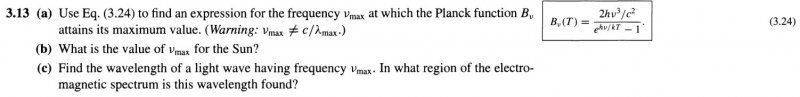

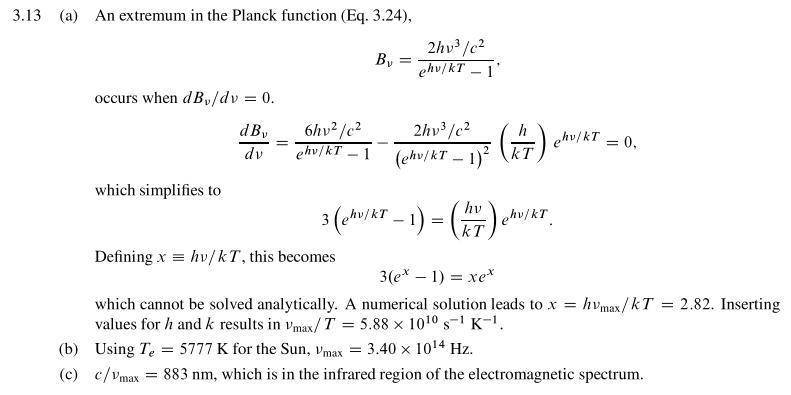

I've attached two pictures: the first is the question from the book, and the second is the answer from the solution manual. I have no idea how they got that answer. For part (a), the solution says the equation can't be solved analytically, so it has to be solved numerically. What does that mean? Do you just make up numbers to plug in? No value is given for T or for Vmax, yet they somehow get 2.82 as the answer. To get that answer for part (a), it seems they must have plugged in a value for Vmax, which is the thing we're trying to solve for, and a value for T, which hasn't been given.
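
For reference, here is a rough sketch of what "solving numerically" can look like. The images aren't reproduced here, so this rests on an assumption: that the problem asks for the peak of the Planck distribution in frequency. In that case, setting the derivative to zero gives the equation x = 3(1 - e^(-x)), where x = h·Vmax/(kT) is a dimensionless combination. That equation can't be rearranged to isolate x, but a root-finding routine such as bisection can narrow in on it. The function names `f` and `bisect` and the bracket [1, 5] are my own choices for illustration, not anything from the solution manual.

```python
import math


def f(x):
    # Assumed equation: x = 3 * (1 - exp(-x)), rewritten as f(x) = 0.
    # x stands for the dimensionless ratio h*Vmax / (k*T).
    return 3.0 * (1.0 - math.exp(-x)) - x


def bisect(func, lo, hi, tol=1e-10):
    """Find a root of func in [lo, hi] by repeatedly halving the interval."""
    f_lo = func(lo)
    if f_lo * func(hi) > 0:
        raise ValueError("func must change sign between lo and hi")
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        f_mid = func(mid)
        if f_lo * f_mid <= 0:
            hi = mid          # sign change is in the lower half
        else:
            lo = mid          # sign change is in the upper half
            f_lo = f_mid
    return 0.5 * (lo + hi)


# x = 0 is a trivial root, so the bracket starts at 1 to skip it.
root = bisect(f, 1.0, 5.0)
print(f"{root:.4f}")  # prints 2.8214
```

If that assumption about the problem is right, 2.82 would be the value of the ratio h·Vmax/(kT), not Vmax itself, which is why no number for T or Vmax has to be plugged in. The guesses made along the way are trial values of x that the method keeps refining, not made-up physical data.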