evinda

Gold Member

MHB

- 3,741

- 0

Hello! (Wave)

There are given three vectors $V_1, V_2, V_3$ of dimension $m$, the elements of which are real numbers. I want to write an algorithm that determines if there are three numbers, one of each of the matrices $V_1, V_2$ and $V_3$, that have sum equal to $0$.

Is it right so far?

What could I do in order to achieve time complexity $O(m^2)$? (Thinking)

There are given three vectors $V_1, V_2, V_3$ of dimension $m$, the elements of which are real numbers. I want to write an algorithm that determines if there are three numbers, one of each of the matrices $V_1, V_2$ and $V_3$, that have sum equal to $0$.

- Can you write an $O(m^3)$ algorithm?

- Can you write an $O(m^2 \log m)$ algorithm?

- Can you write an $O(m^2)$ algorithm?

- Can you find a quicker algorithm that $O(m^2)$ ?

-

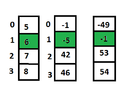

Code:Algorithm(V1,V2,V3){ // K contains all the possible sums of the vectors V1, V2, V3 l=0; while (l!=1){ for (i=0; i<m; i++){ for (j=0; j<m; j++){ for (k=0; k<m; k++){ if (K[i,j,k]==1) l=1; } } } } if (l==0) printf("No."); else printf("Yes."); }

Code:Algorithm(V1,V2,V3){ // K contains all the possible sum of the vectors V1, V2 s=0; while (m!=1){ for (i=0; i<m; i++){ for (j=0; j<m; j++){ l=Binarysearch(C,-K[i,j],0,n-1); if (l==1) s=1; } } } if (m==0) printf("No."); else printf("Yes."); }

Is it right so far?

What could I do in order to achieve time complexity $O(m^2)$? (Thinking)

Last edited: