Discussion Overview

The discussion concerns the treatment of variations in functional derivatives, specifically whether the variations ##\delta y## and ##\delta y'## may validly be moved outside the integral sign in variational calculus. Participants explore how the notation affects the argument and which quantities inside the integral depend on the integration variable.

Discussion Character

- Technical explanation

- Debate/contested

- Mathematical reasoning

Main Points Raised

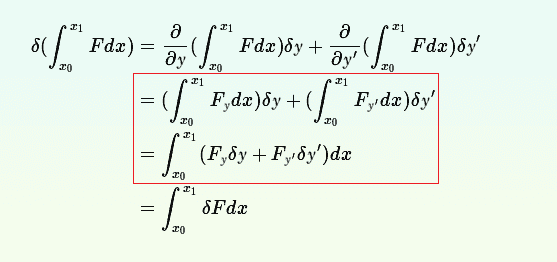

- Some participants assert that moving the variations ##\delta y## and ##\delta y'## outside the integral is incorrect, because both are functions of ##x##.

- Others propose that the notation can be misleading, conflating variables and functions, and suggest that the derivation should be approached with care regarding dependencies.

- A participant discusses the derivation of a functional and its variations, emphasizing the need to treat variations as independent when applying functional derivatives.

- Another participant suggests evaluating specific examples to clarify the implications of treating variables as independent versus dependent within integrals.

- There is mention of the Gâteaux differential and its role in understanding functional derivatives, indicating a more rigorous approach to the derivation process.
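
As a rough illustration of the Gâteaux-differential viewpoint mentioned above, the following is a minimal sketch and not the derivation any participant posted. It assumes a functional ##J[y] = \int_a^b L(x, y, y')\,dx## and writes the variation as ##\delta y = \varepsilon\,\eta(x)##; the symbols ##L##, ##\eta##, ##\varepsilon##, ##a## and ##b## are introduced here for illustration only.

$$
\delta J[y;\eta] = \left.\frac{d}{d\varepsilon} J[y + \varepsilon\eta]\right|_{\varepsilon=0}
= \int_a^b \left( \frac{\partial L}{\partial y}\,\eta(x) + \frac{\partial L}{\partial y'}\,\eta'(x) \right) dx
$$

Both ##\eta(x)## and ##\eta'(x)## depend on the integration variable, so in this form they stay under the integral sign. A specific case, in the spirit of the suggestion to evaluate examples: take ##L = y^2## on ##[0, 1]## with ##y(x) = x## and ##\eta(x) = x(1 - x)##. Then

$$
\delta J[y;\eta] = \int_0^1 2\,y(x)\,\eta(x)\,dx = \int_0^1 \left( 2x^2 - 2x^3 \right) dx = \frac{2}{3} - \frac{1}{2} = \frac{1}{6}
$$

which agrees with differentiating ##J[y + \varepsilon\eta] = \int_0^1 \left( x + \varepsilon\,x(1 - x) \right)^2 dx## with respect to ##\varepsilon## at ##\varepsilon = 0##. In this example the value ##1/6## comes from integrating the product ##y\,\eta## pointwise, so there is no single number that ##\eta## could be replaced by outside the integral without further assumptions.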

Areas of Agreement / Disagreement

Participants generally disagree on the validity of moving variation signs outside the integral. There is no consensus on the correct treatment of the notation and dependencies involved in the derivation.

Contextual Notes

Participants note that the notation used can lead to confusion, particularly in distinguishing between variables and functions. The discussion highlights the complexity of functional derivatives and the need for careful handling of dependencies in variational calculus.