kshitij

Homework Statement: What are the roots of the equation x=1+1/x?

Relevant Equations: x=(-b±√(b²-4ac))/(2a), when ax²+bx+c=0

Multiplying the given equation by x (with x≠0) and rearranging, we get x²-x-1=0, and the quadratic formula then gives x=(1+√5)/2 and x=(1-√5)/2.

Now, as the formula suggests, there are two possible values of x that satisfy the given equation.
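As a quick sanity check on these two values, here is a minimal Python sketch that applies the quadratic formula with a=1, b=-1, c=-1 and substitutes the results back into x=1+1/x. The variable names are only illustrative.

```python
import math

# Coefficients of x^2 - x - 1 = 0
a, b, c = 1.0, -1.0, -1.0

disc = b * b - 4 * a * c                      # discriminant, here 5
root_plus = (-b + math.sqrt(disc)) / (2 * a)   # (1+sqrt(5))/2
root_minus = (-b - math.sqrt(disc)) / (2 * a)  # (1-sqrt(5))/2

# Substitute back into the original form x = 1 + 1/x
for x in (root_plus, root_minus):
    print(x, 1 + 1 / x)
```

Each printed pair should agree up to floating-point rounding, which is consistent with both values satisfying the original equation.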

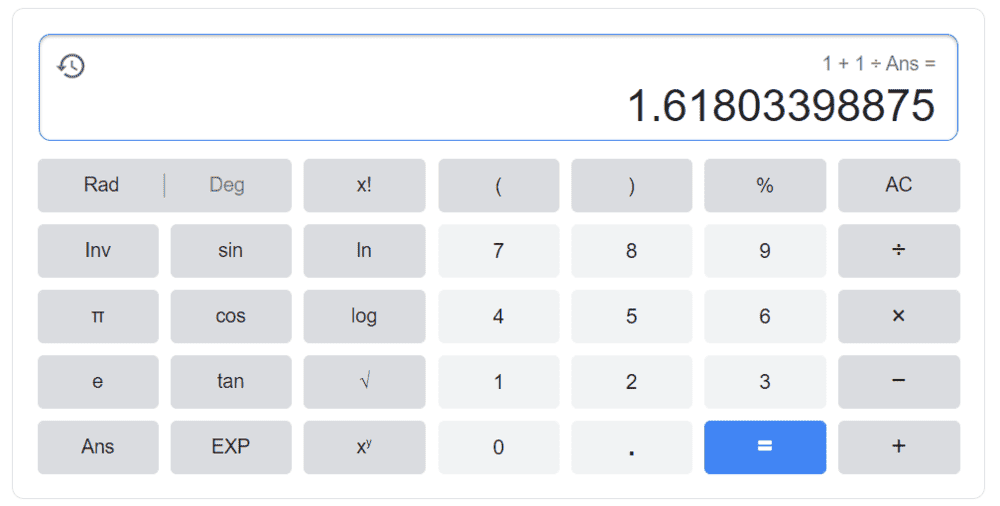

But now, if we enter

1+1/Ans

into any ordinary calculator and evaluate it repeatedly, we always reach this particular number, as shown.

Now obviously we cannot start this process with Ans set to 0.

But other than that, it seems that no matter which real starting value we choose, repeating this process on any calculator always ends at the number mentioned above.
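The calculator experiment described above can be reproduced with a short Python sketch. The function name `repeat_ans`, the step count, and the starting values are assumptions chosen for illustration, not anything a particular calculator does internally.

```python
import math

def repeat_ans(start, steps=100):
    """Mimic evaluating 1+1/Ans repeatedly, starting from Ans = start."""
    ans = start
    for _ in range(steps):
        ans = 1 + 1 / ans  # raises ZeroDivisionError if ans is ever exactly 0
    return ans

# Arbitrary starting values chosen for illustration
starts = [1.0, 2.0, -5.0, 100.0, 0.5]
results = [repeat_ans(s) for s in starts]

for s, r in zip(starts, results):
    print(s, "->", r)

print("(1+sqrt(5))/2 =", (1 + math.sqrt(5)) / 2)
```

A start such as -1 is left out on purpose: 1+1/(-1) is 0, so the next step would divide by zero, just like starting from 0 itself.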

This still makes some sense to me, because repeatedly feeding the result back into the expression 1+1/Ans amounts to something like this,

Ans=1+1/Ans

where Ans is the variable.

So we eventually end up back at our starting equation, whose roots were

(1+√5)/2 and (1-√5)/2

And the number that the calculator shows is also equal to (1+√5)/2. But my question is: why do we always end up at (1+√5)/2 and never at (1-√5)/2?

x=(1-√5)/2 also satisfies the original equation, so why does the calculator never show us the other root of this equation?
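To make this observation concrete, here is a small numerical sketch (an illustration only, not an explanation) that starts the same iteration very close to (1-√5)/2 and looks at where it is after a few steps and after many steps. The offset of 1e-9, the step counts, and the helper name `iterate` are all assumptions made for this example.

```python
import math

def iterate(x, steps):
    """Apply x -> 1 + 1/x the given number of times."""
    for _ in range(steps):
        x = 1 + 1 / x
    return x

small_root = (1 - math.sqrt(5)) / 2
large_root = (1 + math.sqrt(5)) / 2

start = small_root + 1e-9   # hypothetical tiny offset from the smaller root
early = iterate(start, 5)   # still close to the smaller root
late = iterate(start, 200)  # after many more repetitions

print("after 5 steps:  ", early, "distance from small root:", abs(early - small_root))
print("after 200 steps:", late, "distance from large root:", abs(late - large_root))
```

With these particular choices the value stays near the smaller root for the first few steps but ends up at the larger one, matching what the calculator shows.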

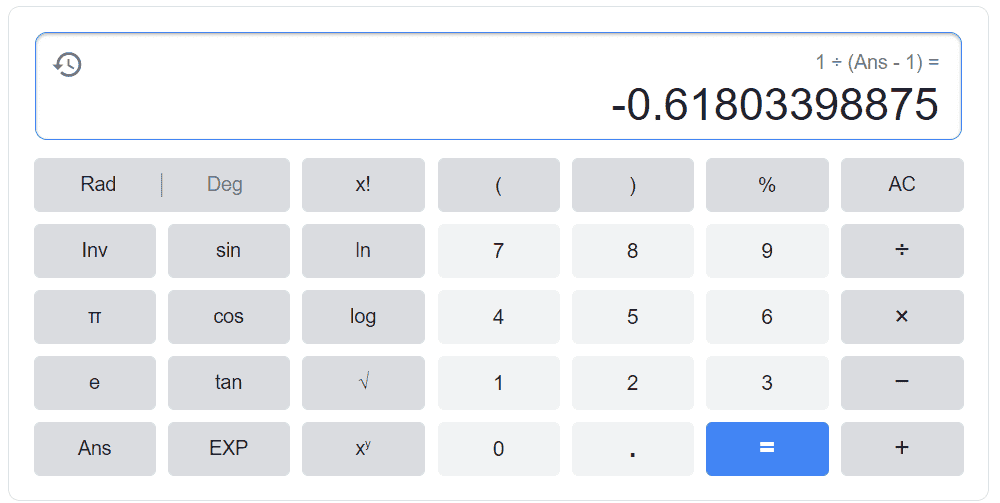

Now this got me thinking, and I tried manipulating the original equation into something like this (don't ask me why, because even I don't know; I was just playing around with random inputs like these when the result for this one surprised me),

x=1/(x-1)
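For reference, one way to get from the original equation to this form, assuming x≠0 and x≠1 so that every step is allowed:

```latex
\begin{align*}
x &= 1 + \frac{1}{x} \\
x - 1 &= \frac{1}{x} \\
x(x - 1) &= 1 \\
x &= \frac{1}{x - 1}
\end{align*}
```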

This equation is exactly the same as our original equation, the only difference being that in the original equation x cannot be 0, while in the modified version x cannot be 1. So now let's repeat another process in the calculator,

1/(Ans-1)

Again, by the same logic, we can see that the process we are carrying out actually looks like the equation,

Ans=1/(Ans-1)

The only difference this time is that we cannot start with Ans set to 1 or 2 (starting from 2 gives 1 on the next step).

Other than that, it seems that for any real starting value of Ans, this time we reach this particular number,

And this number is exactly equal to the other value of x in our original equation, which is (1-√5)/2
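The second experiment can be sketched the same way. As before, the function name `repeat_ans_alt`, the step count, and the starting values are illustrative assumptions.

```python
import math

def repeat_ans_alt(start, steps=100):
    """Mimic evaluating 1/(Ans-1) repeatedly, starting from Ans = start."""
    ans = start
    for _ in range(steps):
        ans = 1 / (ans - 1)  # raises ZeroDivisionError if ans is ever exactly 1
    return ans

# Arbitrary starting values chosen for illustration (1 and 2 are avoided)
alt_starts = [0.0, 5.0, -3.0, 10.0]
alt_results = [repeat_ans_alt(s) for s in alt_starts]

for s, r in zip(alt_starts, alt_results):
    print(s, "->", r)

print("(1-sqrt(5))/2 =", (1 - math.sqrt(5)) / 2)
```

For these starting values the printed results should all agree with (1-√5)/2 up to rounding, the opposite root from the one the first process settles on.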

So that is my question: why does the calculator have a favorite root for a particular function when more than one value satisfies the equation?

If both roots are equivalent, then half of the time we should have reached (1+√5)/2 and the other half of the time (1-√5)/2, at random.

Also, this isn't a usual homework question. I didn't know whom to ask, so I posted it here. I hope it doesn't break any rules.
