DoobleD


Third question about wave optics in two days, hope it's not too much.

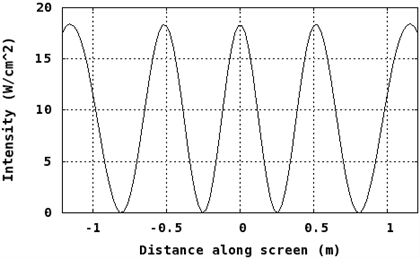

When we look at the intensity of light on the screen in a double-slit interference experiment (assuming negligible diffraction), we find something like this:

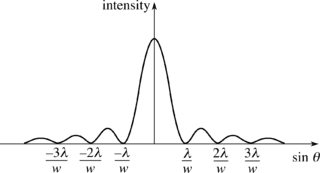

When we look at the intensity of light on the screen in a single-slit diffraction experiment, we find something like this:

In the first case, the intensity maxima all have the same height. In the second case, they decrease away from the center. Why this difference?
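To make the comparison concrete, here is a minimal sketch that evaluates the peak heights in both cases. It assumes the usual idealized textbook formulas: $I = I_0 \cos^2(\pi d \sin\theta / \lambda)$ for two infinitely narrow slits a distance $d$ apart, and $I = I_0 (\sin\beta / \beta)^2$ with $\beta = \pi a \sin\theta / \lambda$ for a single slit of width $a$ in the Fraunhofer limit. The wavelength, `d`, and `a` are illustrative values, not read off the figures, and the secondary single-slit maxima are placed at the common approximation $\sin\theta \approx (m + 1/2)\lambda / a$.

```python
import math

def double_slit_intensity(theta, d, wavelength, i0=1.0):
    """Idealized two-slit pattern (infinitely narrow slits): I0 * cos^2(pi*d*sin(theta)/lambda)."""
    phase = math.pi * d * math.sin(theta) / wavelength
    return i0 * math.cos(phase) ** 2

def single_slit_intensity(theta, a, wavelength, i0=1.0):
    """Fraunhofer single-slit pattern: I0 * (sin(beta)/beta)^2, beta = pi*a*sin(theta)/lambda."""
    beta = math.pi * a * math.sin(theta) / wavelength
    if beta == 0.0:
        return i0  # limit of (sin(beta)/beta)^2 as beta -> 0
    return i0 * (math.sin(beta) / beta) ** 2

# Illustrative (assumed) values.
wavelength = 500e-9  # 500 nm
d = 50e-6            # slit separation for the double slit
a = 50e-6            # slit width for the single slit

# Double-slit maxima sit at sin(theta) = m * lambda / d.
double_peaks = [
    double_slit_intensity(math.asin(m * wavelength / d), d, wavelength)
    for m in range(4)
]

# Single slit: central maximum at theta = 0, then secondary maxima
# approximately at sin(theta) = (m + 1/2) * lambda / a for m = 1, 2, 3.
single_peaks = [single_slit_intensity(0.0, a, wavelength)] + [
    single_slit_intensity(math.asin((m + 0.5) * wavelength / a), a, wavelength)
    for m in range(1, 4)
]

print("double-slit peak heights:", [round(p, 4) for p in double_peaks])
print("single-slit peak heights:", [round(p, 4) for p in single_peaks])
```

In this idealized model the double-slit peaks all come out equal to $I_0$, while the single-slit peaks fall off roughly as $1/\beta^2$.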

My best guess is that textbook treatments of double-slit interference always assume a short distance from the center of the screen, so the maxima look pretty much the same, while with diffraction the maxima fall off rapidly even at those short distances. Would that be correct?

I also wonder whether maxima that stay constant forever would violate conservation of energy. Light can only be emitted for a finite period of time, so the amount of energy reaching the screen must be finite. Therefore, the light intensity on the screen must vanish at some point, right? Even for double-slit interference without diffraction. Is this reasoning correct?
