Hello.

$$ \textbf{Notation and mathematics used} $$

This denotes the real part of the complex number:

$$\Re \Big[ e^{j(a)} \Big] = \cos(a) $$

Exponents add when powers of a common base are multiplied. This lets us factor the frequency term out of the rotating phasor, leaving a complex number that carries only magnitude and phase:

$$

Ae^{j(\omega t + \phi)} = e^{j(\omega t)} \cdot A e^{j(\phi)}

$$

An alternate way to express complex numbers in polar form:

$$

A e^{j(\alpha)} = A \angle{\alpha}$$

$$ \textbf{Sinusoidal steady state: Impedance} $$

Impedance is the complex number that captures the ratio between the voltage amplitude and the current amplitude, together with the phase difference between them. In electrical engineering, phasor magnitudes are usually stated as RMS values rather than peak values. For sinusoids of the form:

$$ A_{p} \cos( \omega t + \phi ) $$

the RMS value is always:

$$ \dfrac{A_{p}}{\sqrt{2} } $$
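As a quick sanity check of that factor, here is a minimal Python sketch that computes the RMS value by sampling one full period. The function name `rms_over_period` and the sample values are assumptions made for illustration only.

```python
import math

def rms_over_period(peak, omega, phase, samples=10_000):
    """Root-mean-square of peak*cos(omega*t + phase), sampled over one period."""
    period = 2 * math.pi / omega
    total = 0.0
    for k in range(samples):
        t = k * period / samples
        total += (peak * math.cos(omega * t + phase)) ** 2
    return math.sqrt(total / samples)

# Illustrative values (assumed): 3 V peak, 50 rad/s, 0.4 rad phase shift.
numeric = rms_over_period(3.0, 50.0, 0.4)
closed_form = 3.0 / math.sqrt(2)
print(numeric, closed_form)
```

The two printed numbers should agree regardless of the frequency or phase chosen, since only the peak value enters the closed form.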

$$ \textbf{Sinusoidal steady state: The phasor transform} $$

The phasor transform is the operation that extracts, from a sinusoid, a complex number that captures:

1. The RMS magnitude of that sinusoid.

2. The phase shift of that sinusoid.

$$ A_{p} \cos( \omega t + \phi )\,\,\,\, \text{V} = \Re \Big[ A_{p} e^{j( \omega t + \phi) } \Big] = \Re \Big[ e^{j( \omega t) } \cdot \underline{A_{p}e^{j(\phi)}} \Big] \rightarrow \dfrac{A_{p}}{\sqrt{2}} e^{j(\phi )} \,\,\,\, \text{V} $$

The complex number that captures the phase and RMS magnitude of the sinusoid: ##A_{p} \cos( \omega t + \phi )\,\,\,\, \text{V} ## is:

$$ \dfrac{A_{p}}{\sqrt{2}} e^{j(\phi )} \,\,\,\, \text{V} = \dfrac{A_{p}}{\sqrt{2}} \angle{ \phi} \,\,\, \text{V} $$
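The transform and its inverse can be sketched in a few lines of Python using the standard `cmath` module. The helper names `to_rms_phasor` and `to_time_domain` and the example numbers are my own assumptions, not part of any library.

```python
import cmath
import math

def to_rms_phasor(peak, phase_rad):
    """RMS phasor of peak*cos(omega*t + phase_rad); note omega does not appear."""
    return (peak / math.sqrt(2)) * cmath.exp(1j * phase_rad)

def to_time_domain(phasor, omega, t):
    """Instantaneous value rebuilt from an RMS phasor at angular frequency omega."""
    return (math.sqrt(2) * phasor * cmath.exp(1j * omega * t)).real

# Illustrative values (assumed): 10 V peak, 30 degree phase shift.
V = to_rms_phasor(10.0, math.radians(30))
magnitude, angle = cmath.polar(V)
print(f"|V| = {magnitude:.4f} V rms, angle = {math.degrees(angle):.1f} deg")
```

Going back to the time domain needs the frequency to be supplied again, which is exactly the information the phasor dropped.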

The phasor is independent of the frequency: as shown above, the frequency term was factored out of the complex phasor.

The limitation of phasor analysis is that it requires all sources in the circuit to have the same frequency. If the sinusoids are at different frequencies, we have to use superposition. Impedance is the ratio of the complex numbers that represent the voltage and the current:

$$

Z = |Z| \angle{ \phi } \,\,\,\,\,\, \Omega= \dfrac{ \dfrac{V_{p} }{\sqrt{2} } \angle{ \phi_{1} } \,\,\,\, \text{V} }{ \dfrac{I_{p} }{ \sqrt{2} } \angle{\phi_{2} } \,\,\,\, \text{A} } = \dfrac{V_{p}}{I_{p}} \angle{ (\phi_{1} - \phi_{2}) } \,\,\,\,\,\, \Omega

$$

$$\textbf{Derivatives and integrals of phasors} $$

Differentiating a sinusoid in the time domain is equivalent to multiplying its phasor by ## j \omega ##. Integrating it in time is equivalent to multiplying its phasor by ## \dfrac{1}{ j \omega} ##.

$$ \dfrac{ d^{n}}{dt^{n}} A_{p} \cos( \omega t + \phi) \,\,\,\, \text{V} \iff { (j \omega)}^{n} \dfrac{A_{p}}{\sqrt{2}} \angle{ \phi}\,\,\,\, \text{V} $$

$$ \displaystyle \int A_{p} \cos( \omega t + \phi) \,\, \,\, \text{V} \,\,\, \text{dt} \iff \dfrac{1}{j \omega} \dfrac{A_{p}}{\sqrt{2}} \angle{ \phi} \,\,\,\, \text{V} $$

To derive the impedance for R, L, and C, we use their simple voltage and current relationships, and assume we are forcing a voltage function of the form:

## v(t) = A_{p} \cos(\omega t) ##

$$

I_{C}(t) = Cv'(t) \,\,\,\,\,\,\,\,\, I_{L}(t) = \dfrac{1}{L} \displaystyle \int_{-\infty}^{t} v(\tau) \,\,\,\,\, \text{d}\tau

$$

Using what we have gathered from the above sections:

$$

V_{c}(t) =\Re \Big[ A_{p} e^{j( \omega t ) } \Big] \rightarrow \dfrac{A_{p}}{\sqrt{2}} \angle{0} \,\,\,\,\text{V} \,\,\,\,\, I_{c}(t) = C \cdot \dfrac{d}{dt} \Re \Big[ A_{p} e^{j( \omega t ) } \Big] \rightarrow ?

$$

$$

I_{c}(t) = C \cdot \dfrac{d}{dt} \Re \Big[ A_{p} e^{j( \omega t ) } \Big] = \Re \Big[ j \omega C A_{p} e^{j( \omega t ) } \Big] \rightarrow j \dfrac{A_{p}\omega C}{\sqrt{2}} \angle{0} = e^{j(\frac{\pi}{2})} \cdot \dfrac{A_{p}\omega C}{\sqrt{2}} \angle{0} = \dfrac{A_{p}\omega C}{\sqrt{2}} \angle{\frac{\pi}{2} } \,\,\,\, \text{A}

$$

The impedance is the ratio of these two complex numbers:

$$

Z_{c} = \dfrac{\dfrac{A_{p}}{\sqrt{2}} \angle{0} \,\,\,\,\text{V} }{ \dfrac{A_{p}\omega C}{\sqrt{2}} \angle{\frac{\pi}{2} } \,\,\,\, \text{A}} =\dfrac{A_{p}}{\sqrt{2}} \cdot \dfrac{ \sqrt{2} }{ A_{p} \omega C} \angle{0 - \frac{\pi}{2} } = \dfrac{1}{ \omega C} \angle{ - \frac{\pi}{2} } = - j \dfrac{1}{\omega C} \,\,\,\,\, \Omega

$$
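To convince yourself of this result numerically, here is a small Python sketch that compares the phasor answer with a direct evaluation of ## i(t) = C v'(t) ##. The component values and the helper name `capacitor_impedance` are assumptions chosen for illustration.

```python
import cmath
import math

def capacitor_impedance(omega, C):
    """Z_C = 1/(j*omega*C) = -j/(omega*C), in ohms."""
    return 1 / (1j * omega * C)

# Illustrative values (assumed): 5 V peak, 1000 rad/s, 1 uF.
A_p, omega, C = 5.0, 1000.0, 1e-6

Z = capacitor_impedance(omega, C)
V = A_p / math.sqrt(2)   # RMS voltage phasor at angle 0
I = V / Z                # RMS current phasor

def v(t):
    return A_p * math.cos(omega * t)

# Cross-check against i(t) = C * dv/dt using a central finite difference.
t, h = 1.3e-3, 1e-7
i_numeric = C * (v(t + h) - v(t - h)) / (2 * h)
i_phasor = (math.sqrt(2) * I * cmath.exp(1j * omega * t)).real

print("Z_C (magnitude, angle in rad):", cmath.polar(Z))
print("i(t) numeric vs phasor:", i_numeric, i_phasor)
```

The printed angle of the impedance should be the negative quarter turn found above, and the two current values should agree to within the finite-difference error.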

The impedance of the inductor can be derived in the same manner. Sinusoidal steady-state impedance analysis requires voltage and current to be sinusoids. The method for deriving impedances is:

1. Force a voltage function which is a sinusoid of the form ## A_{p} \cos(\omega t + \phi) ##

2. Solve for the complex number that represents the current for the inductor.

3. Divide the voltage phasor (the complex number) by the current phasor to get the frequency-dependent impedance of the inductor.
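Carrying out these three steps for the inductor would look roughly like this, assuming a forced voltage ## A_{p} \cos(\omega t) ## with zero phase and using the integration rule from earlier:

$$

I_{L}(t) = \dfrac{1}{L} \displaystyle \int \Re \Big[ A_{p} e^{j( \omega t ) } \Big] \,\, \text{dt} \rightarrow \dfrac{1}{j \omega L} \cdot \dfrac{A_{p}}{\sqrt{2}} \angle{0} = \dfrac{A_{p}}{\sqrt{2} \, \omega L} \angle{ - \frac{\pi}{2} } \,\,\,\, \text{A}

$$

$$

Z_{L} = \dfrac{\dfrac{A_{p}}{\sqrt{2}} \angle{0} \,\,\,\,\text{V} }{ \dfrac{A_{p}}{\sqrt{2} \, \omega L} \angle{ - \frac{\pi}{2} } \,\,\,\, \text{A}} = \omega L \angle{ \frac{\pi}{2} } = j \omega L \,\,\,\,\, \Omega

$$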

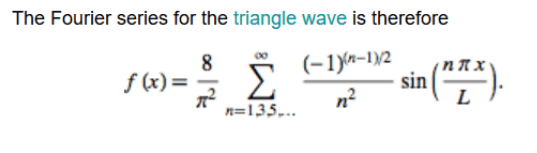

$$ \textbf{Fourier series and linear circuits: Circuits driven by non sinusoidal periodic waveforms} $$

Since the impedance and phasor transform methods apply only to pure sinusoids, the Fourier series is useful for analysing circuits driven by non-sinusoidal periodic waveforms. Such a waveform can be expressed as a Fourier series, and each term of the series is a sinusoid. We prefer to use the amplitude-phase format of the Fourier series.

1. The first step is to express the excitation ## f(t) ## as a Fourier series.

2. Transform the circuit from the time domain to the frequency (phasor) domain.

3. Find the response to DC (zero frequency or mean value of your Fourier series) and then find the responses to all the AC components.

4. Use superposition to add up all the DC and AC responses in the time domain.

$$\textbf{Amplitude phase format of the Fourier series} $$

$$

f(t) = \dfrac{ a_{0}}{2} + \displaystyle \sum_{n=1}^{\infty} \Big|\textbf{A}_{n} \Big| \cos( n \omega_{0} t - \phi_{n} )

$$

where

$$ \Big|\textbf{A}_{n} \Big| = \sqrt{a_{n}^{2} + b_{n}^{2} } \,\,\,\,\,\, \phi_{n} = \arctan{ \Big( \dfrac{b_{n}}{a_{n}} \Big)} $$
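In code, the conversion from the ## a_{n}, b_{n} ## coefficients to amplitude and phase can be sketched as below. The function name `amplitude_phase` and the coefficients are assumptions for illustration. I use `atan2` rather than a plain arctangent so the phase lands in the correct quadrant when ## a_{n} ## is negative; the two agree when ## a_{n} > 0 ##.

```python
import math

def amplitude_phase(a_n, b_n):
    """Rewrite a_n*cos(x) + b_n*sin(x) as |A_n|*cos(x - phi_n)."""
    magnitude = math.hypot(a_n, b_n)
    phi_n = math.atan2(b_n, a_n)  # quadrant-aware version of arctan(b_n / a_n)
    return magnitude, phi_n

# Illustrative coefficients (assumed): a_n = 3, b_n = 4.
mag, phi = amplitude_phase(3.0, 4.0)

# Both forms should give the same value at any argument x = n*omega_0*t.
x = 0.7
two_term_form = 3.0 * math.cos(x) + 4.0 * math.sin(x)
amplitude_phase_form = mag * math.cos(x - phi)
print(mag, phi, two_term_form, amplitude_phase_form)
```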

$$ \textbf{Easy example demonstrating use of superposition} $$

You have a single capacitor of ## 22 \, \mu \text{F} ## acted upon by three sources:

$$

v_{0}(t) = 1 \,\,\,\, \text{V} \,\,\,\,\,\,\,\,\, v_{1}(t) = 5 \cos( 10t + 20^{\circ}) \,\, \text{V} \,\,\,\,\,\,\,\,\, v_{2}(t) = 10 \cos(20t + 30^{\circ}) \,\, \text{V}

$$

We will have to use superposition for three currents:

$$

I_{0} = 0 \,\,\,\,\, I_{1} = \dfrac{ \dfrac{5}{\sqrt{2} } \angle{20^{\circ}} }{\dfrac{1}{10 \cdot 22 \mu \text{F} } \angle{-90^{\circ}} } \,\,\,\, \text{A} = 777.8 \angle{110^{\circ}} \,\,\,\,\, \mu \text{A} \,\,\,\,\,\,\, I_{2} = \dfrac{ \dfrac{10}{\sqrt{2}} \angle{30^{\circ}} }{ \dfrac{1}{ 20 \cdot 22 \mu \text{F} } \angle{-90^{\circ}} } = 3111 \angle{120^{\circ}} \,\,\,\, \mu \text{A}

$$

These are the RMS phasors for the current due to the DC source, the 10 rad/s source, and the 20 rad/s source. The capacitor impedance has an angle of ## -90^{\circ} ##, as derived earlier, so each current leads its voltage by ## 90^{\circ} ##. The phasors cannot be added together because they arise from different frequencies. In the time domain, however, they can be summed into a single current function, with each RMS magnitude multiplied by ## \sqrt{2} ## to recover the peak value:

$$

i(t) = 1100 \cos(10t + 110^{\circ}) + 4400 \cos(20t + 120^{\circ}) \,\,\,\,\,\,\, \mu \text{A}

$$
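If you want to check an example like this yourself, a minimal Python sketch follows. It assumes the phase angles are in degrees and uses ## Z_{C} = \dfrac{1}{j \omega C} ## for the capacitor. The helper names are my own. It also compares the superposed current with a direct numerical evaluation of ## C \, v'(t) ##, which does not rely on phasors at all.

```python
import cmath
import math

C = 22e-6  # farads

def v(t):
    """Total source voltage; phase angles assumed to be in degrees."""
    return (1.0
            + 5.0 * math.cos(10 * t + math.radians(20))
            + 10.0 * math.cos(20 * t + math.radians(30)))

def current_phasor(peak, phase_deg, omega):
    """RMS current phasor through the capacitor for one AC source."""
    V = cmath.rect(peak / math.sqrt(2), math.radians(phase_deg))
    Z = 1 / (1j * omega * C)
    return V / Z

I1 = current_phasor(5.0, 20.0, 10.0)
I2 = current_phasor(10.0, 30.0, 20.0)

def i(t):
    """Superposed time-domain current; the DC source contributes nothing."""
    return sum((math.sqrt(2) * I * cmath.exp(1j * w * t)).real
               for I, w in ((I1, 10.0), (I2, 20.0)))

for name, I in (("I1", I1), ("I2", I2)):
    mag, ang = cmath.polar(I)
    print(f"{name}: {mag * 1e6:.1f} uA rms at {math.degrees(ang):.1f} deg")

# Cross-check against i(t) = C * dv/dt with a central finite difference.
t, h = 0.05, 1e-6
print(i(t), C * (v(t + h) - v(t - h)) / (2 * h))
```

The last line prints the phasor-based current and the finite-difference current side by side, so any sign or scaling slip in the hand calculation shows up immediately.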

For a voltage waveform expressed as a Fourier series:

$$

v(t) = \textbf{V}_{0} + \displaystyle \sum_{n=1}^{\infty} \Big|\textbf{V}_{n} \Big| \cos(n\omega_{0} t + \phi_{1,n})

$$

The current would be:

$$

i(t) = \textbf{I}_{0} + \displaystyle \sum_{n=1}^{\infty} \Big|\textbf{I}_{n} \Big| \cos(n\omega_{0} t + \phi_{2,n})

$$

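This follows from superposition, which holds for linear circuits: each harmonic of the voltage produces a current harmonic at the same frequency, given by ## \textbf{I}_{n} = \textbf{V}_{n} / Z(n \omega_{0}) ##.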
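As a sketch of how this looks for a single capacitor, the Python below maps each voltage harmonic to a current harmonic and sums them in the time domain. Everything here is an assumption for illustration: the function names, the two-harmonic waveform, and the component values. Note that it uses peak-value phasors so the magnitudes drop straight into the cosine terms; with RMS phasors you would multiply by ## \sqrt{2} ## when going back to the time domain.

```python
import cmath
import math

def capacitor_current_harmonics(V_peaks, V_phases, omega0, C):
    """Return (I0, [(peak, phase), ...]) for the current through a capacitor.

    V_peaks[k] and V_phases[k] describe harmonic n = k + 1 of v(t).
    The DC term of v(t) is not needed: it produces no steady-state current.
    """
    I0 = 0.0
    harmonics = []
    for n, (peak, phase) in enumerate(zip(V_peaks, V_phases), start=1):
        V_n = cmath.rect(peak, phase)   # peak-value phasor of harmonic n
        Y_n = 1j * n * omega0 * C       # admittance 1/Z_C at n*omega0
        harmonics.append(cmath.polar(V_n * Y_n))
    return I0, harmonics

def evaluate(dc, harmonics, omega0, t):
    """Sum the DC term and every harmonic in the time domain."""
    return dc + sum(peak * math.cos(n * omega0 * t + phase)
                    for n, (peak, phase) in enumerate(harmonics, start=1))

# Illustrative waveform (assumed): 2 V DC plus two harmonics, 100 rad/s, 1 uF.
omega0, C = 100.0, 1e-6
V_peaks, V_phases = [1.0, 0.5], [0.0, 0.3]
I0, I_harmonics = capacitor_current_harmonics(V_peaks, V_phases, omega0, C)

for n, (peak, phase) in enumerate(I_harmonics, start=1):
    print(f"n={n}: {peak * 1e6:.1f} uA peak at {math.degrees(phase):.1f} deg")
print("i(t) at t = 10 ms:", evaluate(I0, I_harmonics, omega0, 0.01))
```

For a different circuit only the line computing `Y_n` changes: replace it with the reciprocal of that circuit's impedance evaluated at ## n \omega_{0} ##.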