JD_PM

- Homework Statement

- Is the following statement true or false? If it is true, prove it. If it is false, give a counterexample.

Let ##n \in \mathbb{N}_0## and let ##L:\mathbb{R}^{n} \rightarrow \mathbb{R}^{n}## be an injective linear mapping. Let ##A \in \mathbb{R}^{n \times n}## be an invertible matrix. Then there is a basis ##\alpha## of ##\mathbb{R}^{n}## and a basis ##\beta## of ##\mathbb{R}^{n}## such that ##A = L_{\alpha}^{\beta}##.

- Relevant Equations

- Please see the diagram below.

I know that to go from a vector with coordinates relative to a basis ##\alpha## to a vector with coordinates relative to a basis ##\beta## we can use the matrix representation of the identity transformation: ##\Big( Id \Big)_{\alpha}^{\beta}##.
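To make the notation concrete, here is a small worked example; the particular bases are my own choice for illustration and are not part of the problem. Take ##\alpha = \{(1,0), (1,1)\}## and ##\beta = \{(1,1), (0,1)\}##. The columns of ##\Big( Id \Big)_{\alpha}^{\beta}## are the ##\beta##-coordinates of the vectors of ##\alpha##:

$$(1,0) = 1\,(1,1) - 1\,(0,1), \qquad (1,1) = 1\,(1,1) + 0\,(0,1) \quad \Longrightarrow \quad \Big( Id \Big)_{\alpha}^{\beta} = \begin{pmatrix} 1 & 1 \\ -1 & 0 \end{pmatrix}$$

For instance, the vector with ##\alpha##-coordinates ##(2,3)## is ##2(1,0) + 3(1,1) = (5,3) = 5(1,1) - 2(0,1)##, and indeed

$$\begin{pmatrix} 1 & 1 \\ -1 & 0 \end{pmatrix} \begin{pmatrix} 2 \\ 3 \end{pmatrix} = \begin{pmatrix} 5 \\ -2 \end{pmatrix}$$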

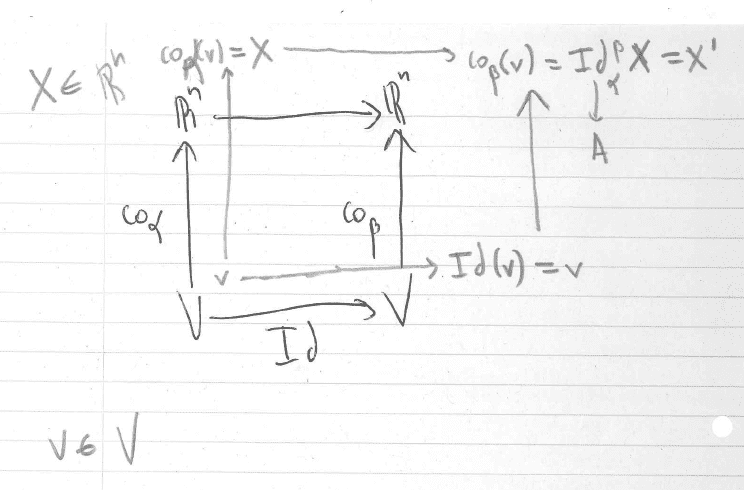

This can be represented by a diagram:

Thus note that the linear mapping we are interested in is ##A:X \rightarrow X'##, where:

$$A = \Big( Id \Big)_{\alpha}^{\beta}$$

I think the statement is true, and I suspect I need to use the fact that ##A## is invertible in the equation above to prove it. But how?
