Master1022

TL;DR: What is the reasoning behind the minimum tone spacing for coherent FSK?

Hi,

I was reading through some online notes and was wondering: when dealing with coherent FSK, what is the minimum tone spacing and why?

I know that for non-coherent FSK, we can show that the minimum spacing is ## f_1 - f_0 = \frac{1}{T} ##, where ## T ## is the symbol period. However, for coherent FSK, how can I go about finding the minimum tone spacing required (which will help me find the bandwidth)?

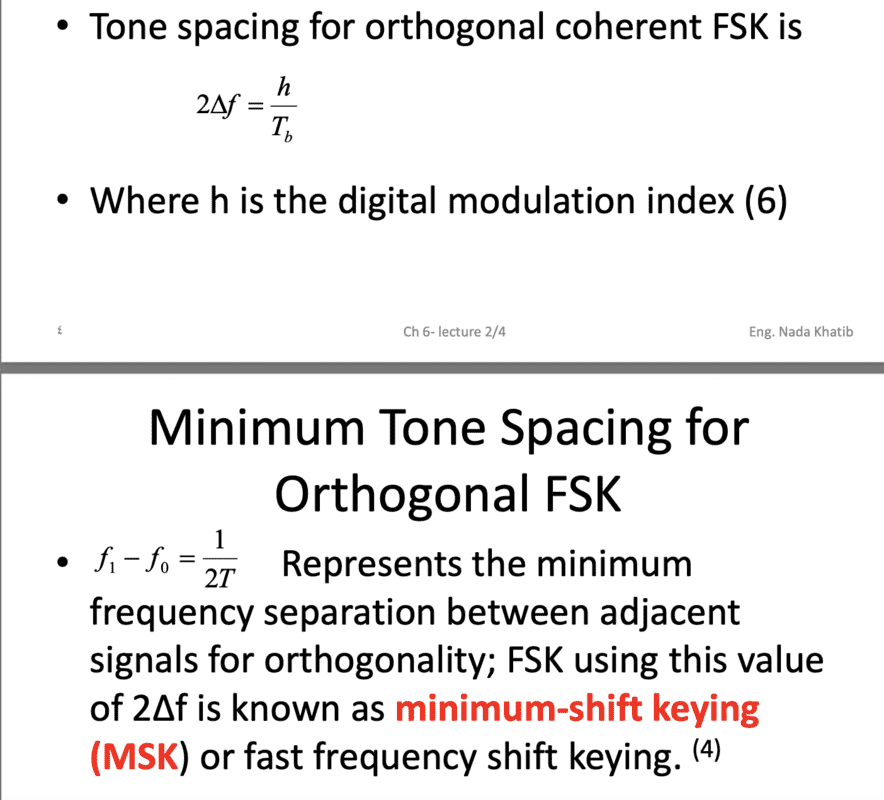

After a quick Google search, the only reference I could find on the topic was a set of online lecture notes (shown in the attached picture), which simply stated that ## f_1 - f_0 = \frac{1}{2T} ## is the minimum, without any explanation. I think I must be overlooking something quite obvious. What would be a good starting point for deriving or understanding this property?
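One possible starting point is to check numerically when the two tones are orthogonal over a symbol period, i.e. when ## \int_0^T \cos(2\pi f_1 t + \phi)\cos(2\pi f_0 t)\,dt = 0 ##. The sketch below is only a sanity check under my own assumptions, not a derivation: the helper name `tone_correlation`, the choice ## f_0 = 10/T ##, and the midpoint-rule integration are all made up for illustration. It suggests that a spacing of ## \frac{1}{2T} ## gives zero correlation only when the phases are aligned (## \phi = 0 ##, the coherent case), whereas ## \frac{1}{T} ## gives zero correlation even with a phase offset.

```python
import math

def tone_correlation(f0, f1, T, phase=0.0, n=20000):
    """Midpoint-rule estimate of the correlation between cos(2*pi*f1*t + phase)
    and cos(2*pi*f0*t) over [0, T], normalised by T/2 (the approximate tone energy)."""
    dt = T / n
    total = 0.0
    for k in range(n):
        t = (k + 0.5) * dt  # midpoint of the k-th sub-interval
        total += math.cos(2 * math.pi * f1 * t + phase) * math.cos(2 * math.pi * f0 * t)
    return total * dt / (T / 2)

T = 1.0          # symbol period (arbitrary units, assumed)
f0 = 10.0 / T    # lower tone; assumed value, chosen so (f0 + f1) * T is a multiple of 1/2

# Spacing 1/(2T), phases aligned (coherent case)
half_aligned = tone_correlation(f0, f0 + 1 / (2 * T), T)
# Spacing 1/(2T), 90 degree phase offset (what a non-coherent receiver may see)
half_offset = tone_correlation(f0, f0 + 1 / (2 * T), T, phase=math.pi / 2)
# Spacing 1/T, 90 degree phase offset
full_offset = tone_correlation(f0, f0 + 1 / T, T, phase=math.pi / 2)

print(f"spacing 1/(2T), phase 0:    {half_aligned:+.6f}")
print(f"spacing 1/(2T), phase pi/2: {half_offset:+.6f}")
print(f"spacing 1/T,    phase pi/2: {full_offset:+.6f}")
```

If that holds up, the difference would come from the ## \sin(2\pi (f_1 - f_0) T) ## term that appears when the product of cosines is expanded and integrated: it vanishes for ## (f_1 - f_0)T = \frac{1}{2} ##, but the corresponding ## 1 - \cos(2\pi (f_1 - f_0) T) ## term that shows up with a phase offset only vanishes when ## (f_1 - f_0)T ## is an integer.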

Thanks in advance for any help.
