TechGuy2016

I often build LED arrays, and I keep running into the same issue: as the LED load increases, the total current drawn does not scale linearly with the number of branches (LED strips) added to the circuit.

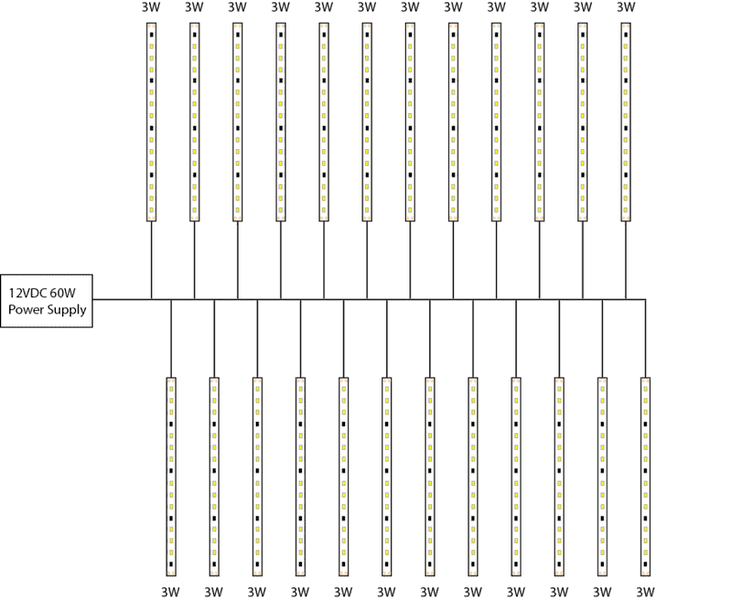

It is common to have many branches of LED strips on a 12VDC 60W power supply.

Power source: 12VDC 60W power supply

Each LED strip is rated at 3W, and measures 3W when connected on its own.

Here is the phenomenon in question:

As each LED strip (branch) is added to the circuit, the total load is not simply the power of one branch (3W) multiplied by the number of branches. The diagram shows 24 branches as an example. With a single branch connected, the measured load is in fact 3W. A simple linear calculation gives 24 branches x 3W = 72W. However, the actual 24-branch circuit draws only 3.88A, which is 46.56W (at 12V).
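To make the gap concrete, here is a minimal Python sketch of the arithmetic above. It only uses the figures from this post; the function and constant names are made up for illustration, and it assumes the supply stays at its nominal 12V under load (the voltage at the strips was not measured separately).

```python
# Figures taken from the post; names are illustrative only.
SUPPLY_VOLTAGE_V = 12.0     # nominal supply voltage (assumed to hold under load)
STRIP_POWER_W = 3.0         # power of one strip measured on its own
BRANCHES = 24               # number of strips in the example diagram
MEASURED_CURRENT_A = 3.88   # measured total current with 24 strips


def linear_estimate_w(branches: int, strip_power_w: float = STRIP_POWER_W) -> float:
    """Power expected if every strip drew its individual rating."""
    return branches * strip_power_w


def measured_power_w(current_a: float, voltage_v: float = SUPPLY_VOLTAGE_V) -> float:
    """Power implied by the measured current at the nominal voltage."""
    return current_a * voltage_v


expected_w = linear_estimate_w(BRANCHES)
actual_w = measured_power_w(MEASURED_CURRENT_A)
shortfall_w = expected_w - actual_w

print(f"linear estimate: {expected_w:.2f} W")
print(f"measured:        {actual_w:.2f} W")
print(f"shortfall:       {shortfall_w:.2f} W ({shortfall_w / expected_w:.1%} below linear)")
```

In other words, the 24-strip circuit draws roughly 25W less than the linear estimate predicts.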

I connected and measured every configuration from 1 to 58 strips and plotted the results. The resulting curve is clearly non-linear. I could place 58 of the LED strips on the circuit before reaching the 60W limit, whereas a linear calculation would put the limit at 20 strips.
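One way to look at the three data points quoted in this post is the average power per strip at each load. The sketch below is only an illustration: the names are hypothetical, and the 58-strip figure is taken as approximately 60W because that is where the supply limit was reached, not because it was read off exactly. The full 1 to 58 data set is not reproduced here.

```python
# Data points quoted in the post: number of strips -> total measured power (W).
# The 58-strip value is approximate (taken as the 60 W supply limit).
STRIP_POWER_W = 3.0
measurements_w = {1: 3.0, 24: 46.56, 58: 60.0}


def average_per_strip_w(strips: int, total_w: float) -> float:
    """Average power each strip draws when `strips` are connected."""
    return total_w / strips


def fraction_of_linear(strips: int, total_w: float) -> float:
    """Measured total as a fraction of the linear estimate (strips x 3 W)."""
    return total_w / (strips * STRIP_POWER_W)


for strips, total_w in sorted(measurements_w.items()):
    avg = average_per_strip_w(strips, total_w)
    frac = fraction_of_linear(strips, total_w)
    print(f"{strips:2d} strips: {total_w:6.2f} W total, "
          f"{avg:.2f} W per strip, {frac:.0%} of linear estimate")
```

The average power per strip falls steadily as strips are added, which is another way of stating the non-linearity being asked about.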

Does anyone know the theorem or equation that explains this non-linear loading behavior?

I would greatly appreciate anyone's feedback on this. Thank you!
