- #1

D_Laban

Hello to everyone!

First I would like to go through one simple example:

We have two bodies: one is a wave source, the other is a wave receiver.

In this example they are positioned like this:

(source) O=====> (receiver) O=====>

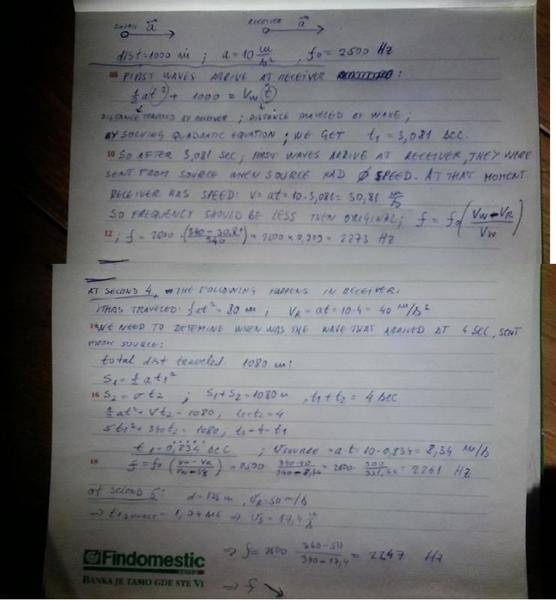

The distance between them is always 1000 m. Let's say the waves are sound waves with a speed of 340 m/s and a frequency of 2500 Hz. Both bodies have the same acceleration, for example 10 m/s², and they move in the same direction with the same speed at every moment. Both start from rest, and their motion starts at the same moment the first waves are released from the source...

So I calculated how the frequency received by the receiver changes as time passes…

Please check this and say whether you agree or not…

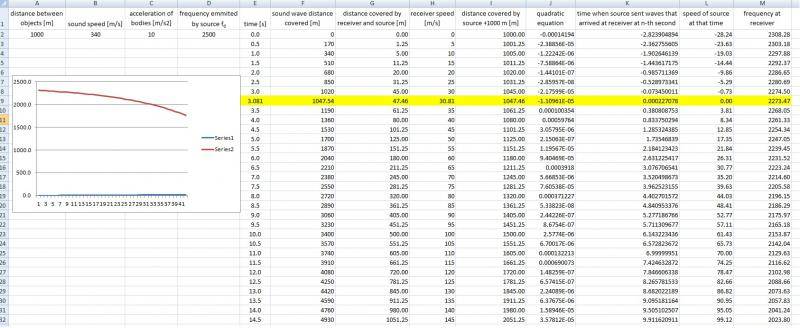

Then I made an Excel sheet that shows what happens as they continue...

What I wanted to point out is that the distance between source and receiver never changes, but the frequency picked up by the receiver keeps changing compared to the original frequency. In this case it decreases as time passes.
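For anyone who wants to check the numbers without the spreadsheet, here is a minimal Python sketch of the same setup. It is not necessarily the calculation from the image or the Excel sheet. It assumes still air, motion along one line, and the receiver 1000 m ahead of the source (as in the diagram). The names `arrival_time` and `received_frequency` are made up for this illustration.

```python
import math

C = 340.0    # speed of sound in still air, m/s
F0 = 2500.0  # emitted frequency, Hz
A = 10.0     # common acceleration of both bodies, m/s^2
L = 1000.0   # constant separation, m (assumption: receiver is ahead of the source)


def arrival_time(t_emit):
    """Time at which a wavefront emitted at t_emit reaches the receiver.

    Solves x_source(t_emit) + C*(t - t_emit) = L + 0.5*A*t**2 for the
    earliest t. Returns None if the wavefront never reaches the receiver.
    """
    closing = C - A * t_emit           # sound speed relative to the source
    disc = closing ** 2 - 2.0 * A * L  # discriminant of the quadratic in t
    if closing <= 0 or disc < 0:
        return None                    # receiver outruns the wavefront
    return (C - math.sqrt(disc)) / A


def received_frequency(t_emit):
    """Frequency heard by the receiver for waves emitted at t_emit."""
    t_arr = arrival_time(t_emit)
    if t_arr is None:
        return None
    v_source = A * t_emit    # source speed when the wave is emitted
    v_receiver = A * t_arr   # receiver speed when the wave arrives
    return F0 * (C - v_receiver) / (C - v_source)


for t_emit in (0.0, 5.0, 10.0, 15.0):
    t_arr = arrival_time(t_emit)
    f_rec = received_frequency(t_emit)
    print(f"emitted at {t_emit:5.1f} s, received at {t_arr:6.2f} s, f = {f_rec:7.1f} Hz")
```

With these assumptions the received frequency comes out below 2500 Hz and keeps dropping for later emission times. Waves emitted after roughly 19.9 s never reach the receiver, because by then it is moving away too fast for the sound to catch up.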

So the speed and direction of the source at the time it emits the waves, and the speed and direction of the receiver at the time the waves reach it, should both be considered when we analyse the Doppler shift noticed by the receiver. (1)
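Written as a formula, principle (1) could look like the sketch below. It assumes still air, motion along the line joining the two bodies, both speeds below the speed of sound, and velocities counted positive in the direction from source to receiver. The symbols are my own notation: $c$ is the sound speed, $f_0$ the emitted frequency, $t_e$ the emission time, $t_r$ the reception time, $v_s$ and $v_r$ the source and receiver velocities, $a$ the common acceleration and $L$ the separation.

$$
f_r = f_0 \, \frac{c - v_r(t_r)}{c - v_s(t_e)}
$$

For the example above, $v_s(t_e) = a t_e$ and $v_r(t_r) = a t_r$, and the wave emitted at $t_e$ arrives when

$$
\tfrac{1}{2} a t_e^2 + c\,(t_r - t_e) = L + \tfrac{1}{2} a t_r^2
\quad\Longrightarrow\quad
t_r = \frac{c - \sqrt{(c - a t_e)^2 - 2 a L}}{a},
$$

which gives

$$
f_r = f_0 \sqrt{1 - \frac{2 a L}{(c - a t_e)^2}} .
$$

Since $t_r > t_e$, the receiver is always faster at reception than the source was at emission, so $f_r < f_0$ even though the distance never changes.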

Does this make sense to you, and if it does not, what could be the mistakes in the logic?

Anyway, I chose this example because it could easily be checked with a simple experiment: two falling bodies, with one hanging from the other. Only in that case, the change in sound speed with altitude, air temperature and pressure should also be considered.

Another example would be two pendulums on long ropes that oscillate in phase. In that case the sound frequency at the receiver would oscillate.

So if we apply this principle (1) to light, we could say:

If we observe two distant galaxies, one 100 million light years away and the other 10 million light years away, and we see that the light arriving from the more distant one is more redshifted (its light waves have lower frequencies) than the light from the closer one, then one of the conclusions we could make is:

The galaxy that is 100 million light years away was, 100 million years ago, moving away from where we are now faster than the galaxy that is 10 million light years away was moving, 10 million years ago, away from where we are now.

This could actually mean that the universe is slowing down its expansion instead of accelerating.
