SUMMARY

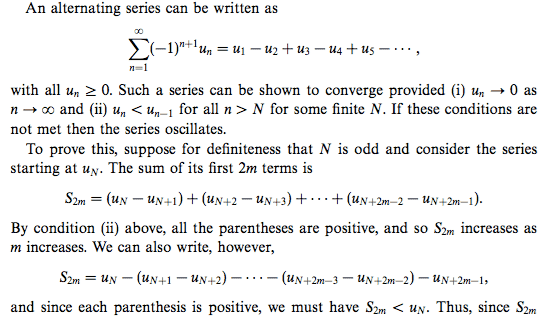

The discussion centers on the convergence of an alternating series whose n-th term is denoted ##u_n##. The argument under discussion is that the series converges if ##S_{2m}##, the sum of the first 2m terms, approaches zero as ##m## approaches infinity. The proof is critiqued for lacking clarity, particularly because it does not address the requirement that the terms alternate in sign and decrease in magnitude. The Sandwich Theorem is suggested as a way to show that the partial sums are bounded between two values that converge to the same limit.
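The sandwich idea can be checked numerically. The sketch below is only an illustration, not the proof from the thread: it assumes the alternating harmonic series ##u_n = (-1)^{n+1}/n## as a stand-in example (chosen here, not taken from the discussion), whose sum is known to be ##\ln 2##. The helper `partial_sums` and the variable names are hypothetical. It shows the even partial sums increasing, the odd partial sums decreasing, and the limit trapped between them.

```python
import math

def partial_sums(term, count):
    """Return [S_1, ..., S_count] for the series with n-th term term(n)."""
    sums, total = [], 0.0
    for n in range(1, count + 1):
        total += term(n)
        sums.append(total)
    return sums

def u(n):
    # Assumed example: alternating harmonic term.
    # Signs alternate and the magnitude 1/n decreases to zero.
    return (-1) ** (n + 1) / n

S = partial_sums(u, 2000)
even = S[1::2]  # S_2, S_4, ..., S_2000
odd = S[0::2]   # S_1, S_3, ..., S_1999
limit = math.log(2)

# Even partial sums increase, odd partial sums decrease.
even_increasing = all(a < b for a, b in zip(even, even[1:]))
odd_decreasing = all(a > b for a, b in zip(odd, odd[1:]))

# Each pair (S_{2m}, S_{2m-1}) brackets the limit.
bracketed = all(e < limit < o for e, o in zip(even, odd))

# The width of the last bracket is |u_2000| = 1/2000, which shrinks to zero.
gap = odd[-1] - even[-1]

print(even_increasing, odd_decreasing, bracketed, gap)
```

Because the gap between consecutive odd and even partial sums equals the magnitude of a single term, the bracket closes only when the terms tend to zero, which is why the decreasing-magnitude condition matters in the argument.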

PREREQUISITES

- Understanding of alternating series

- Familiarity with limits and convergence criteria

- Knowledge of the Sandwich Theorem

- Basic concepts of sequences and series

NEXT STEPS

- Study the properties of alternating series and their convergence criteria

- Learn about the Sandwich Theorem and its applications in analysis

- Explore examples of sequences that illustrate convergence and divergence

- Review proofs related to convergence of series in mathematical analysis

USEFUL FOR

Mathematics students, educators, and anyone studying series convergence, particularly those interested in advanced calculus and real analysis.