Heidrun

- 6

- 0

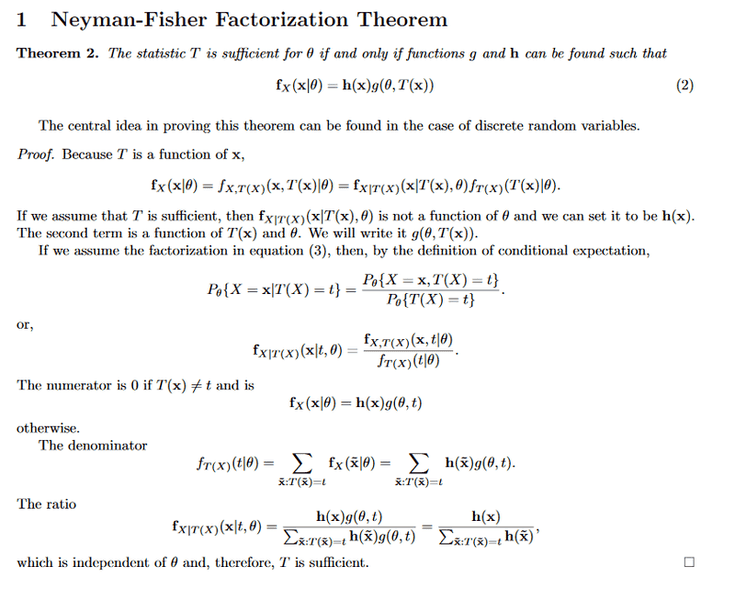

Hello. I have a question about one step in the proof of the factorization theorem.

1. Homework Statement

Here is the theorem (it begins at the end of page 1). It is not from my course, but my notes have almost the same proof: http://math.arizona.edu/~jwatkins/sufficiency.pdf

Screenshot of it:

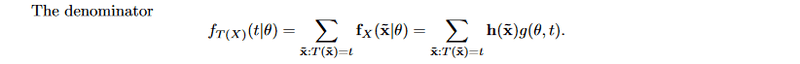

Could someone please explain to me how to justify the first equality of that step?

I think a possible justification is that the sample is a sufficient statistic, but that feels like it is not enough, or not the right justification.
