saad87


I've been designing a simple ohmmeter that can measure resistances in the range 0.001 to 100 ohms. I am aiming for an accuracy of 3%.

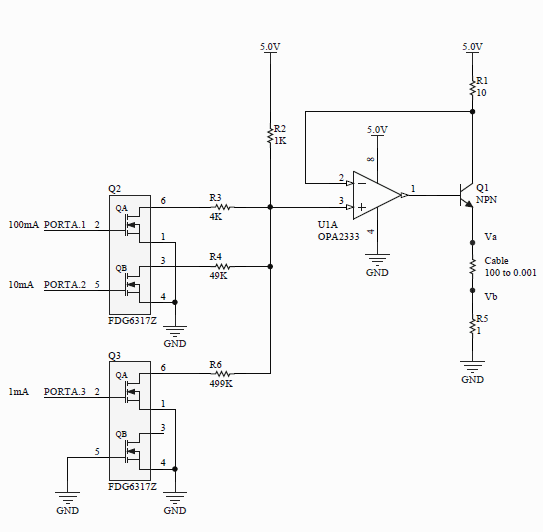

Here's the circuit (so far):

The circuit is basically a programmable current source that can source 100, 10 or 1 mA. At junctions Va and Vb (right-hand side of the circuit), I aim to connect a monolithic instrumentation amplifier with a gain of 500. The issue is that I cannot connect Vb directly to ground, because even a rail-to-rail amplifier has a limit on how close its inputs can get to ground. I believe a good in-amp typically requires its inputs to be at least 100 mV above ground. The in-amp drives a 12-bit ADC.
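A rough resolution check for this signal chain, which may help when splitting the 3% budget, is sketched below in Python. It assumes an ideal gain of exactly 500 and an ideal 12-bit ADC with a 5 V full-scale reference (`V_REF` is an assumption; the post does not give the reference). Which test current goes with which resistance is also not stated, so the (R, I) pairs in the loop are hypothetical.

```python
from typing import Optional

# Assumptions (not from the post): ideal in-amp with gain exactly 500,
# ideal 12-bit ADC with a 5 V full-scale reference, no offset or noise.
GAIN = 500
ADC_BITS = 12
V_REF = 5.0  # assumed ADC full-scale voltage


def adc_code(r_dut: float, i_test: float) -> Optional[int]:
    """Ideal ADC code for a DUT of r_dut ohms carrying i_test amps.

    Returns None if the amplified voltage would exceed the ADC's full scale.
    """
    v_amp = r_dut * i_test * GAIN
    code = int(v_amp / V_REF * 2**ADC_BITS)  # truncate like an ideal ADC
    return code if code <= 2**ADC_BITS - 1 else None


def lsb_error(r_dut: float, i_test: float) -> Optional[float]:
    """One LSB of quantization as a fraction of the reading."""
    code = adc_code(r_dut, i_test)
    if code is None or code == 0:
        return None
    return 1 / code


# Hypothetical (resistance, current) pairings, for illustration only.
for r, i in [(0.001, 0.1), (0.01, 0.1), (1.0, 0.001), (100.0, 0.001)]:
    err = lsb_error(r, i)
    status = "saturates or reads zero" if err is None else f"{err:.2%} per LSB"
    print(f"R = {r:g} ohm at I = {i * 1e3:g} mA: code {adc_code(r, i)}, {status}")
```

Under these assumptions, the lowest resistance at 100 mA produces only about 50 mV at the ADC, so quantization alone consumes a large share of a 3% budget. A large resistance at gain 500 overranges the ADC.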

To solve this, I inserted R5. However, R5 would need a different value for each current range. I'd like to drop approximately 100 mV across R5 to be safe. Assume R5 is 100 ohms. At 1 mA, R5 drops 100 mV. However, at 100 mA it would drop 10 V(!), which is obviously not possible.
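The R5 arithmetic above can be written as a small Python sketch. It also shows the value a switched R5 would need on each range to keep roughly 100 mV at Vb. The 100 mV target and the 100 ohm starting value come from the post; the function names are illustrative only.

```python
# Ohm's law for R5: the drop across a fixed resistor scales with the test
# current, so holding ~100 mV needs a different R5 on each current range.
CURRENTS_A = (1e-3, 10e-3, 100e-3)  # the three programmable test currents
R5_OHMS = 100.0                     # value assumed in the post
TARGET_DROP_V = 0.1                 # desired lift of Vb above ground


def r5_drop(i_test: float, r5: float = R5_OHMS) -> float:
    """Voltage across a fixed R5 at test current i_test."""
    return i_test * r5


def r5_for_target(i_test: float, target: float = TARGET_DROP_V) -> float:
    """R5 value that would drop `target` volts at test current i_test."""
    return target / i_test


for i in CURRENTS_A:
    print(f"{i * 1e3:g} mA: fixed {R5_OHMS:g} ohm drops {r5_drop(i):g} V; "
          f"{r5_for_target(i):g} ohm would give {TARGET_DROP_V * 1e3:g} mV")
```

A switched R5 would therefore need roughly 100, 10 and 1 ohm for the 1, 10 and 100 mA ranges.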

I have two solutions to this issue. I can either switch R5 with additional MOSFETs or, perhaps more simply, remove R5 and replace it with a Schottky diode. I think a Schottky would be better because it has a lower forward voltage drop. Its Vf would vary with current, but only slightly. As long as it stays above 100 mV, I think we should be OK.
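How much the Schottky's Vf moves across the three ranges can be estimated from the ideal (Shockley) diode equation, Vf = n·Vt·ln(I/Is + 1). The Python sketch below assumes a hypothetical saturation current of 1 µA and an ideality factor of 1.05 at 300 K. These values are not taken from any particular part, and the model ignores series resistance, which on a real small-signal Schottky adds noticeably to the drop at 100 mA. The datasheet Vf-vs-If curve of the chosen diode is the better source.

```python
import math

# Ideal-diode (Shockley) estimate of a Schottky's forward drop.
# I_S and N are hypothetical placeholders, not values for any specific part.
I_S = 1e-6              # assumed saturation current, A
N = 1.05                # assumed ideality factor
K_B = 1.380649e-23      # Boltzmann constant, J/K
Q_E = 1.602176634e-19   # elementary charge, C


def thermal_voltage(temp_k: float = 300.0) -> float:
    """kT/q in volts."""
    return K_B * temp_k / Q_E


def schottky_vf(i_f: float, temp_k: float = 300.0) -> float:
    """Forward voltage from N*Vt*ln(I/I_S + 1); series resistance ignored."""
    return N * thermal_voltage(temp_k) * math.log(i_f / I_S + 1.0)


for i in (1e-3, 10e-3, 100e-3):
    print(f"{i * 1e3:g} mA: Vf ~ {schottky_vf(i) * 1e3:.0f} mV")
```

With these assumed values, Vf rises by about N·Vt·ln(10), roughly 60 mV, per decade of current, so it stays above 100 mV on all three ranges but shifts by over 100 mV between the 1 mA and 100 mA settings. Temperature also moves it.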

Which solution should I go for? Will I be introducing any errors with the Schottky?
