Elquery

- TL;DR

- Is there a difference between 'average' slope and 'typical' slope, and can both be calculated from the same limited information?

Hi all.

I am tasked with quantifying hiking trail grades (percent grade) and their 'typical' grade.

I am not sure if 'typical' is really a math term, but my first inclination was that it equates to 'average.' However, I now think there are two ways to approach this with different results.

Is it accurate to say that the average slope of a function is the rise over run of the straight line drawn between its start and end points?

In the case of trail grades, I would take the absolute value of each slope so that negative slopes count as positive, because even a 'negative' slope is a grade as far as a hiker is concerned.

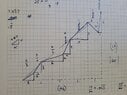

It is therefore possible to calculate this 'average slope' by dividing the total elevation change (the sum of the absolute values of all segment rises) by the horizontal trail distance (the total run). This is mathematically the same as taking the slope of each line segment and computing a weighted average, weighted by the run of each segment. (I am modeling the trail as a collection of straight line segments with no curves; see the attached photo.) So you don't need to know the run of each segment; you only need the total elevation change and the total horizontal run.
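To make that equivalence concrete, here is a minimal Python sketch. The segment values and function names are made up purely for illustration, and it assumes every segment has a nonzero run.

```python
# Hypothetical trail: each segment is (run, rise) in the same units (e.g. metres).
# These numbers are invented for illustration only.
segments = [(100.0, 10.0), (200.0, -30.0), (50.0, 0.0), (150.0, 15.0)]

def grade_from_totals(segments):
    """Total absolute elevation change divided by total horizontal run."""
    total_abs_rise = sum(abs(rise) for _, rise in segments)
    total_run = sum(run for run, _ in segments)
    return total_abs_rise / total_run

def run_weighted_grade(segments):
    """Average of per-segment |slope|, weighted by each segment's run."""
    total_run = sum(run for run, _ in segments)
    weighted_sum = sum((abs(rise) / run) * run for run, rise in segments)
    return weighted_sum / total_run

totals_grade = grade_from_totals(segments)
weighted_grade = run_weighted_grade(segments)
print(totals_grade, weighted_grade)
```

The two results agree up to floating-point rounding because each run cancels inside the weighted sum, leaving the sum of absolute rises over the total run.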

The issue is that in this application, it seems 'typical' grade should really account for the distance of the actual trail, in other words the length of the sloping line and not just its horizontal component (run). You cannot simply divide the total rise by the sloping-line distance, though; as far as I can tell, that doesn't give a slope of any kind. I know I can calculate the slope of each line segment and then take a weighted average, weighted by the linear distance (the hypotenuse of each segment) rather than by the run. But this requires knowing the length of each segment and punching in a long string of numbers.
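For reference, the segment-by-segment calculation I have in mind looks roughly like the sketch below. The function name and the two-segment example are hypothetical, and it again assumes every segment has a nonzero run.

```python
import math

def distance_weighted_grade(segments):
    """Average of per-segment |slope|, weighted by each segment's sloped length.

    `segments` is a list of (run, rise) pairs in the same units.
    Assumes run > 0 for every segment.
    """
    lengths = [math.hypot(run, rise) for run, rise in segments]  # hypotenuses
    slopes = [abs(rise) / run for run, rise in segments]
    return sum(s * d for s, d in zip(slopes, lengths)) / sum(lengths)

# Invented example: one flat segment followed by one 100% grade segment.
example = [(100.0, 0.0), (100.0, 100.0)]
typical = distance_weighted_grade(example)
print(typical)
```

In this example the run-weighted average is 0.5, while the distance-weighted value comes out higher, because the steep segment covers more trail distance than the flat one.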

With the second definition of 'typical' slope, is there any way to calculate this value from only:

- total elevation change

- total linear distance (sum of all segments' hypotenuses)

- total horizontal distance (run)

Or is the only way to weight these slopes by the known length of each individual segment?

Thanks for any thoughts!