Discussion Overview

The discussion centers on the relationship between entropy in information theory and thermodynamics, specifically questioning whether principles from information theory challenge the second law of thermodynamics. Participants explore the implications of entropy as it relates to random variables and the laws of physics, considering both classical and quantum perspectives.

Discussion Character

- Debate/contested

- Conceptual clarification

- Technical explanation

Main Points Raised

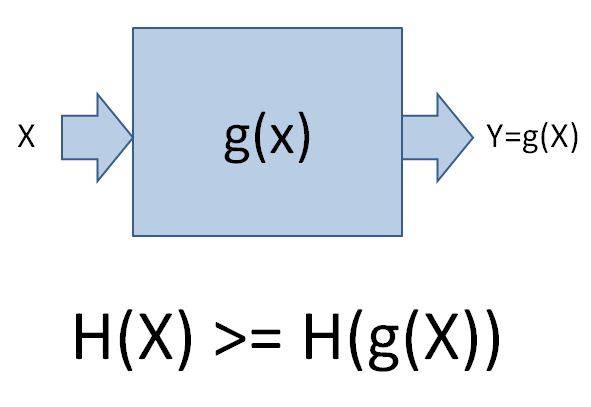

- One participant asserts that the entropy of any function of a random variable X is less than or equal to the entropy of X, i.e. H(f(X)) ≤ H(X), and asks whether this contradicts the second law of thermodynamics.

- Another participant argues that entropy typically increases unless energy is added to a system, suggesting a misunderstanding of the initial claim.

- A different viewpoint holds that the information-theoretic inequality applies only when X is the sole argument of the function, and proposes that a second argument representing randomness is needed for a complete picture.

- A further post elaborates on the role of quantum randomness: without measurements, neither entropy nor information changes, which the poster attributes to conservation of information in quantum mechanics.

- A later reply acknowledges a previous error, clarifying that the entropy inequality in information theory also holds for functions of several arguments, and goes on to distinguish physical entropy from information entropy.

- Entropy in physics is described as the logarithm of the number of possible microscopic states that share the same macroscopic description, which suggests that physical entropy can increase independently of information-theoretic considerations.
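
The information-theoretic inequality discussed in the points above can be checked numerically. The following is a minimal sketch, not taken from the thread: the helper names `entropy` and `pushforward`, the uniform distribution on four outcomes, and the choice of functions are all illustrative assumptions. It shows that H(f(X)) ≤ H(X) for a function of X alone, and that a function of X together with an independent random input Y can exceed H(X) while still staying below the joint entropy H(X, Y).

```python
import math
from collections import Counter


def entropy(probs):
    """Shannon entropy in bits of a distribution given as probabilities."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def pushforward(dist, f):
    """Distribution of f(outcome), given dist as a dict outcome -> probability."""
    out = Counter()
    for outcome, p in dist.items():
        out[f(outcome)] += p  # outcomes mapped to the same value merge
    return dict(out)


# Assumed example: X uniform on {0, 1, 2, 3}, so H(X) = 2 bits.
X = {x: 0.25 for x in range(4)}
h_x = entropy(X.values())

# A deterministic function of X alone (parity) cannot gain entropy.
h_fx = entropy(pushforward(X, lambda x: x % 2).values())

# Add a second, independent argument Y (a fair coin) as a source of randomness.
XY = {(x, y): 0.125 for x in range(4) for y in range(2)}
h_xy = entropy(XY.values())

# g(X, Y) = X + Y is a function of the pair, so it is bounded by H(X, Y).
h_g = entropy(pushforward(XY, lambda xy: xy[0] + xy[1]).values())

print(f"H(X)       = {h_x:.2f} bits")
print(f"H(f(X))    = {h_fx:.2f} bits")
print(f"H(X, Y)    = {h_xy:.2f} bits")
print(f"H(g(X, Y)) = {h_g:.2f} bits")
```

In this toy case the output of g carries more entropy than X alone, but only because the extra randomness in Y is counted on the input side; the inequality itself is never violated.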

Areas of Agreement / Disagreement

Participants express differing views on the relationship between entropy in information theory and thermodynamics, with no consensus reached on whether the principles conflict or how they should be reconciled.

Contextual Notes

Participants note that the definitions of entropy in physics and information theory differ, which may contribute to the confusion and complexity of the discussion. There are also unresolved questions regarding the nature of randomness and its impact on entropy.
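
For reference, the two definitions being contrasted can be written side by side. These are the standard textbook forms rather than formulas quoted from the thread; the symbols p(x), Ω, and k_B are the usual conventions and are assumed here.

$$
H(X) = -\sum_{x} p(x)\,\log_2 p(x)
\qquad\text{(Shannon entropy of a random variable } X\text{)}
$$

$$
S = k_B \ln \Omega
\qquad\text{(Boltzmann entropy, } \Omega = \text{number of microstates for a given macrostate)}
$$

The first is a property of a probability distribution, while the second counts the microscopic configurations compatible with a coarse-grained description, which is one way to see why the two notions need not behave identically.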