anandr

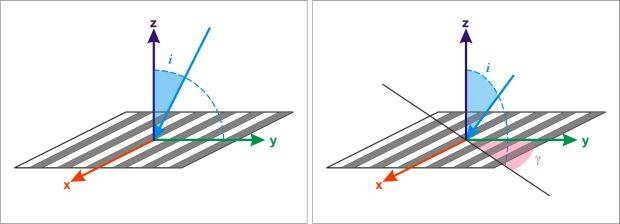

Diffraction by a 1D grating is covered in many physics textbooks. They usually treat the simple case where the incident light arrives along the normal to the grating. Sometimes they also present the slightly more complicated case where the incident light is tilted in the plane perpendicular to the stripes (left case in the image below).

Does anybody have an idea how to treat diffraction by a grating when the incident light comes from an arbitrary direction (gamma ≠ 0 and i ≠ 0, right case in the image below)?

I tried to split the incident wave vector k into two components: kx and kyz.

The first part (kx) should diffract the same way as at normal incidence: the diffracted maxima are placed symmetrically around k, across the grating stripes, with diffraction angles Θ defined by d*sin(Θ) = n*λ.

The second part (kyz) seems to be the case shown in the left part of the image: these maxima are also placed symmetrically around k, but this time the incidence angle i is taken into account, so d*(sin(Θ) + sin(i)) = n*λ. After that, I simply added the resulting diffracted wave vectors for each n to get the final diffracted wave vectors. Is this approach correct?
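To make the two in-plane formulas concrete, here is a minimal Python sketch that lists the propagating orders n and their angles Θ from d*(sin(Θ) + sin(i)) = n*λ, with normal incidence as the special case i = 0. The function name `diffraction_angles` and its parameters are only illustrative, and the sign convention is simply the one written above. It covers only the in-plane case, not the arbitrary-direction case I am asking about.

```python
import math

def diffraction_angles(d, wavelength, incidence_deg=0.0):
    """Return {n: theta_deg} for every order n that satisfies
    d*(sin(theta) + sin(i)) = n*wavelength with |sin(theta)| <= 1.

    d and wavelength must be in the same length unit (assumption).
    """
    sin_i = math.sin(math.radians(incidence_deg))
    # |sin(theta) + sin(i)| <= 2, so |n| cannot exceed 2*d/wavelength.
    n_max = int(2 * d / wavelength) + 1
    angles = {}
    for n in range(-n_max, n_max + 1):
        sin_theta = n * wavelength / d - sin_i
        if abs(sin_theta) <= 1.0:  # skip evanescent (non-propagating) orders
            angles[n] = math.degrees(math.asin(sin_theta))
    return angles

if __name__ == "__main__":
    # Example values chosen only for illustration: d = 1.0 um, lambda = 0.6 um.
    for i_deg in (0.0, 30.0):
        print(f"i = {i_deg} deg:")
        for n, theta in sorted(diffraction_angles(1.0, 0.6, i_deg).items()):
            print(f"  n = {n:+d}  theta = {theta:.2f} deg")
```

With i = 0 the orders come out symmetric about the grating normal; with a nonzero i the set of allowed n shifts to one side.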
