Dinoduck94


I've recently been interested in how much power the Earth intercepts from the Sun. The answer, unsurprisingly, is an astronomical amount: roughly 1.7 × 10^17 W, i.e. well over a hundred petawatts.

However, the maths that got me that answer got me thinking... you can use the same method to work out how much power the Earth intercepts from any star in the universe.

The maths is very straightforward, and you only need three pieces of information about the star (its radius, its surface temperature and its distance):

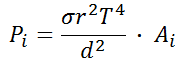

Where 'P(i)' is the intercepted power measured in watts; 'σ' is the Stefan-Boltzmann constant; 'r' is the radius of the star; 'T' is the surface temperature of the star (in kelvin); 'd' is the distance from the star (in the same units as 'r'); and 'A(i)' is the intercepting area of the Earth (in square metres).
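For anyone who can't see the image: from the definitions above, the formula is presumably the standard blackbody one, where the star's total output is spread over a sphere of radius d and the Earth catches the share that falls on its intercepting area. This is my reconstruction from the variable list, not a transcription of the image:

$$
P_i \;=\; \underbrace{\sigma T^4 \cdot 4\pi r^2}_{\text{total output of the star}} \times \frac{A_i}{4\pi d^2} \;=\; \sigma T^4 \left(\frac{r}{d}\right)^2 A_i
$$

For the Earth, $A_i$ would be the cross-sectional disc $\pi R_\oplus^2$ (with $R_\oplus$ the Earth's radius, a symbol I've introduced here), not the full surface area.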

What I was shocked to discover is that if the Earth were in a magical sweet spot where it sat with a direct view of all the stars within 20 light-years (except LP 145-131, as I couldn't confirm its radius), a staggering 21,346,503 W of radiant heat would be intercepted by the Earth.
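As a rough sanity check of the method, here is a minimal Python sketch of the calculation, assuming the blackbody form described by the variables above. The function name and the Sun and Earth values plugged in are my own assumptions (nominal textbook figures), not numbers taken from the spreadsheet.

```python
import math

SIGMA = 5.670374419e-8    # Stefan-Boltzmann constant, W m^-2 K^-4
EARTH_RADIUS_M = 6.371e6  # mean Earth radius in metres (assumed value)


def intercepted_power(star_radius_m, surface_temp_k, distance_m, area_m2):
    """Power in watts intercepted by area_m2 at distance_m from a blackbody star."""
    # Power radiated per square metre of the star's surface
    flux_at_surface = SIGMA * surface_temp_k ** 4
    # Inverse-square dilution from the star's surface out to the observer
    flux_at_distance = flux_at_surface * (star_radius_m / distance_m) ** 2
    return flux_at_distance * area_m2


# The Earth intercepts light over its cross-sectional disc, not its whole surface
earth_cross_section_m2 = math.pi * EARTH_RADIUS_M ** 2

# Sanity check with nominal solar values (assumed): radius, temperature, 1 au
sun_power_w = intercepted_power(6.957e8, 5772.0, 1.495978707e11,
                                earth_cross_section_m2)
print(f"Sun: {sun_power_w:.3e} W")
```

With those inputs the Sun comes out at around 1.7 × 10^17 W, which is the familiar figure for sunlight reaching the Earth. The same function can be called once per star and the results summed.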

Now obviously, in the grand scale of things, this is a minute fraction of what Sol provides (with us being so close). In fact, it is equivalent to placing one 0.167 W heater on every square kilometre of the Earth's intercepting (cross-sectional) area, which wouldn't exactly keep us warm if the Sun disappeared! But it's still an awe-inspiring amount of energy to receive from minute little freckles of light in the night sky.
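The 0.167 W figure appears to come from dividing the total by the Earth's cross-sectional area in square kilometres. A quick sketch of that arithmetic, assuming a mean Earth radius of 6,371 km (my assumption, as are the variable names):

```python
import math

total_power_w = 21_346_503  # total from stars within 20 light-years (from the post)
earth_radius_km = 6371.0    # assumed mean Earth radius

# Disc that the Earth presents to incoming starlight
cross_section_km2 = math.pi * earth_radius_km ** 2
# Full surface of the sphere, four times the disc
surface_area_km2 = 4 * cross_section_km2

per_km2_cross_section = total_power_w / cross_section_km2
per_km2_surface = total_power_w / surface_area_km2

print(f"{per_km2_cross_section:.3f} W per km^2 of cross-section")
print(f"{per_km2_surface:.3f} W per km^2 of surface")
```

Spread over the whole surface of the globe rather than the intercepting disc, the figure would be a quarter of that, about 0.042 W per square kilometre.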

The reason I am posting this thread, though, is to learn from those who know more than I do: is there anything that I have missed with this?

Am I correct in saying that radiated infrared energy travels at the speed of light, and does not dissipate until it hits something, i.e. the Earth?

I have attached an Excel sheet showing the findings.

From doing this I also discovered that VY Canis Majoris (one of the largest known stars), sitting 3,900 light-years from Earth, bombards us with 803,083.71 W on its own!
