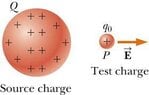

Why is the test charge always positive?


Discussion Overview

The discussion centers on why physics, and electrostatics in particular, conventionally uses a positive test charge. Participants consider both the conceptual and the educational reasons behind the convention.

Discussion Character

- Conceptual clarification, Debate/contested, Meta-discussion

Main Points Raised

- One participant questions why test charges are always considered positive.

- Another participant asserts that test charges can have arbitrary values, including negative ones.

- Some participants suggest that the use of positive test charges may be for consistency in teaching, as it simplifies comparisons in electrostatic scenarios.

- A participant mentions that with a negative test charge the force on the charge points opposite to the electric field vector, which could make the direction of the field harder to understand.

- There is a suggestion that introducing negative test charges might be better suited for later stages of learning to avoid confusion.
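
The points above about sign and direction can be checked numerically. The following is a minimal sketch, not something posted in the thread: it assumes a single point source charge in 2D, and the function names (`field_at`, `force_on`, `field_from_force`) and the numeric values are made up for illustration. It shows that the field recovered as E = F/q is the same for a positive and a negative test charge, while the force on the negative charge points the opposite way.

```python
import math

K = 8.9875517923e9  # Coulomb constant in N·m²/C²

def field_at(source_q, source_pos, point):
    """Electric field (Ex, Ey) of a point charge at `point` (illustrative helper)."""
    dx = point[0] - source_pos[0]
    dy = point[1] - source_pos[1]
    r = math.hypot(dx, dy)
    magnitude = K * source_q / r**2
    # Scale the unit vector from source to point by the field magnitude.
    return (magnitude * dx / r, magnitude * dy / r)

def force_on(test_q, field):
    """Force on a test charge placed in `field`: F = qE."""
    return (test_q * field[0], test_q * field[1])

def field_from_force(test_q, force):
    """Recover the field from a measured force: E = F/q."""
    return (force[0] / test_q, force[1] / test_q)

# Assumed example values: a +2 µC source at the origin, observed at (3 m, 4 m).
E = field_at(2e-6, (0.0, 0.0), (3.0, 4.0))

F_pos = force_on(+1e-9, E)   # force on a positive test charge
F_neg = force_on(-1e-9, E)   # force on a negative test charge

E_from_pos = field_from_force(+1e-9, F_pos)
E_from_neg = field_from_force(-1e-9, F_neg)

print("E from positive test charge:", E_from_pos)
print("E from negative test charge:", E_from_neg)
print("Force on negative charge is antiparallel to E:",
      F_neg[0] * E[0] + F_neg[1] * E[1] < 0)
```

Both test charges give the same field, which is consistent with the view that the positive sign is a convention: it only spares the learner the extra sign flip between the force and the field direction.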

Areas of Agreement / Disagreement

Participants express differing views on the necessity of using positive test charges, with some supporting the practice for educational simplicity while others argue for the validity of using negative test charges. The discussion remains unresolved regarding the best approach.

Contextual Notes

Participants note that the choice of test charge may depend on the context of teaching and the specific goals of the discussion, highlighting the importance of clarity in educational settings.
