BoltE


- TL;DR

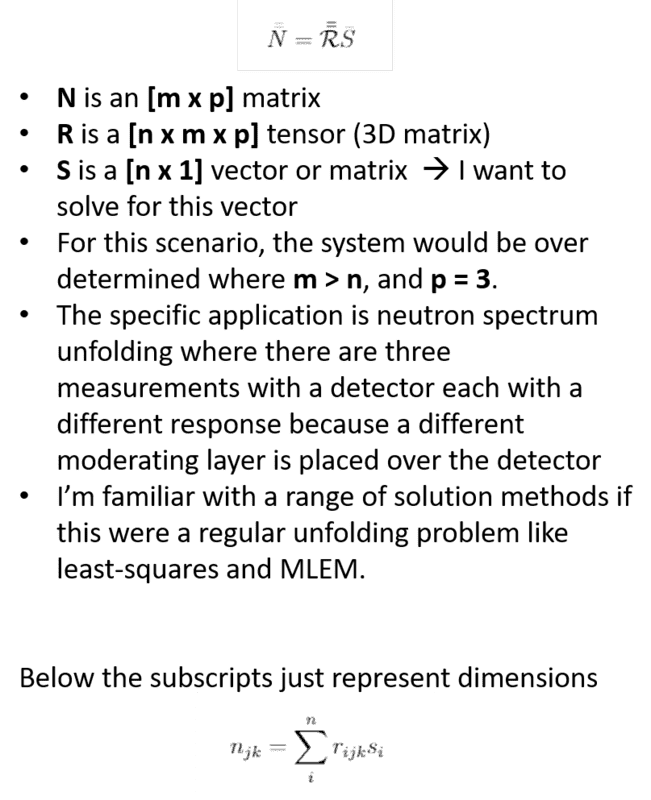

- I have three systems of equations of the form Ax = b, with three different b-vectors and three different A-matrices, all sharing the same x-vector (A1x = b1, A2x = b2, A3x = b3). The goal is to solve for x. I can also write this as a tensor contraction: b_ij = sum_k (A_ijk x_k), where I would want to invert A to solve for x. I'm familiar with regular linear systems where A is a 2D matrix and I could use a least-squares approach, MLEM, etc.

I would like to solve a set of linear systems Ax = b that share one unknown, where A is an n x m x p (3D) tensor, x is a vector (m x 1), and b is a matrix (n x p). I haven't been able to find a clear walk-through of inverting a tensor the way one would invert a regular matrix to solve a system of linear equations (or of an iterative technique like MLEM for this case).
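To make the setup concrete, here is a minimal NumPy sketch of one way to pose the problem as an ordinary 2D system: stack the p matrices on top of each other and solve for the shared x by least squares. This is only an illustration under stated assumptions, not necessarily the method the question is after. It assumes A is stored with shape (n, m, p) so that `A[:, :, l]` is the l-th matrix, x has length m, and b has shape (n, p). The function name `solve_shared_x` and the random test data are hypothetical.

```python
import numpy as np


def solve_shared_x(A, b):
    """Least-squares solve of A[:, :, l] @ x = b[:, l] for every l, with one shared x.

    Assumed shapes: A is (n, m, p), b is (n, p). Returns x with shape (m,).
    """
    n, m, p = A.shape
    # Bring the system index to the front, then stack the p blocks
    # vertically into a single (p*n, m) matrix.
    A_stacked = np.moveaxis(A, 2, 0).reshape(p * n, m)
    # Stack the right-hand sides in the same block order: (p, n) -> (p*n,).
    b_stacked = b.T.reshape(p * n)
    x, *_ = np.linalg.lstsq(A_stacked, b_stacked, rcond=None)
    return x


# Synthetic, noise-free example (illustrative data only).
rng = np.random.default_rng(0)
n, m, p = 4, 3, 3
A = rng.normal(size=(n, m, p))
x_true = rng.normal(size=m)
# b[i, l] = sum_k A[i, k, l] * x_true[k]
b = np.einsum("imp,m->ip", A, x_true)

x_hat = solve_shared_x(A, b)
```

With consistent, noise-free data and a full-column-rank stacked matrix, `x_hat` should match `x_true` up to floating-point error. An iterative solver could be applied to the same stacked matrix in place of `np.linalg.lstsq`.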

Attached is a typed-up version of the equations, written with different variable names: b = N, A = R, and x = S:
