ag123

hi all,

This isn't purely a physics question, but it is closely related. I'm trying to build an infrared thermometer, or some other means of remote temperature measurement, for temperatures from 0 deg C to around 400 deg C.

I started by looking up low-cost silicon photodiodes / phototransistors, e.g. on eBay.

I noted that most of them have a peak sensitivity around 950 nm, with a spectral response like the one shown here:

https://en.wikipedia.org/wiki/Photodiode

Then I read up on black body radiation and Planck's law

https://en.wikipedia.org/wiki/Black-body_radiation

https://en.wikipedia.org/wiki/Planck's_law

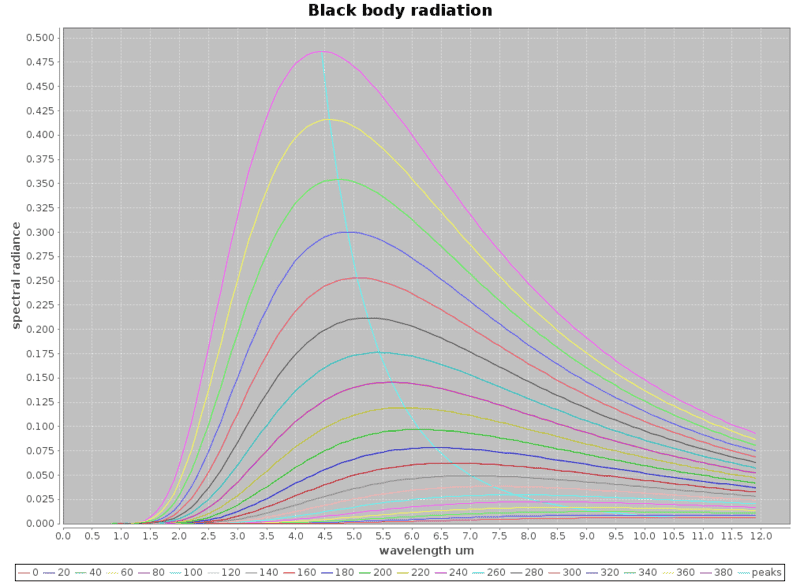

To get a feel for what it would take, I wrote a little program using Planck's equation and plotted the black body spectral radiance against wavelength for various temperatures. The curves run from 0 deg C at the bottom up to around 380 deg C, in steps of 20 deg C. The curves come out rather nicely.

Now I realize I've got a problem: silicon photodiodes only respond to wavelengths well below 1.5 um (the silicon cutoff is roughly 1.1 um), which puts them outside the black body radiation range I'm trying to measure / detect.

I started researching other means and ran into thermopile detectors, e.g. the ts-118:
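For anyone who wants to reproduce curves like these, here is a minimal sketch of such a calculation. It is not the original program: the function names, the 0.5 to 30 um wavelength grid, and the printed subset of temperatures are assumptions made for illustration. It evaluates Planck's law per unit wavelength and reports where each curve peaks.

```python
import math

H = 6.62607015e-34   # Planck constant, J s
C = 2.99792458e8     # speed of light, m/s
KB = 1.380649e-23    # Boltzmann constant, J/K

def planck_radiance(wavelength_m, temp_k):
    """Black body spectral radiance per unit wavelength, W / (m^2 sr m)."""
    x = H * C / (wavelength_m * KB * temp_k)
    return (2.0 * H * C**2 / wavelength_m**5) / math.expm1(x)

def curve(temp_c, wavelengths_um):
    """Radiance values for one temperature (deg C) over a wavelength grid (um)."""
    temp_k = temp_c + 273.15
    return [planck_radiance(w * 1e-6, temp_k) for w in wavelengths_um]

# Assumed grid: 0.5 um to 30 um in 0.1 um steps.
wavelengths_um = [0.5 + 0.1 * i for i in range(296)]
# 0, 20, ..., 380 deg C, as described in the post.
temps_c = list(range(0, 400, 20))
curves = {t: curve(t, wavelengths_um) for t in temps_c}

# Report the grid wavelength at which a few of the curves peak.
for t in (0, 100, 200, 300, 380):
    values = curves[t]
    peak_um = wavelengths_um[values.index(max(values))]
    print(f"{t:3d} deg C: peak near {peak_um:.1f} um")
```

The `curves` dictionary can be handed to any plotting library; each entry is one curve over `wavelengths_um`.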

http://lusosat.org/hardware/200612916515751780.pdf

However, based on the spectral response, these thermopile detectors have an infrared filter that restricts the radiation reaching the sensor to the 8-15 um range. That is where the emission from objects around room or body temperature peaks, so the filter is useful for that particular purpose.

The trouble is, if I use the thermopile sensor to measure higher temperatures, e.g. 300 deg C, the emission peak shifts to shorter wavelengths, around 5 um, so a significant part of the radiated energy falls outside the filter band and can't be used for the measurement. While I'd think the thermopile detector would still be sensitive enough to detect the higher temperatures, I'm limited to its sensitivity in the 8-15 um range.
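To put rough numbers on the two detector bands discussed so far, the sketch below integrates Planck's law over a band and divides by the total black body radiance (sigma T^4 / pi). The function names, the trapezoid step count, and the 0.4 to 1.1 um band used to stand in for a silicon photodiode are assumptions for illustration, not data sheet values.

```python
import math

H = 6.62607015e-34        # Planck constant, J s
C = 2.99792458e8          # speed of light, m/s
KB = 1.380649e-23         # Boltzmann constant, J/K
SIGMA = 5.670374419e-8    # Stefan-Boltzmann constant, W / (m^2 K^4)
WIEN_B_UM_K = 2897.771955 # Wien displacement constant, um K

def planck_radiance(wavelength_m, temp_k):
    """Black body spectral radiance per unit wavelength, W / (m^2 sr m)."""
    x = H * C / (wavelength_m * KB * temp_k)
    if x > 700.0:          # exp would overflow; radiance is negligible here
        return 0.0
    return (2.0 * H * C**2 / wavelength_m**5) / math.expm1(x)

def band_radiance(lo_um, hi_um, temp_k, steps=2000):
    """Trapezoid integral of the radiance over [lo_um, hi_um], W / (m^2 sr)."""
    lo, hi = lo_um * 1e-6, hi_um * 1e-6
    dw = (hi - lo) / steps
    total = 0.5 * (planck_radiance(lo, temp_k) + planck_radiance(hi, temp_k))
    for i in range(1, steps):
        total += planck_radiance(lo + i * dw, temp_k)
    return total * dw

def band_fraction(lo_um, hi_um, temp_c):
    """Fraction of the total black body radiance that falls inside the band."""
    temp_k = temp_c + 273.15
    return band_radiance(lo_um, hi_um, temp_k) / (SIGMA * temp_k**4 / math.pi)

for temp_c in (20, 100, 200, 300, 400):
    temp_k = temp_c + 273.15
    print(f"{temp_c:3d} deg C: Wien peak {WIEN_B_UM_K / temp_k:5.2f} um, "
          f"8-15 um fraction {band_fraction(8.0, 15.0, temp_c):.3f}, "
          f"0.4-1.1 um fraction {band_fraction(0.4, 1.1, temp_c):.2e}")
```

One thing this makes visible: the fraction of energy inside 8-15 um falls as the target gets hotter, but the absolute in-band radiance still rises with temperature, so a filtered thermopile does not go blind at 300 deg C. It just uses a smaller share of what is emitted.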

I'm now looking at a different approach, similar in nature to thermopile sensors, which use the Seebeck effect (https://en.wikipedia.org/wiki/Thermoelectric_effect#Seebeck_effect) to literally measure a temperature. I'd like to use some kind of temperature sensor, e.g. a thermistor (https://en.wikipedia.org/wiki/Thermistor), to measure the temperature at the probe. The temperature rise at the probe would be caused primarily by absorbing the black body radiation, and my guess is that the probe would reach an equilibrium with a stable temperature after a while.

The problem is: how do I determine the temperature of the black body remotely from the temperature I am able to measure at the thermistor/probe?

Are there other (better) means of measuring temperature remotely (e.g. infrared)?

Wikipedia has an article on pyrometers, but it is somewhat short on the physics / equations:
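One way to frame this question is a lumped energy balance, sketched below. This is an assumed model, not an established design: the probe absorbs net radiation sigma * K * (T_target^4 - T_probe^4) and loses heat to its surroundings at G * (T_probe - T_ambient). Here K (emissivity times absorbing area times view factor, in m^2) and G (thermal conductance, in W/K) are hypothetical parameters that would have to be found by calibration, and the numeric values used are placeholders.

```python
SIGMA = 5.670374419e-8  # Stefan-Boltzmann constant, W / (m^2 K^4)

def probe_temp_k(target_k, ambient_k, coupling_m2, conductance_w_per_k):
    """Equilibrium probe temperature for a given target, found by bisection."""
    def net_power(probe_k):
        gain = SIGMA * coupling_m2 * (target_k**4 - probe_k**4)
        loss = conductance_w_per_k * (probe_k - ambient_k)
        return gain - loss

    # net_power decreases with probe_k and changes sign between these bounds.
    lo, hi = min(target_k, ambient_k), max(target_k, ambient_k)
    for _ in range(100):
        mid = 0.5 * (lo + hi)
        if net_power(mid) > 0.0:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def target_temp_k(probe_k, ambient_k, coupling_m2, conductance_w_per_k):
    """Invert the same balance: target temperature from the probe reading."""
    fourth = probe_k**4 + conductance_w_per_k * (probe_k - ambient_k) / (
        SIGMA * coupling_m2)
    return fourth ** 0.25

# Placeholder values, chosen only to exercise the functions.
K_M2 = 1.0e-6        # hypothetical emissivity * area * view factor
G_W_PER_K = 1.0e-4   # hypothetical conductance from probe to ambient
AMBIENT_K = 293.15

probe = probe_temp_k(573.15, AMBIENT_K, K_M2, G_W_PER_K)
recovered = target_temp_k(probe, AMBIENT_K, K_M2, G_W_PER_K)
print(f"probe settles near {probe:.2f} K, recovered target {recovered:.2f} K")
```

In this model the probe reading alone is not enough; the ambient (or probe body) temperature has to be measured as well, and K and G would come from pointing the probe at targets of known temperature. The view factor inside K also changes with distance and target size, which this sketch ignores.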

https://en.wikipedia.org/wiki/Pyrometer

thanks in advance
