Discussion Overview

The discussion revolves around the use of convolution to represent the output of linear time-invariant (LTI) systems. Participants explore the mathematical and conceptual foundations of convolution, its implications for system behavior, and the relationship between input and output signals in various contexts.

Discussion Character

- Exploratory

- Technical explanation

- Conceptual clarification

- Debate/contested

Main Points Raised

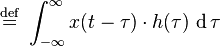

- Some participants ask why the output of an LTI system depends on previous inputs as well as the current one; the suggested answer is that convolution captures this dependence through an integral that weights every past input by the system's impulse response.

- Others point out that, although convolution is the standard representation of LTI systems, some systems can be described without it, which prompts a discussion of how general the convolution description of LTI behavior really is.

- One participant proposes writing the output as a sum of weighted contributions from past inputs; letting the spacing between those input samples shrink to zero turns the sum into an integral, which is the convolution integral.

- Another viewpoint emphasizes linearity: because convolution in time corresponds to multiplication in frequency, each frequency component passes through the system independently, with no cross-modulation between components.

- Some participants offer intuitive explanations of convolution, describing the output as the accumulated sum of the system's responses to the individual scaled, shifted impulses that make up the input, a picture that is easy to draw graphically.

- A participant introduces the idea of local linearity: each past input must contribute to the output in proportion to its value, and if these contributions depended nonlinearly on the input, the overall system would no longer be linear.

- Participants also mention specific forms of LTI systems, such as linear constant-coefficient differential equations, and how these relate to convolution through the impulse response and the principle of superposition.
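The impulse-superposition picture described in the points above can be checked numerically. The following Python sketch, using made-up input and impulse-response values, builds a discrete-time output in two ways: by evaluating the convolution sum directly, and by adding one scaled, delayed copy of the impulse response for each input sample. The function names and data are hypothetical, chosen only for illustration.

```python
def convolve(x, h):
    """Direct convolution sum: y[n] = sum_k x[k] * h[n - k]."""
    n_out = len(x) + len(h) - 1
    y = [0.0] * n_out
    for n in range(n_out):
        for k in range(len(x)):
            if 0 <= n - k < len(h):
                y[n] += x[k] * h[n - k]
    return y


def superpose_impulse_responses(x, h):
    """Build the output by adding one copy of h per input sample,
    scaled by x[k] and delayed by k samples (linearity + time invariance)."""
    n_out = len(x) + len(h) - 1
    y = [0.0] * n_out
    for k, xk in enumerate(x):          # each input sample is a scaled, shifted impulse...
        for m, hm in enumerate(h):      # ...whose response is a scaled, shifted copy of h
            y[k + m] += xk * hm
    return y


x = [1.0, 2.0, 0.0, -1.0]   # hypothetical input samples
h = [1.0, 0.5, 0.25]        # hypothetical impulse response (a decaying "memory")

print(convolve(x, h))                     # [1.0, 2.5, 1.25, -0.5, -0.5, -0.25]
print(superpose_impulse_responses(x, h))  # same list
```

Both functions give the same result, which is what linearity together with time invariance guarantees: the convolution sum is just a compact way of writing "add up the responses to every past input sample."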
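The move from a sum over past inputs to an integral can be written out as a standard Riemann-sum argument. In this sketch, \delta_\Delta is a narrow rectangular pulse of width \Delta and unit area, and h_\Delta is the system's response to that pulse; both are notation introduced here for illustration, not taken from the thread.

```latex
\begin{aligned}
\delta_\Delta(t) &= \begin{cases} 1/\Delta, & 0 \le t < \Delta \\ 0, & \text{otherwise} \end{cases} \\
x(t) &\approx \sum_{k=-\infty}^{\infty} x(k\Delta)\,\delta_\Delta(t - k\Delta)\,\Delta \\
y(t) &\approx \sum_{k=-\infty}^{\infty} x(k\Delta)\,h_\Delta(t - k\Delta)\,\Delta \\
y(t) &= \lim_{\Delta \to 0} \sum_{k=-\infty}^{\infty} x(k\Delta)\,h_\Delta(t - k\Delta)\,\Delta
      = \int_{-\infty}^{\infty} x(\tau)\,h(t - \tau)\,d\tau
\end{aligned}
```

The second line approximates the input by a staircase of pulses; linearity and time invariance turn each pulse into a scaled, shifted copy of h_\Delta, giving the third line; and as \Delta shrinks, k\Delta becomes the continuous variable \tau. For a causal system, h(t - \tau) = 0 whenever \tau > t, so only the input up to the present instant contributes, which is the dependence on past inputs raised in the first point.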
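The no-cross-modulation point can be illustrated with a small experiment. The Python sketch below assumes a hypothetical 32-sample signal made of two tones at DFT bins 3 and 5 and an arbitrary three-tap impulse response. It compares an LTI system (circular convolution, used so that the discrete Fourier transform relations hold exactly) with a squaring system, chosen here only as a simple nonlinear counterexample, and reports which frequency bins carry energy.

```python
import cmath
import math

N = 32  # number of samples (hypothetical)


def dft(signal):
    """Naive O(N^2) discrete Fourier transform."""
    n = len(signal)
    return [sum(signal[t] * cmath.exp(-2j * math.pi * f * t / n) for t in range(n))
            for f in range(n)]


def circular_convolve(x, h):
    """Circular (periodic) convolution, so that DFT(y) = DFT(x) * DFT(h) exactly."""
    n = len(x)
    return [sum(x[k] * h[(m - k) % n] for k in range(n)) for m in range(n)]


def occupied_bins(signal, tol=1e-9):
    """Bins 0..N/2 with non-negligible DFT magnitude (a real signal's
    spectrum is conjugate-symmetric, so the upper half adds no information)."""
    spectrum = dft(signal)
    return [f for f in range(len(signal) // 2 + 1) if abs(spectrum[f]) > tol]


# Input: two tones at bins 3 and 5.
x = [math.cos(2 * math.pi * 3 * t / N) + math.cos(2 * math.pi * 5 * t / N)
     for t in range(N)]
# Hypothetical FIR impulse response: a short smoothing filter, zero-padded to N.
h = [0.5, 0.3, 0.2] + [0.0] * (N - 3)

y_lti = circular_convolve(x, h)   # LTI system: convolution with h
y_square = [v * v for v in x]     # nonlinear system: squaring

print(occupied_bins(x))         # [3, 5]
print(occupied_bins(y_lti))     # [3, 5]
print(occupied_bins(y_square))  # [0, 2, 6, 8, 10]
```

Convolution can only rescale and phase-shift the tones already present in the input, so the LTI output occupies the same bins. Squaring, by contrast, creates the sum and difference frequencies 2 and 8 plus the harmonics 6 and 10 and a DC term, which is exactly the cross-modulation that a linear system avoids.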
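The link between a differential-equation description of an LTI system and convolution has a direct discrete-time analogue. The sketch below uses a first-order difference equation as a stand-in for a first-order differential equation; the coefficient `a`, the input values, and the function names are all hypothetical. It obtains the impulse response by feeding a unit impulse through the recursion, then checks that convolving the input with that impulse response reproduces the recursion's output.

```python
def run_recursion(x, a):
    """First-order recursive (difference-equation) system:
    y[n] = a * y[n-1] + (1 - a) * x[n], starting at rest (y[-1] = 0)."""
    y, prev = [], 0.0
    for xn in x:
        prev = a * prev + (1 - a) * xn
        y.append(prev)
    return y


def impulse_response(a, length):
    """Response of the same recursion to a unit impulse: h[n] = (1 - a) * a**n."""
    return run_recursion([1.0] + [0.0] * (length - 1), a)


def convolve_causal(x, h):
    """Causal convolution y[n] = sum_{k=0}^{n} h[k] * x[n - k], for n < len(x)."""
    return [sum(h[k] * x[n - k] for k in range(n + 1)) for n in range(len(x))]


a = 0.8                                         # hypothetical pole (0 < a < 1 gives a decaying h)
x = [1.0, 0.0, 2.0, -1.0, 0.5, 0.0, 0.0, 3.0]   # hypothetical input
h = impulse_response(a, len(x))

y_recursive = run_recursion(x, a)
y_convolved = convolve_causal(x, h)

# Compare with a tolerance, since the two methods round differently.
print(all(abs(p - q) < 1e-12 for p, q in zip(y_recursive, y_convolved)))  # True
```

Because the system starts at rest, the recursion and the convolution sum compute the same output; the recursion is simply a cheaper way of evaluating the convolution for this particular impulse response.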

Areas of Agreement / Disagreement

Participants express various viewpoints on the necessity and implications of convolution in LTI systems. There is no consensus on whether convolution is the only or best representation for all LTI systems, and multiple competing views remain regarding the interpretation and application of convolution.

Contextual Notes

Some discussions highlight the limitations of mathematical proofs in providing physical insight, and there are unresolved questions about the relationship between different domains of input and output signals.

Who May Find This Useful

This discussion may be of interest to students and professionals in electrical engineering, signal processing, and systems theory, particularly those seeking to understand the conceptual underpinnings of convolution in LTI systems.