LMHmedchem


Hello,

This is a bit more complex than my previous post, but I don't think it qualifies for university-level difficulty. Please move this to the appropriate forum if my assessment is not correct.

I have 4 vectors in three-dimensional space, each with its tail at the same point (point B).

I have the angle between each vector and vBC (in radians).

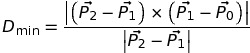

Each vector pair forming one of the angles above lies on a Euclidean plane. These planes intersect along vBC. I can calculate the plane intersection angles as follows.

For the intersection angle ∠ ABC ∩ DBC (angle of intersection between planes ABC and DBC, sorry I don't know the proper symbolic nomenclature for plane intersection angles).

1. take the vector rejection of vBA against vBC

2. take the vector rejection of vBD against vBC

3. calculate the angle between the two rejection vectors

I need to change the angle of intersection between two planes without changing any of the angles between vectors ∠ABC, ∠DBC, or ∠EBC. Changing ∠ ABC ∩ DBC would involve essentially rotating the tip of vBA in a circle around the axis of vBC while the tail of vBA stays at point B, but I don't know how to do that.

The methods I have seem for rotating a vector in 3D involve rotating the vector about one axis.

From stackexchange, a 3D rotation around the Z-axis would be,

To use such a method, I would have to translate my current coordinates so that vBC is aligned with one axis, make the 3D vector rotation, and then translate back. That seems overly involved. It seems as if I should be able to use something like the above using the coordinates of vBC.

Any suggestions would be appreciated,

LMHmedchem

This is a bit more complex than my previous post, but I don't think it qualifies for university level difficulty. Please move this to the appropriate forum if my assessment is not correct.

I have 4 vectors in three space where each vector has its tail at the same point (point B).

Code:

    id     x       y       z
    B      0.000   0.000   0.000
    vBA    0.183  -0.479   0.358
    vBD    1.970   2.395  -0.524
    vBE   -3.798  -1.214   0.168
    vBC   -5.847   4.571  -1.137

I have the angle between each vector and vBC (in radians).

Code:

    ∠ ABC = 2.466
    ∠ DBC = 1.570
    ∠ EBC = 0.989

Each vector pair forming one of the angles above lies on a Euclidean plane. These planes intersect along vBC. I can calculate the plane intersection angles as follows.

For the intersection angle ∠ ABC ∩ DBC (the angle of intersection between planes ABC and DBC; sorry, I don't know the proper notation for plane intersection angles):

1. take the vector rejection of vBA against vBC

2. take the vector rejection of vBD against vBC

3. calculate the angle between the two rejection vectors
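The three steps above can be sketched in plain Python. This is only a minimal sketch, assuming point B is at the origin as in the coordinates I posted; the helper names (`rejection`, `plane_angle`, and so on) are made up for illustration and are not from any library.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def norm(a):
    return math.sqrt(dot(a, a))

def angle_between(a, b):
    """Angle in radians between vectors a and b."""
    c = dot(a, b) / (norm(a) * norm(b))
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    return math.acos(max(-1.0, min(1.0, c)))

def rejection(a, b):
    """Part of a perpendicular to b: a minus its projection onto b."""
    s = dot(a, b) / dot(b, b)
    return tuple(x - s * y for x, y in zip(a, b))

def plane_angle(v1, v2, axis):
    """Angle between the plane of (v1, axis) and the plane of (v2, axis)."""
    return angle_between(rejection(v1, axis), rejection(v2, axis))

# Coordinates from the post (tails at B = origin).
vBA = (0.183, -0.479, 0.358)
vBD = (1.970, 2.395, -0.524)
vBE = (-3.798, -1.214, 0.168)
vBC = (-5.847, 4.571, -1.137)

print("ABC ∩ DBC:", plane_angle(vBA, vBD, vBC))
print("ABC ∩ EBC:", plane_angle(vBA, vBE, vBC))
print("DBC ∩ EBC:", plane_angle(vBD, vBE, vBC))
```

Because the coordinates are rounded to three decimals, the printed values may differ from my figures in the last digit.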

Code:

    ∠ ABC ∩ DBC = 2.484
    ∠ ABC ∩ EBC = 0.674
    ∠ DBC ∩ EBC = 3.125

I need to change the angle of intersection between two planes without changing any of the angles between vectors ∠ABC, ∠DBC, or ∠EBC. Changing ∠ ABC ∩ DBC would involve essentially rotating the tip of vBA in a circle around the axis of vBC while the tail of vBA stays at point B, but I don't know how to do that.

The methods I have seen for rotating a vector in 3D involve rotating the vector about one of the coordinate axes.

From Stack Exchange, a 3D rotation around the Z-axis would be:

$$
\begin{bmatrix}
\cos\theta & -\sin\theta & 0 \\
\sin\theta & \cos\theta & 0 \\
0 & 0 & 1
\end{bmatrix}
\begin{bmatrix} x \\ y \\ z \end{bmatrix}
=
\begin{bmatrix}
x\cos\theta - y\sin\theta \\
x\sin\theta + y\cos\theta \\
z
\end{bmatrix}
=
\begin{bmatrix} x' \\ y' \\ z' \end{bmatrix}
$$

To use such a method, I would have to rotate my coordinate frame so that vBC is aligned with one axis, apply the 3D rotation, and then rotate back. That seems overly involved. It seems as if I should be able to use something like the above directly with the coordinates of vBC.
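One candidate that seems to fit is Rodrigues' rotation formula, which rotates a vector about an arbitrary axis through the origin. To be clear, this is a different technique from the Z-axis matrix quoted from Stack Exchange, and I have not confirmed it is the best fit here. A minimal sketch, assuming B is at the origin (as in my data) and using a made-up function name `rotate_about_axis`:

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])

def angle_between(a, b):
    """Angle in radians between vectors a and b."""
    c = dot(a, b) / math.sqrt(dot(a, a) * dot(b, b))
    return math.acos(max(-1.0, min(1.0, c)))

def rotate_about_axis(v, axis, theta):
    """Rotate v by theta radians about the line through the origin along axis.

    Rodrigues' formula: v' = v cos(t) + (k x v) sin(t) + k (k . v)(1 - cos(t)),
    where k is the unit vector along axis (right-hand rule for the sign of t).
    """
    n = math.sqrt(dot(axis, axis))
    k = tuple(x / n for x in axis)          # unit axis
    c, s = math.cos(theta), math.sin(theta)
    kxv = cross(k, v)
    kv = dot(k, v)
    return tuple(v[i] * c + kxv[i] * s + k[i] * kv * (1.0 - c) for i in range(3))

# Coordinates from the post (tails at B = origin).
vBA = (0.183, -0.479, 0.358)
vBC = (-5.847, 4.571, -1.137)

# Swing the tip of vBA by 0.5 rad around vBC (0.5 is an arbitrary example value).
new_vBA = rotate_about_axis(vBA, vBC, 0.5)

print("angle to vBC before:", angle_between(vBA, vBC))
print("angle to vBC after: ", angle_between(new_vBA, vBC))
```

If this is right, the angle ∠ABC and the length of vBA stay the same while the plane angle shifts by the rotation amount. Whether the plane angle grows or shrinks depends on the sign of the rotation, so the direction would need checking against the rejection-based calculation.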

Any suggestions would be appreciated,

LMHmedchem
