Homework Help Overview

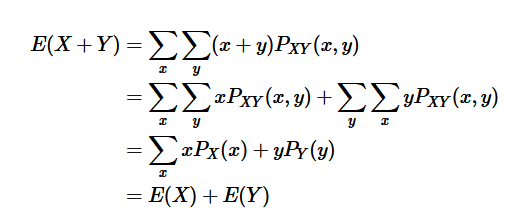

The discussion concerns proving linearity of expectation for discrete random variables: that the expected value of a linear combination of random variables equals the same linear combination of their individual expected values. The original poster is unsure how one step of the proof, which involves summations over a joint distribution, leads to the next.

Discussion Character

- Conceptual clarification, Mathematical reasoning, Assumption checking

Approaches and Questions Raised

- Participants explore the relationship between joint and marginal distributions, with some attempting to clarify the mathematical steps involved in the proof. Questions arise regarding the application of the law of total probability and the structure of the summations.
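
The step that connects joint and marginal distributions can be sketched as follows. The thread summary does not give the exact statement being proved, so the two-variable form, the constants $a$ and $b$, and the notation $p_{X,Y}$, $p_X$, $p_Y$ for the joint and marginal probability mass functions are assumptions made for illustration. The sums are assumed to converge absolutely, so they can be split and reordered.

$$
\begin{aligned}
E[aX + bY] &= \sum_x \sum_y (ax + by)\, p_{X,Y}(x, y) \\
&= a \sum_x x \sum_y p_{X,Y}(x, y) + b \sum_y y \sum_x p_{X,Y}(x, y) \\
&= a \sum_x x\, p_X(x) + b \sum_y y\, p_Y(y) \\
&= a\,E[X] + b\,E[Y]
\end{aligned}
$$

The move from the second line to the third uses $p_X(x) = \sum_y p_{X,Y}(x, y)$ and $p_Y(y) = \sum_x p_{X,Y}(x, y)$, which is the law of total probability applied to the events $\{Y = y\}$ and $\{X = x\}$ respectively. Independence of $X$ and $Y$ is not needed anywhere in the argument.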

Discussion Status

Participants are actively exploring joint and marginal distributions, offering examples and asking for clarification on specific steps of the proof. Some guidance on the underlying mathematical relationships has been given, but the original poster's confusion has not yet been resolved.
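
A small numerical check may make the joint-versus-marginal relationship concrete. The joint distribution, the constants `a` and `b`, and the helper name `marginal` below are all made up for illustration and are not taken from the thread. This is a minimal sketch, not the participants' own example.

```python
from fractions import Fraction as F

# Hypothetical joint pmf of (X, Y); X and Y are deliberately dependent.
joint = {
    (0, 0): F(1, 4),
    (0, 1): F(1, 4),
    (1, 0): F(1, 8),
    (1, 1): F(3, 8),
}


def marginal(joint_pmf, index):
    """Sum the joint pmf over the other variable (law of total probability)."""
    result = {}
    for outcome, prob in joint_pmf.items():
        key = outcome[index]
        result[key] = result.get(key, F(0)) + prob
    return result


p_x = marginal(joint, 0)  # marginal pmf of X
p_y = marginal(joint, 1)  # marginal pmf of Y

# Expected values computed from the marginals.
e_x = sum(x * p for x, p in p_x.items())
e_y = sum(y * p for y, p in p_y.items())

# Arbitrary constants chosen for the illustration.
a, b = 2, 3

# Expected value of aX + bY computed directly from the joint pmf.
e_direct = sum((a * x + b * y) * p for (x, y), p in joint.items())

# The same quantity via linearity, using only the marginal expectations.
e_linear = a * e_x + b * e_y

print(e_direct, e_linear)
```

Both quantities are computed with exact fractions, so they can be compared with `==` without worrying about floating-point rounding.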

Contextual Notes

The original poster notes a lack of clarity in their instructor's explanations, indicating a need for a deeper understanding of the foundational concepts involved in the problem.