Finding Niches for Publishable Undergraduate Research


What ‘Publishable’ Means

Undergraduate interest in research is a good thing; it’s even better when students aspire to publish their work for review and consideration by a broader audience. First, we should consider what it means to be publishable.

Usually, “publishable” means a paper contains a novel and interesting result in either theory or experiment that is more likely than not to be correct.

“Novel” is a bit easier to understand objectively: it means the same result has not been published previously.

“Interesting” is more subjective. Often in the search for something “novel,” scientists (including undergrads) go off into the weeds, because accessible theories and experiments that have not been previously published are more likely to be found in areas where no one has cared enough to work very hard. This tends to make them less “interesting.”

Challenges for Undergraduates

Undergrads struggle with research ideas because they often assume their work needs to be new fundamental science of the sort that would be suitable for the Physical Review, when their skills and scientific maturity often have not yet prepared them for that level of contribution. As mentors of a lot of undergraduate (and high school) research, we’ve found that several other niches work well with the skill sets and scientific maturity more common among undergraduates (and high school students):

Inventing new instruments and techniques (or revisiting the usefulness of existing ones with faster/cheaper technology)

Student-built inexpensive devices

One of our students made a veritable cottage industry from inventing new, inexpensive laboratory devices for conducting blast wave research, and he’ll enter college in the fall with 6 peer-reviewed papers. The key here is to find a field where the development of new instruments is within the capabilities of the student.

Device for Underwater Laboratory Simulation of Unconfined Blast Waves

Shock Tube Design for High Intensity Blast Waves for Laboratory Testing of Armor and Combat Materiel

Revisiting computational techniques

Explicit numerical integration was too slow to be interesting or useful for computing Fourier transforms in the first decades after computers came into wide use, but this paper asked whether there was any advantage to revisiting an abandoned technique now that computers are fast enough to do it “the old-fashioned way.” There is a lot of room for experimental/computational work investigating the benefits of different computational approaches, whether simple tweaks on tried-and-true methods or methods that were left behind as too slow for the computers of their generation.
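As a small illustration of the kind of numerical experiment described above (a sketch, not the method from the paper), the Python below computes a continuous Fourier transform by explicit trapezoidal integration and checks it against a Gaussian, whose transform is known exactly. The convention F(ω) = ∫ f(t) e^(−iωt) dt, the integration window, and the grid are assumptions chosen for illustration; the function name `fourier_by_quadrature` is hypothetical.

```python
import numpy as np


def fourier_by_quadrature(f, t, omegas):
    """Approximate F(w) = integral of f(t) * exp(-1j * w * t) dt with the trapezoid rule.

    Explicit integration costs O(len(t) * len(omegas)) operations, far more than
    an FFT, but the output frequencies can be chosen freely instead of being
    fixed by the sampling grid.
    """
    ft = f(t)
    dt = np.diff(t)
    # Trapezoid weights: each interval contributes half its width to both ends.
    weights = np.zeros_like(t)
    weights[:-1] += dt / 2
    weights[1:] += dt / 2
    # One row of integrand values per requested frequency.
    integrand = ft[np.newaxis, :] * np.exp(-1j * np.outer(omegas, t))
    return integrand @ weights


# Numerical experiment: a Gaussian, whose transform sqrt(2*pi) * exp(-w**2 / 2) is exact.
t = np.linspace(-20.0, 20.0, 4001)
omegas = np.linspace(-5.0, 5.0, 11)
numeric = fourier_by_quadrature(lambda s: np.exp(-s**2 / 2), t, omegas)
exact = np.sqrt(2 * np.pi) * np.exp(-omegas**2 / 2)
max_error = np.max(np.abs(numeric - exact))
print(f"max |numeric - exact| = {max_error:.2e}")
```

A natural next step is to compare accuracy and run time against `np.fft.fft` on the same samples, or to try test functions that are less forgiving than a smooth, rapidly decaying Gaussian.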

A More Accurate Fourier Transform

Low-cost measurement approaches

I think there will always be a niche for achieving comparable measurement accuracy with instrumentation that is 1% to 10% of the cost of the state-of-the-art equipment used by well-funded world-class operations. This paper demonstrates an approach to measuring drag coefficients with inexpensive hobbyist equipment.

Accurate Measurements of Free Flight Drag Coefficients with Amateur Doppler Radar
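For readers wondering how radar velocities turn into a drag coefficient, here is one minimal approach, assuming (our simplification, not necessarily the paper's) a flat trajectory, negligible gravity, and a drag coefficient that stays constant over a short window. With drag force ½ρv²C_dA, the equation m dv/dt = −½ρC_dAv² integrates to 1/v = 1/v₀ + kt with k = ρC_dA/(2m), so a straight-line fit of 1/v against time recovers C_d. The function name and the projectile values are made up for illustration.

```python
import numpy as np


def drag_coefficient_from_velocity(t, v, mass, diameter, air_density):
    """Estimate a constant drag coefficient from radar velocity samples.

    Assumes m dv/dt = -0.5 * rho * Cd * A * v**2 (gravity and any change in Cd
    neglected), so 1/v is linear in t with slope k = rho * Cd * A / (2 m).
    """
    area = np.pi * diameter**2 / 4
    slope, _intercept = np.polyfit(t, 1.0 / np.asarray(v), 1)
    return 2 * mass * slope / (air_density * area)


# Synthetic check with made-up projectile values (not data from the paper).
mass, diameter, rho, cd_true, v0 = 0.004, 0.0057, 1.225, 0.30, 900.0
k = rho * cd_true * (np.pi * diameter**2 / 4) / (2 * mass)
t = np.linspace(0.0, 0.5, 200)
rng = np.random.default_rng(1)
# Exact solution plus 0.1% multiplicative noise standing in for radar scatter.
v = 1.0 / (1.0 / v0 + k * t) * (1 + 0.001 * rng.standard_normal(t.size))
cd_est = drag_coefficient_from_velocity(t, v, mass, diameter, rho)
print(f"estimated Cd = {cd_est:.4f} (true {cd_true})")
```

Supersonic drag coefficients change with Mach number, so with real data one would fit short windows or a Mach-dependent model rather than a single constant.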

Ballistics and barrel friction

A rifle is one of the simplest forms of the internal combustion engine, and measuring the friction of a bullet in the barrel was one of the outstanding unsolved problems in internal ballistics for many decades. This method was discovered in a student project by reanalyzing data acquired in a different project on bullet stability.

Measuring Barrel Friction in the 5.56mm NATO

The problem of predicting bullet penetration and tissue damage from first principles is one of the most important unsolved problems in terminal ballistics. Addressing those issues is beyond the skill set of most undergraduates, but the development of experimental methods to collect the data for testing and refining new theoretical approaches is well within their abilities – combining first-year physics and calculus in very practical ways.

Bullet Retarding Forces in Ballistic Gelatin by Analysis of High Speed Video
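The first-year physics and calculus mentioned above amount to turning frame-by-frame positions into velocities, accelerations, and forces. A minimal sketch, assuming positions read off each video frame and a purely quadratic retarding force F = cv² (an illustrative model, not necessarily the one the paper fits); all values and function names are hypothetical:

```python
import numpy as np


def retarding_force(x, frame_rate, mass):
    """Speed and retarding force from per-frame positions via central differences."""
    dt = 1.0 / frame_rate
    v = np.gradient(x, dt)
    a = np.gradient(v, dt)
    # np.gradient falls back to one-sided differences at the ends; drop those frames.
    core = slice(3, -3)
    return v[core], -mass * a[core]


def fit_quadratic_drag(v, force):
    """Least-squares c in force = c * v**2 (a line through the origin in v**2)."""
    return np.sum(force * v**2) / np.sum(v**4)


# Synthetic, noise-free track for pure quadratic drag (hypothetical values):
# x(t) = (m / c) * ln(1 + c * v0 * t / m).
mass, c_true, v0, fps = 0.004, 0.004, 900.0, 100_000
t = np.arange(0, 200) / fps
x = (mass / c_true) * np.log1p(c_true * v0 * t / mass)
v, force = retarding_force(x, fps, mass)
c_est = fit_quadratic_drag(v, force)
print(f"estimated c = {c_est:.5f} kg/m (true {c_true})")
```

Differentiating twice amplifies measurement noise badly, so real video data would need smoothing, or a direct fit of position versus time, before this step; the noise-free track here only checks the bookkeeping.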

Novel experiments that are interesting because of environmental applications

Environmental motivation

Environmental applications make any project more interesting. The strongest trend in ballistics in the last 20 years is getting the lead out to reduce human exposure and environmental impacts. The industry has lots of promising new technologies and products, and the results of well-designed independent testing will always get their fair share of attention.

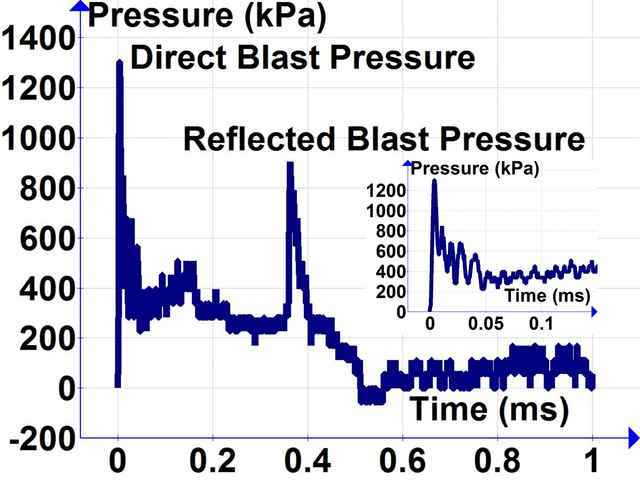

High-speed measurement of firearm primer blast waves

Researchers made a big splash a few years ago demonstrating the effectiveness of magnetic hooks in reducing shark bycatch. This result had potential applications in longline fishing but depended on the unproven assumption that magnets would affect the catch rate of elasmobranchs (sharks and rays) but not teleosts (bony fishes). The experiment to test that assumption was simple: find a magnet whose field was comparable to the earth’s magnetic field at 0.5 m, attach it to some hooks, go fishing, and record the catch rates on the magnetic hooks and on a non-magnetic control group. There are lots of species left to test …
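The “comparable to the earth’s field at 0.5 m” criterion is easy to turn into a number if the magnet is treated as a point dipole and the field is evaluated on its axis, B = μ₀m/(2πr³). The sketch below assumes 50 μT as a round value for the earth’s field (it varies with location, roughly 25 to 65 μT at the surface) and computes the dipole moment that matches it at 0.5 m; the function names are illustrative.

```python
import math

MU_0 = 4e-7 * math.pi  # vacuum permeability, T*m/A (the current SI value differs negligibly)


def on_axis_dipole_field(moment, r):
    """Field in tesla on the axis of a point dipole; moment in A*m^2, r in m."""
    return MU_0 * moment / (2 * math.pi * r**3)


def moment_for_field(b_target, r):
    """Dipole moment (A*m^2) that gives b_target tesla on-axis at distance r."""
    return 2 * math.pi * r**3 * b_target / MU_0


EARTH_FIELD = 50e-6  # assumed round value; the real field depends on location
m_needed = moment_for_field(EARTH_FIELD, 0.5)
print(f"required moment: {m_needed:.2f} A*m^2")
for r in (0.1, 0.25, 0.5, 1.0):
    print(f"r = {r:4.2f} m: B = {on_axis_dipole_field(m_needed, r) * 1e6:8.1f} uT")
```

Off axis, in the magnet’s equatorial plane, the field is half as large, and the point-dipole approximation only holds at distances large compared with the magnet itself. The steep 1/r³ falloff is why the field near the hook can be many times the earth’s field while becoming negligible a meter away.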

Novel experiments that are interesting because of educational applications

Educational experiment examples

The potential research projects here run the spectrum from new and interesting possible undergraduate laboratories to testing the specifications of lab research gear to just having fun. This project was born when the student realized a transparent tube and a high-speed video camera could accurately measure what happens inside of potato cannons.

Studying the Internal Ballistics of a Combustion Driven Potato Cannon using High-speed Video

This project was born when a student realized a high-speed video camera was a great tool for measuring deflagration velocities. With the wider availability and lower prices of high-speed cameras, there are many other fuel-air and oxy-fuel combinations that can be tested in a wide array of geometries. In addition to the educational interest, there are important questions in chemical kinetics and deflagration-to-detonation transitions that can be answered with relatively simple experiments.

Measuring Deflagration Velocity in Oxy-Acetylene with High-Speed Video
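Once the flame front is located on each frame, the velocity measurement reduces to a straight-line fit of position against time. A minimal sketch, assuming steady propagation and a pixel scale calibrated from an object of known size in the frame; the function name and the numbers in the example are hypothetical:

```python
import numpy as np


def front_velocity(front_px, frame_rate, metres_per_pixel):
    """Least-squares flame-front speed (m/s) and its standard error.

    Assumes steady propagation, so front position is linear in time, and a
    length scale calibrated from an object of known size in the same frame.
    """
    x = np.asarray(front_px, dtype=float) * metres_per_pixel
    t = np.arange(x.size) / frame_rate
    (speed, _offset), cov = np.polyfit(t, x, 1, cov=True)
    return speed, float(np.sqrt(cov[0, 0]))


# Made-up readings: the front advances 25 px per frame at 10,000 frames/s, 0.2 mm per px.
front_px = 100 + 25 * np.arange(20)
speed, stderr = front_velocity(front_px, frame_rate=10_000, metres_per_pixel=0.0002)
print(f"front speed = {speed:.2f} +/- {stderr:.2f} m/s")
```

With real frames the residuals will not be zero, and a trend in them (acceleration of the front) is itself interesting, since it may signal the approach to a deflagration-to-detonation transition.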

High-speed video is a great experimental tool because it allows accurate determination of position vs. time of a visual signal. But there are many cases where the kinematics can be determined with a microphone and appropriate sound signal. Think of what Galileo could have done with a sound card.

An Acoustic Demonstration of Galileo’s Law of Falling Bodies
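One way Galileo’s law could be checked with a sound card, sketched under our own assumptions (synthetic audio, release at t = 0, a fixed amplitude threshold): detect the impact clicks, confirm that drop heights growing as n² give evenly spaced clicks, and fit g from h = ½gt². Evenly spaced clicks test the law without knowing the release instant; the fit for g does assume it is known. Function names and setup are hypothetical.

```python
import numpy as np


def click_onsets(signal, fs, threshold, dead_time=0.01):
    """Times (s) where |signal| first exceeds threshold after at least dead_time of quiet."""
    loud = np.flatnonzero(np.abs(signal) > threshold)
    if loud.size == 0:
        return np.array([])
    # A new click starts wherever the gap since the previous loud sample is long.
    new_click = np.diff(loud) > dead_time * fs
    starts = np.concatenate(([loud[0]], loud[1:][new_click]))
    return starts / fs


def fit_g(heights, times):
    """Least-squares g in h = g * t**2 / 2, with times measured from release."""
    h = np.asarray(heights, dtype=float)
    t = np.asarray(times, dtype=float)
    return 2 * np.sum(h * t**2) / np.sum(t**4)


# Synthetic recording: drop heights growing as n**2 should give evenly spaced clicks.
fs, g_true = 44_100, 9.81
heights = 0.1 * np.arange(1, 6) ** 2
rng = np.random.default_rng(0)
audio = 0.01 * rng.standard_normal(fs)  # one second of background noise
for t_hit in np.sqrt(2 * heights / g_true):
    i = int(np.ceil(t_hit * fs))
    audio[i:i + 50] += np.exp(-np.arange(50) / 10)  # short decaying click
times = click_onsets(audio, fs, threshold=0.5)
print("intervals (s):", np.round(np.diff(times), 4))
print(f"fitted g = {fit_g(heights, times):.3f} m/s^2")
```

The timing resolution is one sample (about 23 μs at 44.1 kHz), which is why a sound card is a surprisingly good stopwatch for this kind of kinematics.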

Echo-based measurement of the speed of sound

Finding mistakes in published papers and writing comments pointing them out

Spotting errors in literature

Unless a student has really keen error detection skills and voluminous reading habits, they may not catch any mistakes in published papers. But most well-read faculty members and active scientists know where the bodies are buried and can point students to the low-hanging fruit. This paper responds to a “peer-reviewed” paper with both fundamental errors in the statistics as well as exaggerated claims in the abstract.

My wife found the original errors when mentoring a student on a related project. We didn’t have time to follow up on them then, so we set them aside until we had students looking for a project. They did a great job tracking down and documenting the mistakes. High school math and common sense were all that was required.

Errors in Length-weight Parameters at FishBase.org
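As a hypothetical illustration of the kind of check that needs only high school math (not a claim about which errors the paper documents): in the length-weight relation W = aLᵇ, the value of a depends on the units, so a parameter set fitted with lengths in millimetres but labelled as centimetres predicts absurd weights. Comparing predicted weights at a reference length exposes such entries. Lengths in cm and weights in g are assumed, and the function names and parameter values are illustrative.

```python
import numpy as np


def predicted_weight(a, b, length):
    """Length-weight relationship W = a * L**b (units fixed by how a and b were fitted)."""
    return a * length**b


def convert_a(a, b, length_factor):
    """Rescale a when lengths are re-expressed as L_new = length_factor * L_old.

    For example, cm -> mm uses length_factor = 10, giving a_mm = a_cm / 10**b.
    """
    return a / length_factor**b


def flag_outliers(params, ref_length, max_ratio=2.0):
    """Flag (a, b) pairs whose predicted weight at ref_length is far from the median."""
    w = np.array([predicted_weight(a, b, ref_length) for a, b in params])
    ratio = w / np.median(w)
    return (ratio > max_ratio) | (ratio < 1 / max_ratio)


# Hypothetical parameter sets for one species; the last was fitted in mm but labelled cm.
params = [(0.010, 3.00), (0.012, 2.98), (0.011, 3.02), (convert_a(0.010, 3.00, 10), 3.00)]
flags = flag_outliers(params, ref_length=30.0)
print(flags)
```

A median-based screen only works when most entries are sound; if errors are common, comparing against independently measured fish of known length and weight is the safer check.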

Policy and public trust

If you see something, say something – or find a student to track down the details and write up the paper. I don’t know what bothers me more, the original mistake, or the fact that it still has not been fixed nearly four YEARS after we pointed it out. We can “March for Science” until we are blue in the face, but if science cannot really demonstrate the “self-correction” that it claims, large segments of the public will remain justified in “denying” claims from parties that prove themselves less than trustworthy.

When public policy is concerned, there is often something of a shell game where the predictions get more press than a retrospective comparison between predictions and experiments. Since the publication of this student paper, I am happy to report that the scientists making their predictions have improved their models and the resulting accuracy of their predictions.

Predictions Wrong Again on Dead Zone Area – Gulf of Mexico Gaining Resistance to Nutrient Loading

Review/hypothesis papers bringing together different fields that are related, but not well connected in the literature

Bridging disciplines

The ideas for these papers require a broad knowledge of the literature in related fields, suggesting the genesis of these hypotheses more likely lies with faculty mentors than with students. But many (perhaps even most) active researchers have some hypotheses swirling around in the back of their minds that a bit of student prodding can bring forward. Many faculty have neither time nor interest to do all the literature work and writing to produce a coherent paper, but they tend to be happy enough when free labor appears and is willing to do the harder work in response to some brainstorming sessions, throwing some logical diagrams and outlines on a whiteboard, and other legwork to get hypotheses and under-appreciated connections into print for colleagues to consider.

Our paper on nutrient loading and red snapper production was born from reading about the red snapper management debate combined with closely following the literature relating to “dead zones” in the Gulf of Mexico purportedly resulting from nutrient loading of farm fertilizers flowing down the Mississippi River. We were perplexed, because fishing on Louisiana’s Gulf coast is among the best in the United States, and we knew from first-hand experience that those waters are teeming with life and anything but a “dead zone.” The light bulb went on when reading a thesis from LSU that observed fisheries biomass was highly correlated with proximity to the mouth of the Mississippi River.

Nutrient Loading Increases Red Snapper Production in the Gulf of Mexico

Our magnetic shark deterrent paper is just one of several cases where biologists had stalled in progress due to insufficient ongoing mastery of freshman physics. The literature had one basic hypothesis (sharks can detect magnets), but experimental designs to distinguish between possible magnetoreception mechanisms were lacking, and papers favoring one mechanism over another reflected the confirmation biases of their authors more than clearly supportive or exclusionary experimental evidence. There was plenty of room for a good undergraduate physics student to articulate the strongest contenders: electromagnetic induction in saltwater, direct magnetoreception with macroscopic magnetic materials, and direct magnetoreception with microscopic magnetic materials (biogenic magnetite). We didn’t solve the problem, but at least we articulated the choices clearly to support improved experimental designs. (For example, dependence on conductivity or current speeds would favor electromagnetic induction.)

Review of Magnetic Shark Deterrents: Hypothetical Mechanisms and Evidence for Selectivity
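For the induction hypothesis, the relevant quantity is the electric field in the frame of the water or animal moving relative to the magnet. A worked form, treating the magnet as a point dipole and evaluating on its axis (our simplifying assumptions):

```latex
B(r) = \frac{\mu_0 m}{2\pi r^3}, \qquad
\mathbf{E}' = \mathbf{v} \times \mathbf{B}, \qquad
\lvert \mathbf{E}' \rvert \le v\,B(r) = \frac{\mu_0 m v}{2\pi r^3}, \qquad
\lvert \mathbf{J} \rvert \sim \sigma \lvert \mathbf{E}' \rvert
```

Here v is the velocity of the animal or seawater relative to the magnet and σ is the seawater conductivity. The induced field scales with v and the current it can drive scales with σ, which is why a measured dependence on current speed or conductivity would point toward induction rather than direct magnetoreception.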

Testing products to compare measured values with product specifications

Verifying product specifications

There may be greater interest than we have explored in testing whether laboratory equipment meets its product specifications. Is the thermometer, balance, voltmeter, power meter, spectrum analyzer, etc. as accurate as is claimed? Every sensor in the Vernier catalog is a potential project. Most attentive instructors in freshman physics labs will have a good idea of what gear is as accurate as claimed, and what gear falls short. We’ve taken a somewhat different approach, testing products marketed to hobbyists, sportsmen, military, and law enforcement.

Testing Estes Thrust Claims for the A10-PT Rocket motor

Comparing Measured Fluorocarbon Leader Breaking Strength with Manufacturer Claims

More Inaccurate Specifications of Ballistic Coefficients

Comparing Advertised Ballistic Coefficients with Independent Measurements
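For comparisons like these, a minimal summary of repeated measurements against a manufacturer’s claim might look like the sketch below. It is our illustration rather than the statistics used in those papers; the function name is hypothetical and the readings in the example are made up.

```python
import math
import statistics


def compare_to_spec(measurements, claimed):
    """Summarise repeated measurements against a manufacturer's claimed value.

    Returns the mean, its standard error, the mean as a fraction of the claim,
    and the t statistic (mean - claimed) / SE with n - 1 degrees of freedom.
    """
    n = len(measurements)
    mean = statistics.fmean(measurements)
    se = statistics.stdev(measurements) / math.sqrt(n)
    return {
        "mean": mean,
        "std_error": se,
        "fraction_of_claim": mean / claimed,
        "t_statistic": (mean - claimed) / se,
    }


# Made-up breaking-strength readings (kg) for a line rated at 10 kg.
report = compare_to_spec([9.0, 9.2, 8.8, 9.1, 8.9], claimed=10.0)
print(report)
```

Turning the t statistic into a p-value needs a t table or a statistics package (for example `scipy.stats.ttest_1samp`), and for a “does it meet spec” question a one-sided test is usually the natural choice.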

Testing validity of commonly used equations with little published data supporting how they are used

Motivation to test common formulas

Most equations in science have some area of applicability where they have been validated as accurate. But over time, usage often expands far beyond the “fine print” relating to the assumptions and conditions where the equations are valid. Experimental tests of these equations to explore their validity in areas of ongoing application can be of great interest. A great habit of mind for any scientist (student or not) is to ask, “Where is the data supporting that well-known equation, and does the data support the broad usage of how that equation is commonly employed?”

Some models reach widespread use because they are easy to apply and available rather than because they are rigorous or consistently make accurate predictions. In the rush to provide some estimate, people with more engineering than scientific mindsets will use the best equation available and run with it rather than giving due consideration to the validation and expected accuracy. When we started blast research in 2007, we noticed wide use of the acoustic impedance model of blast wave transmission in designing protective equipment and interpreting experiments. We could find no data supporting how it was being used, so we designed and executed appropriate experiments in both air blasts and underwater blasts.

A Test of the Acoustic Impedance Model of Blast Wave Transmission (in the air)
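The model under test is, in its textbook form, the plane-wave, normal-incidence result for small-amplitude sound, with characteristic impedance Z = ρc. The sketch below computes its predictions; the rounded air and water properties are assumptions, and blast waves are finite-amplitude shocks rather than small-amplitude sound, which is exactly the kind of fine print worth testing.

```python
def impedance(density, sound_speed):
    """Characteristic acoustic impedance Z = rho * c, in rayl (Pa*s/m)."""
    return density * sound_speed


def normal_incidence(z1, z2):
    """Plane-wave coefficients for a wave passing from medium 1 into medium 2.

    Returns (pressure reflection, pressure transmission, intensity transmission).
    """
    r_p = (z2 - z1) / (z2 + z1)
    t_p = 2 * z2 / (z2 + z1)
    t_i = 4 * z1 * z2 / (z1 + z2) ** 2
    return r_p, t_p, t_i


z_air = impedance(1.2, 343.0)        # rounded values for air
z_water = impedance(1000.0, 1480.0)  # rounded values for water
r_p, t_p, t_i = normal_incidence(z_air, z_water)
print(f"air -> water: pressure x{t_p:.4f}, transmitted energy fraction {t_i:.2e}")
```

The model predicts that pressure nearly doubles crossing from air into water while only about a tenth of a percent of the energy gets through; predictions like these are what protective-equipment designers were leaning on.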

Ideas for the next two student papers came from considering experimental possibilities that made use of regular travel between Colorado (high elevation, > 7000 ft) and Louisiana (sea level). Students brainstormed ways to conduct one-day experiments with existing equipment, so they ended up doing scholarly searches for formulas purporting to predict effects of air pressure, air density, or altitude. There are lots of formulas predicting these effects; the challenge was to find examples that were under-supported by experimental data.

Altitude and air-density experiments

The formula suggesting the proportionality of aerodynamic drag to air density is in nearly every introductory physics textbook. But many fail to clarify that it is well known that the drag coefficient is not constant, but depends on the Reynolds number, which, in turn, depends on air density. The assertion of the independence of drag coefficient from air density for supersonic projectiles is more subtle – being buried in formulas published by Robert McCoy of the Army Ballistics Research Laboratory at Aberdeen Proving Ground in Maryland. Digging into the experimental support for those formulas showed that the data supported the independence of drag coefficient from Reynolds number (over the applicable range of projectile diameters, not in an absolute sense), and independence from Reynolds number was used to infer independence from air density. However, all the supporting experimental data was gathered at the sea level facilities in Maryland, so the air density range was too limited to provide direct support for the independence of the drag coefficient from air density.
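To see the size of the effect the Colorado-to-Louisiana trips could probe, the sketch below estimates air density from the International Standard Atmosphere (an idealization; actual density depends on the day’s pressure, temperature, and humidity, which an experiment would measure) and shows that, at fixed speed and diameter, both the drag force at constant C_d and the Reynolds number scale with density. The 900 m/s speed and 5.7 mm diameter are illustrative, and viscosity is held fixed because it depends on temperature rather than pressure.

```python
import math

R_AIR = 287.05  # specific gas constant for dry air, J/(kg*K)


def isa_density(altitude_m):
    """Dry-air density from the ISA troposphere model (valid below 11 km)."""
    T0, P0, LAPSE = 288.15, 101_325.0, 0.0065
    T = T0 - LAPSE * altitude_m
    P = P0 * (T / T0) ** 5.25588
    return P / (R_AIR * T)


def reynolds(density, speed, diameter, viscosity=1.81e-5):
    """Re = rho * v * d / mu (default mu: air near 15 C, in Pa*s)."""
    return density * speed * diameter / viscosity


def drag_force(density, speed, diameter, cd):
    """F = 0.5 * rho * v**2 * Cd * A for a projectile of the given diameter."""
    return 0.5 * density * speed**2 * cd * math.pi * diameter**2 / 4


rho_sea = isa_density(0.0)
rho_co = isa_density(2133.6)  # about 7000 ft
for label, rho in (("sea level", rho_sea), ("7000 ft", rho_co)):
    print(f"{label}: rho = {rho:.3f} kg/m^3, Re = {reynolds(rho, 900.0, 0.0057):.3g}")
print(f"density ratio = {rho_co / rho_sea:.3f}")
```

Because Re moves with density, a density experiment cannot by itself separate “C_d depends on density” from “C_d depends on Reynolds number”; that is why the independence from Reynolds number established at sea level does not automatically settle the question.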

The formula for the dependence of rocket motor thrust on ambient pressure was found on a NASA website. Due diligence in literature searches suggested an absence of published experimental support for the formula. No literature search is perfect, so it was unclear if there was supporting data published in an obscure book or journal inaccessible from the available libraries and search engines, or if there was supporting data sitting unpublished but available to NASA and Department of Defense rocket scientists. In either case, the difficulty in finding supporting data motivated a nice experiment that could be conducted with relatively inexpensive hobbyist rocket motors.

Altitude Dependence of Rocket Motor Performance
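We assume the formula in question is the standard rocket thrust equation, F = ṁvₑ + (pₑ − pₐ)Aₑ, where the ambient pressure pₐ enters only through the pressure term. Holding ṁ, vₑ, and pₑ fixed (the assumption the experiment puts to the test), the predicted thrust gain at altitude is (p_sea − p_alt)Aₑ. All motor values below are made up, and the function names are illustrative.

```python
import math


def thrust(mdot, exit_velocity, exit_pressure, ambient_pressure, exit_area):
    """Rocket thrust equation F = mdot * v_e + (p_e - p_a) * A_e, SI units."""
    return mdot * exit_velocity + (exit_pressure - ambient_pressure) * exit_area


def predicted_thrust_gain(p_sea, p_alt, exit_area):
    """Thrust increase at altitude if mdot, v_e and p_e do not depend on p_a."""
    return (p_sea - p_alt) * exit_area


# Made-up motor values, chosen only to exercise the formula.
mdot, v_e, p_e = 0.005, 800.0, 101_325.0
a_e = math.pi * 0.0025**2           # hypothetical 5 mm nozzle exit diameter
p_sea, p_alt = 101_325.0, 78_200.0  # roughly sea level and 7000 ft (standard atmosphere)
f_sea = thrust(mdot, v_e, p_e, p_sea, a_e)
f_alt = thrust(mdot, v_e, p_e, p_alt, a_e)
print(f"sea level {f_sea:.3f} N, altitude {f_alt:.3f} N")
print(f"predicted gain {predicted_thrust_gain(p_sea, p_alt, a_e):.3f} N")
```

The prediction depends only on the nozzle exit area and the pressure difference, so a measured thrust change can be compared with it directly without knowing ṁ or vₑ.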

This is probably the niche that requires the most background work and guidance from a mentor to identify because the idea to test how the formula is being used usually originates with the recognition of an ABSENCE of supporting data. Gaining confidence that there is an absence of supporting data in the literature requires an extremely thorough background literature search. But note that in 3 or 4 of the cases above, the new (and relatively simple) experimental result showed that the application of the well-known formula was inappropriate. Formulas without supporting data are wrong a lot of the time.

Takeaway for mentors

Our niches are unlikely to produce significant advances in FUNDAMENTAL physics; the skills and resources for such advances are often beyond the abilities of undergrads. But there is a lot of good, solid science to be done in the niches we find useful. Most of the discussion among my physics colleagues would not center on whether these papers are “publishable” (since they are all published), but on whether they are “physics” of the sort suitable for undergrad research. Each institution sets its own standards on that. But one can certainly have some fun and accomplish some solid research with a MythBusters mindset.

I grew up working in bars and restaurants in New Orleans and viewed education as a path to escape menial and dangerous work environments, so I majored in Physics at LSU. After being a finalist for the Rhodes Scholarship, I was offered graduate research fellowships from both Princeton and MIT, and I completed a PhD in Physics at MIT in 1995. I have published papers in theoretical astrophysics, experimental atomic physics, chaos theory, quantum theory, acoustics, ballistics, traumatic brain injury, epistemology, and education.

My philosophy of education emphasizes the tremendous potential for accomplishment in each individual and that achieving that potential requires efforts in a broad range of disciplines including music, art, poetry, history, literature, science, math, and athletics. As a younger man, I enjoyed playing basketball and Ultimate. Now I play tennis and mountain bike 2000 miles a year.

I have started tutoring a high school student in a gifted & talented program.

I am going to get him to investigate the design and performance of a Yagi antenna.

Plenty of things to optimise but I can't think of anything novel about it.

Any ideas? Will also post on its own thread to get ideas.

My son often points out (sometimes with sarcasm) that the path to novelty in well-researched areas is adding qualifiers. With over 28k Google Scholar hits on Yagi antennas, I suspect that may be the case here. The trick with achieving novelty through qualifiers is not going so far off into the weeds that the project no longer addresses something interesting to readers who actually care. In practice, my son's sarcasm usually suggests that a given case has added qualifiers in a way that renders the idea uninteresting.

In the Yagi antenna case, the recent trends are toward smaller wavelengths (microwaves and mm waves), antenna arrays, and printed antennas. Smaller wavelengths add both computational and experimental challenges, as designs become more sensitive to dimensional variations and to imprecision in realizing a design in practice, since some dimensions can no longer be considered small relative to the operational wavelength. An additional challenge is that as wavelengths decrease, the cost of accurate equipment for performing experiments tends to rise. The cost and complexity of modeling software also tend to increase.

One approach for a student new to the field of Yagi antennas (or RF in general) might be to test whether some existing products meet their claimed specifications rather than trying to jump in and improve existing designs. There are several 2.4 GHz Yagi antennas on the market for extended range Wi-Fi applications. If the right equipment is available, it shouldn't be too hard to design some simple experiments to determine whether or not a few models meet their specifications, and (if not) how far out of spec different models are. Pointing out problems in existing work is often lower hanging fruit for people entering a new field than coming up with new, better designs.
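A standard way to check a gain claim is the gain-comparison (substitution) method: keep the transmitter, range, and alignment fixed, swap a reference antenna of known gain for the antenna under test, and compare received powers in dB. The sketch below assumes such a reference antenna and uses made-up power readings; it also computes the 2.4 GHz wavelength and the common 2D²/λ far-field distance, since measurements taken too close to the antenna will not reflect its far-field gain. Function names are illustrative.

```python
C = 299_792_458.0  # speed of light, m/s


def gain_by_comparison(ref_gain_dbi, p_aut_dbm, p_ref_dbm):
    """Gain-comparison (substitution) method, everything in dB.

    Same transmitter, range and alignment; only the receiving antenna changes:
    G_aut = G_ref + (P_aut - P_ref).
    """
    return ref_gain_dbi + (p_aut_dbm - p_ref_dbm)


def wavelength(freq_hz):
    return C / freq_hz


def far_field_distance(largest_dimension_m, freq_hz):
    """Common far-field criterion r >= 2 * D**2 / wavelength."""
    return 2 * largest_dimension_m**2 / wavelength(freq_hz)


# Made-up readings: a 10 dBi reference gives -40.0 dBm, the Yagi under test -38.5 dBm.
measured = gain_by_comparison(10.0, -38.5, -40.0)
claimed = 15.0
print(f"measured {measured:.1f} dBi, {claimed - measured:.1f} dB short of the claim")
print(f"wavelength at 2.4 GHz: {wavelength(2.4e9) * 100:.2f} cm")
print(f"far field for a 0.5 m antenna: beyond {far_field_distance(0.5, 2.4e9):.1f} m")
```

Repeating the swap several times and averaging helps separate a real shortfall from multipath and alignment scatter, which can easily be a dB or more indoors.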


Nice article! We just had Senior Design Day here at the U of A, where all the seniors present the projects they've been working on all year. I'm not sure how many of these would qualify as "Research", but there were definitely some interesting projects. I talked for a bit with one of the seniors whose project was to estimate the size of particles in a solution by looking at their Brownian motion. It appeared to be pretty successful.
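For readers curious how that works, one common route (we don’t know the details of that particular project) is to measure the diffusion coefficient from particle tracks, using ⟨Δr²⟩ = 4DΔt in two dimensions, and convert it to a radius with the Stokes-Einstein relation D = k_BT/(6πηr). The sketch below checks the arithmetic on a simulated track; the particle size, temperature, and viscosity are assumptions, and real video data adds localization noise and drift.

```python
import numpy as np

K_B = 1.380649e-23  # Boltzmann constant, J/K


def diffusion_from_track(xy, dt):
    """2-D diffusion coefficient from single-step displacements: <dr^2> = 4 D dt."""
    steps = np.diff(xy, axis=0)
    return np.mean(np.sum(steps**2, axis=1)) / (4 * dt)


def stokes_einstein_radius(diffusion, temperature, viscosity):
    """Hydrodynamic radius r from D = k_B T / (6 pi eta r)."""
    return K_B * temperature / (6 * np.pi * viscosity * diffusion)


# Synthetic track for a hypothetical 0.5 um radius sphere in water near 20 C.
T, eta, r_true, dt = 293.15, 1.0e-3, 0.5e-6, 0.01
D_true = K_B * T / (6 * np.pi * eta * r_true)
rng = np.random.default_rng(2)
steps = rng.normal(0.0, np.sqrt(2 * D_true * dt), size=(20_000, 2))
track = np.cumsum(steps, axis=0)
r_est = stokes_einstein_radius(diffusion_from_track(track, dt), T, eta)
print(f"estimated radius = {r_est * 1e6:.3f} um (true {r_true * 1e6} um)")
```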

Fantastic idea for an article. Would love to see if anybody has similar ideas for mathematics research (although I would have liked to see it about 4 years ago when I was trying to do undergrad research). I have some ideas but little time to elaborate on them now.

When I worked at the Air Force Academy, the Dept of Mathematical Sciences took a broad view of what fulfilled their "senior research" course requirements. I mentored a couple of their senior projects: one was working toward a three-dimensional (two inputs, one output) cumulative probability function for standard weight in fish that would allow computing a standard weight equation with fewer data points than existing methods; another was an applied method to better understand rocket motor performance. I'm fairly certain that the committee would have approved just about any applied project a senior wanted to do that was heavy in math, regardless of the application.

A lot of very applied math also pops up in the Math category at ISEF-affiliated state and regional science fairs. Projects that might otherwise compete in Animal Sciences, Environmental Science, Biomedical and Health Sciences, etc. often compete in Mathematics if the data analysis is sufficiently intricate. Last year they added a new "Computational Biology and Bioinformatics" category to provide a place for projects that often competed in Mathematics.

When we brainstorm research projects with students, we usually touch on a variety of projects that can be grouped under the general category of "Numerical Experiments." The idea is that the student formulates a hypothesis about which computational or analysis approach might be better for some situation, with a clear definition of what "better" means in that context: faster, more accurate, simpler, etc. Then one conducts a numerical experiment to test the hypothesis. The student may create data using a spreadsheet with known good inputs plus added Gaussian noise, and then conduct the numerical experiments with different analysis techniques, trying to recover the known good inputs.
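A minimal numerical experiment in this style, written in Python rather than a spreadsheet: the hypothesis is that a least-squares slope recovers the known input more accurately than a slope drawn through only the first and last points. The line parameters, noise level, and trial count are arbitrary choices, and the function names are illustrative.

```python
import numpy as np


def ols_slope(x, y):
    """Ordinary least-squares slope."""
    xm = x - x.mean()
    return np.sum(xm * (y - y.mean())) / np.sum(xm**2)


def endpoint_slope(x, y):
    """Naive slope from the first and last points only."""
    return (y[-1] - y[0]) / (x[-1] - x[0])


def rms_error(estimator, true_slope=2.0, intercept=1.0, noise=0.5, n=10, trials=2000, seed=0):
    """RMS error of a slope estimator over many noisy synthetic data sets."""
    rng = np.random.default_rng(seed)
    x = np.arange(n, dtype=float)
    errors = []
    for _ in range(trials):
        # Known good inputs plus Gaussian noise.
        y = intercept + true_slope * x + rng.normal(0.0, noise, n)
        errors.append(estimator(x, y) - true_slope)
    return float(np.sqrt(np.mean(np.square(errors))))


rms_ols = rms_error(ols_slope)
rms_end = rms_error(endpoint_slope)
print(f"RMS slope error: least squares {rms_ols:.4f}, endpoints {rms_end:.4f}")
```

Using the same seed for both estimators means they see identical noisy data sets, so the comparison is paired rather than confounded by different random draws.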

There are also lots of questions in chaos and dynamics of complex systems that can be answered with numerical experiments that simply numerically integrate the system of ODEs describing the system. Computers are fast enough these days that the numerical integration can be done with Runge-Kutta methods, so the numerical experiments are a matter of exploring phase space to determine how much of it is chaotic and varying system parameters to observe the transition from order to chaos as the knobs are turned. As a physicist, I tend to find Hamiltonian systems the most interesting, but I'm sure real math professors can suggest a variety of interesting non-Hamiltonian systems.
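As a sketch of such a numerical experiment, the Python below integrates the Hénon-Heiles system (our choice of a classic Hamiltonian example, not one named above) with a classical fourth-order Runge-Kutta step, follows two orbits that start 10⁻⁸ apart, and reports energy drift as a check on the integrator. Step size, run length, and initial conditions are assumptions; sweeping the energy E toward the escape energy of 1/6 is the knob that moves the system from mostly regular to mostly chaotic orbits.

```python
import numpy as np


def henon_heiles(state):
    """Time derivative of (x, y, px, py) for H = (px^2 + py^2)/2 + (x^2 + y^2)/2 + x^2 y - y^3/3."""
    x, y, px, py = state
    return np.array([px, py, -x - 2 * x * y, -y - x**2 + y**2])


def energy(state):
    x, y, px, py = state
    return 0.5 * (px**2 + py**2) + 0.5 * (x**2 + y**2) + x**2 * y - y**3 / 3


def rk4_step(f, state, dt):
    """One classical fourth-order Runge-Kutta step."""
    k1 = f(state)
    k2 = f(state + 0.5 * dt * k1)
    k3 = f(state + 0.5 * dt * k2)
    k4 = f(state + dt * k3)
    return state + dt * (k1 + 2 * k2 + 2 * k3 + k4) / 6


def integrate(state, dt, steps):
    state = np.asarray(state, dtype=float)
    for _ in range(steps):
        state = rk4_step(henon_heiles, state, dt)
    return state


# Two orbits at energy E = 1/8 that start 1e-8 apart in y.
E, y0 = 1 / 8, 0.1
px0 = np.sqrt(2 * E - y0**2 + 2 * y0**3 / 3)  # with x = py = 0, solve H = E for px
a0 = np.array([0.0, y0, px0, 0.0])
b0 = a0 + np.array([0.0, 1e-8, 0.0, 0.0])
a_end = integrate(a0, 0.01, 10_000)
b_end = integrate(b0, 0.01, 10_000)
print(f"energy drift: {abs(energy(a_end) - energy(a0)):.2e}")
print(f"separation after t = 100: {np.linalg.norm(a_end - b_end):.2e}")
```

RK4 is not symplectic, so energy drifts slowly over very long runs; checking the drift, or switching to a symplectic integrator, is itself a reasonable numerical experiment.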

Good idea, though scheduling it might be a practical problem, IMO.
