Why Can’t My Computer Do Simple Arithmetic?

The first computer I owned was an Apple IIe computer, with a CPU that ran at slightly over 1 Megahertz (MHz), and with 64 Kilobytes (KB) of RAM, together with two 5 1/4″ floppy drives that could each store 140 Kilobytes (KB) of data.

My current computer (which costs about the same as the Apple computer) is vastly more powerful. It has a CPU that runs each of its four cores at 3.40 Gigahertz (GHz), 10 Gigabytes (GB) of RAM, and two hard drives with a total capacity of 1.5 Terabytes (TB). The increases in CPU clock speed, RAM size, and disk storage represent many orders of magnitude compared to my old Apple.

Even though the computer I have now is light years ahead of my first computer, it cannot do some pretty simple arithmetic, such as adding a few decimal fractions. Or maybe I should say that the usual programming tools aren’t up to this simple task. The following example is a program written in C that adds 1/10 to itself for a total of ten terms. In other words, it carries out this addition: 1/10 + 1/10 + 1/10 + 1/10 + 1/10 + 1/10 + 1/10 + 1/10 + 1/10 + 1/10 (ten terms). You might think that the program should arrive at a final value of 1, but that’s not what happens. In this Insights article, I’ll explain why the sum shown above doesn’t result in 1.

    #include <stdio.h>

    int main()
    {
        float a = .1;
        float b = .25; // Not used, but will be discussed later
        float c = 0.0;

        for (int i = 0; i < 10; i++)
        {
            c += a;
        }

        printf("c = %.14f\n", c);
        return 0;
    }

Here’s a brief explanation of this code, if you’re not familiar with C. Inside the main() function, three variables (a, b, and c) are declared as float (four-byte floating point) numbers and are initialized to the values shown. The for loop runs 10 times, each time adding 0.1 to an accumulator variable c, which starts off initialized to zero. In the first iteration, c is set to 0.1, in the second iteration it is set to 0.2, and so on for ten iterations. The printf function displays some formatted output, including the final value of c.

The C code shown above produces the following output.

c = 1.00000011920929

People who are new to computer programming are usually surprised by this output, which they believe should turn out to be exactly 1. Instead, what we see is a number that is slightly larger than 1, by .00000011920929. The reason for this discrepancy can be explained by understanding how many programming languages store real numbers in a computer’s memory.

How floating-point numbers are stored in memory

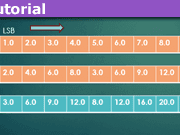

Compiler vendors for most modern programming languages adhere to the IEEE-754 standard for floating-point arithmetic (IEEE floating point). For four-byte floating-point numbers (float in C or C++, REAL or REAL(4) in Fortran), the standard specifies one bit for the sign, eight bits for the exponent, and 23 bits for the mantissa. This representation is similar to scientific notation (for example, as in Avogadro’s Number, ##6.022 × 10^{23}##), except that the base is 2 instead of 10. This means that the decimal (base-10) number 1.5 would be stored in the form of something like ##1.1_2 × 2^0##.

Here the exponent (on 2) is 0, and the mantissa is ##1.1_2##, with the subscript 2 indicating this is a binary fraction, not a decimal fraction. The places to the right of the “binary” point represent the number of halves, fourths, eighths, sixteenths, and so on, so ##1.1_2## means 1 + 1(1/2), or 1.5.

One wrinkle I haven’t mentioned yet is that the exponent is not stored as-is; it is stored “biased by 127.” That is, 127 is added to the actual exponent before it is stored. When you retrieve the stored value, you have to “unbias” it by subtracting 127 to get the actual exponent.

The last point we need to take into account is that, with few exceptions, “binary” fractions are stored in a normalized form, as 1.xxx…, where each x following the “binary” point represents either a 0 bit or a 1 bit. Since these numbers all start with a 1 digit to the left of the “binary” point, the IEEE standard doesn’t bother to store this leading 1 bit.

The IEEE 754 standard for 32-bit floating-point numbers groups the 32 bits as follows, with bits numbered from 31 (most significant bit) down to 0 (least significant bit), left to right. The standard also specifies how 64-bit numbers are stored, as well.

SEEE EEEE EMMM MMMM MMMM MMMM MMMM MMMM

The bits have the following meanings:

- S – bit 31 – sign bit (0 for positive, 1 for negative)

- E – bits 30 through 23 – exponent bits (biased by 127)

- M – bits 22 through 0 – mantissa
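To make the layout concrete, here is a minimal sketch in C that pulls the three fields out of a float. The `FloatFields` struct and the `split_float` function are names made up for illustration, and the code assumes that `float` is a 32-bit IEEE-754 value, which is true on most current platforms but is not guaranteed by the C standard.

```c
#include <stdint.h>
#include <string.h>

/* Hypothetical helper type: the three IEEE-754 single-precision fields. */
typedef struct {
    uint32_t sign;      /* bit 31 */
    uint32_t exponent;  /* bits 30 through 23, still biased by 127 */
    uint32_t mantissa;  /* bits 22 through 0, implied leading 1 not included */
} FloatFields;

/* Split a float into its fields (assumes a 32-bit IEEE-754 float). */
FloatFields split_float(float x)
{
    uint32_t bits;
    FloatFields f;

    memcpy(&bits, &x, sizeof bits);      /* copy the raw bytes of the float */
    f.sign     = bits >> 31;
    f.exponent = (bits >> 23) & 0xFFu;
    f.mantissa = bits & 0x7FFFFFu;
    return f;
}
```

For example, `split_float(1.5f)` yields a sign of 0, a stored exponent of 127 (an actual exponent of 0 after unbiasing), and a mantissa field of 0x400000, whose single set bit is the 1/2 in ##1.1_2##.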

Since there are only 23 bits for the mantissa (or 24 if you count the implied bit for normalization), we’re going to run into trouble in either of these cases:

- The mantissa contains a bit pattern that repeats endlessly.

- The mantissa doesn’t terminate within the available 23 bits.

Some floating-point numbers have exact representations in memory…

If you think back to when you first learned about decimal fractions, you might remember that some fractions have nice decimal forms, such as 1/5 being equal to 0.2 and 3/4 being equal to 0.75. Other fractions have decimal forms that repeat endlessly, such as 1/3 = 0.333… and 5/6 = 0.8333… The same is true for numbers written as binary fractions: some have terminating representations, others have groups of digits that repeat, and still others (the irrational numbers) neither terminate nor repeat.

The program at the beginning of this article had the following variable definition that wasn’t used. My reason for including this variable was to be able to find the internal representation of 0.25.

float b = .25;

It turns out that .25 is one of the floating-point numbers whose representation in memory is exact. If you notice that .25 is ##0 × \frac 1 2 + 1 × \frac 1 4##, then as a binary fraction this would be ##.01_2##. Subscript 2 is to emphasize that this is a base-2 fraction, not a decimal fraction.

In quasi-scientific notation, this would be ##.01 × 2^0##, but this is not in the normalized form with a leading digit of 1. If we move the “binary” point one place to the right, making the mantissa larger by a factor of 2, we have to counter this by decreasing the exponent by 1, to get ##.1 × 2^{-1}##. Moving the binary point one more place to the right gets us this normalized representation: ##1.0 × 2^{-2}##.

This is almost how the number is stored in memory.

When I wrote the program that is presented above, I used the debugger to examine the memory where the value .25 was stored. Here is what I found: 3E 80 00 00 (Note: The four bytes were actually in the reverse order, as 00 00 80 3E.)

The four bytes shown above are in hexadecimal, or base-16. Hexadecimal, or “hex,” is very easy to convert to or from base-2. Each hex digit represents four binary bits. In the number above, ##3 = 0011_2##, ##E = 1110_2##, and ##8 = 1000_2##.

If I rewrite 3E 80 00 00 as a pattern of 32 bits, I get this:

0011 1110 1000 0000 0000 0000 0000 0000

- The leftmost bit is the sign bit, 0 for a positive number, and 1 for a negative number.

- The next 8 bits (0111 1101) are the biased exponent, ##7D_{16}##, or ##125_{10}##. If we unbias this (by subtracting 127), we get -2.

- The remaining 23 bits on the right are the mantissa, all zero bits in this case. Remember that there is an implied 1 digit that isn’t shown, so the mantissa, in this case, is ##1.000 … 0##.

This work shows us that 3E 80 00 00 is the representation in memory of ##+1.0 × 2^{-2}##, which is the same as .25. For both the decimal representation (0.25) and the binary representation (##+1.0 × 2^{-2}##), the mantissa terminates, which means that 0.25 is stored in the exact form in memory.
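If you don’t have a debugger handy, a few lines of C can show the same bit pattern. This is only a sketch: `float_bits` is a hypothetical helper name, and it assumes a 32-bit IEEE-754 float.

```c
#include <stdint.h>
#include <string.h>

/* Return the raw 32-bit pattern of a float (assumes a 32-bit IEEE-754 float). */
uint32_t float_bits(float x)
{
    uint32_t bits;

    memcpy(&bits, &x, sizeof bits);   /* copy the bytes without converting the value */
    return bits;
}
```

Calling `printf("%08X\n", (unsigned)float_bits(0.25f));` (with stdio.h included) should print 3E800000 on such a platform. Because the four bytes are read back as one 32-bit integer, the reversed byte order seen in the debugger doesn’t show up here.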

… But other floating-point numbers have inexact representations

One such value whose representation isn’t exact is 0.1. When I looked for the representation in memory of 0.1, I found this four-byte hexadecimal number:

3D CC CC CD

Here’s the same number, written as a 32-bit bit pattern:

0011 1101 1100 1100 1100 1100 1100 1101

- The leftmost bit is the sign bit, 0 for a positive number, and 1 for a negative number.

- The next 8 bits (0111 1011) are the biased exponent, ##7B_{16}##, or ##123_{10}##. If we unbias this (by subtracting 127), we get -4.

- The remaining 23 bits on the right are the mantissa. Dividing the mantissa into groups of four bits, and tacking an extra 0 bit to the right to make a complete hex digit, the mantissa is 1001 1001 1001 1001 1001 1010, which can be interpreted as the hexadecimal fraction ##1.99999A_{16}##. (The leading 1 digit is implied.) It’s worth noting that the true bit pattern for 0.1 should have a mantissa of 1001 1001 1001 1001 1001 …, repeating endlessly. But because the infinitely long and repeating bit pattern won’t fit in the available 23 bits, there is some rounding that takes place in the last group of digits.

##1.99999A_{16}## means ##1 + \frac 9 {16^1} + \frac 9 {16^2} + \frac 9 {16^3} + \frac 9 {16^4} + \frac 9 {16^5} + \frac {10} {16^6}## = 1.60000002384186 (rounded to 15 significant digits), a result I got using a spreadsheet.

Putting together the sign, exponent, and mantissa, we see that the representation of 0.1 in memory is ##+1.60000002384186 × 2^{-4}##, or 0.100000001490, truncated at the 12th decimal place.
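The same decoding can be done in code rather than in a spreadsheet. The sketch below rebuilds a value from its three fields; `decode_normal_float` is a made-up name, and the function handles only normalized numbers (it ignores zero, denormals, infinities, and NaNs).

```c
#include <stdint.h>

/* Rebuild the value of a normalized IEEE-754 single-precision number
   from its 32-bit pattern. Zero, denormals, infinities, and NaNs are
   not handled. */
double decode_normal_float(uint32_t bits)
{
    double sign     = (bits >> 31) ? -1.0 : 1.0;
    int    exponent = (int)((bits >> 23) & 0xFFu) - 127;   /* unbias the exponent */
    /* implied leading 1, plus the 23 stored bits as a fraction of 2^23 */
    double mantissa = 1.0 + (double)(bits & 0x7FFFFFu) / 8388608.0;
    double scale    = 1.0;

    for (int i = 0; i < exponent; i++) scale *= 2.0;   /* positive exponents */
    for (int i = 0; i > exponent; i--) scale /= 2.0;   /* negative exponents */

    return sign * mantissa * scale;
}
```

With the pattern found above, `decode_normal_float(0x3DCCCCCDu)` returns the stored value, which prints as 0.100000001490116 with `%.15f`, slightly more than 0.1.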

Conclusion

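So… to summarize what went wrong with my program: what I thought I was adding (0.1) and what was actually being added (0.100000001490) weren’t the same number. On top of that, each of the ten additions rounds its result to fit in 24 significant bits, so the error in the final sum (about 0.00000012) is not simply ten times the error in 0.1. Instead of getting a nice round 1 for my answer, what I got was a little larger than 1.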

We can minimize this problem to some extent by using 64-bit floating-point types (by declaring variables of type double in C and C++ and of type DOUBLE PRECISION or REAL(8) in Fortran), but doing so doesn’t eliminate the problem pointed out in this article.
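As a rough check of that claim, here is the same accumulation done with double. The function name `sum_double` is just an illustrative choice, and the behavior described assumes ordinary IEEE-754 double arithmetic.

```c
/* Add `term` to an accumulator `count` times using 64-bit doubles. */
double sum_double(double term, int count)
{
    double total = 0.0;

    for (int i = 0; i < count; i++) {
        total += term;   /* each addition is rounded to 53 significant bits */
    }
    return total;
}
```

On a typical platform, `sum_double(0.1, 10)` comes out slightly less than 1 (it prints as 0.9999999999999999 with `%.16f`), so a test such as `sum_double(0.1, 10) == 1.0` still fails. The error is much smaller than with float, but it is still there.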

Some programming environments, including Python and the .NET Framework, provide a Decimal type that yields correct decimal arithmetic for a broad range of values. Any programmer whose application performs financial calculations that must be precise down to the cents place should be aware of the shortcomings of standard floating-point types, and consider using external libraries or built-in types, if available, that handle decimal numbers correctly.
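In plain C, which for most of its history has had no built-in decimal type, one common workaround is to keep money as a whole number of cents and use integer arithmetic. This is a different technique from a true decimal type, and the function names below are hypothetical, but it shows the idea.

```c
#include <stdio.h>

/* Add `count` copies of an amount expressed in whole cents.
   Integer addition is exact as long as the total doesn't overflow. */
long long sum_cents(long long cents, int count)
{
    long long total = 0;

    for (int i = 0; i < count; i++) {
        total += cents;
    }
    return total;
}

/* Print a non-negative amount of cents as dollars and cents. */
void print_cents(long long cents)
{
    printf("%lld.%02lld\n", cents / 100, cents % 100);
}
```

Ten dimes stored this way, `sum_cents(10, 10)`, total exactly 100 cents, with no rounding anywhere along the way.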

Former college mathematics professor for 19 years where I also taught a variety of programming languages, including Fortran, Modula-2, C, and C++. Former technical writer for 15 years at a large software firm headquartered in Redmond, WA. Current associate faculty at a nearby community college, teaching classes in C++ and computer architecture/assembly language.

I enjoy traipsing around off-trail in Olympic National Park and the North Cascades and elsewhere, as well as riding and tinkering with my four motorcycles.

“Possibly it can, I am trying to figure out just what your question is about. If it is a PC( maybe a MAC also) then click the START button in the lower left, then ALL PROGRAMS, next ACCESSORIES and there should be a “Calculator” choice. Within it a choice probably called VIEW will show several different models of hand calculators that you click thru to run.”

You missed the point of my article, which is this — if you write a program in one of many programming languages (such as C or its derivative languages, or Fortran, or whatever) to do some simple arithmetic, you are likely to get an answer that is a little off. It doesn’t matter whether you use a PC or a Mac. The article discusses why this happens, based on the way that floating point numbers are stored in the computer’s memory.

Possibly it can, I am trying to figure out just what your question is about. If it is a PC( maybe a MAC also) then click the START button in the lower left, then ALL PROGRAMS, next ACCESSORIES and there should be a “Calculator” choice. Within it a choice probably called VIEW will show several different models of hand calculators that you click thru to run.

Early computers used BCD (binary coded decimal), including the first digital computer, the Eniac. IBM 1400 series were also decimal based. IBM mainframes since the 360 include support for variable length fixed point BCD, and variable length BCD (packed or unpacked) is a native type in COBOL. Intel processors have just enough support for BCD instructions to allow programs to work with variable length BCD fields (the software would have to take care of fixed point issues).

As for binary floating point compares, APL (A Programming Language), created in the 1960’s, has a program adjustable “fuzz” variable used to set the compare tolerance for floating point “equal”.

There are fractional libraries that represent rational numbers as integer fractions, maintaining separate values for the numerator and denominator. Some calculators include fractional support. Finding common divisors to reduce the fractions after an operation is probably done using Euclid’s algorithm, so this type of math is significantly slower.
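As a rough illustration of the rational-number approach, here is a minimal sketch in C. The `Rational` type and the function names are invented for this example and are not taken from any particular library; there is no overflow checking, and denominators are assumed to be positive.

```c
/* Hypothetical rational-number type: num/den with den > 0. */
typedef struct {
    long long num;
    long long den;
} Rational;

/* Greatest common divisor by Euclid's algorithm (result is non-negative). */
static long long gcd_ll(long long a, long long b)
{
    while (b != 0) {
        long long t = a % b;
        a = b;
        b = t;
    }
    return a < 0 ? -a : a;
}

/* Add two rationals and reduce the result to lowest terms. */
Rational rational_add(Rational x, Rational y)
{
    Rational r;
    long long g;

    r.num = x.num * y.den + y.num * x.den;
    r.den = x.den * y.den;
    g = gcd_ll(r.num, r.den);
    if (g > 1) {
        r.num /= g;
        r.den /= g;
    }
    return r;
}
```

Adding the rational 1/10 to a running total ten times with `rational_add` ends at exactly 1/1, at the cost of a gcd computation on every addition.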

“I assume the symbols you enter e.g “Sum[…]” is manipulated by Wolfram which will call its already implemented methods done in C/C++ to compute the “sum” as requested. The result will be sent back to Wolfram to be displayed as an output.”

That wouldn’t necessarily imply that the C/C++ code is using primitive floating point types. I don’t know about Wolfram, but I think a good Math package would allow you to choose.

“Mathematica is an implementation of the Wolfram Language. It’s not written in the language it implements.

Depending on how you enter an expression, Mathematica may use machine representation of numbers. For example, if you enter Sum[0.1, {i, 1, 10}] - 1 into Mathematica, you’ll get ##-1.11022 × 10^{-16}##, but if you enter the sum the way Mark did, Sum[1/10, {i, 1, 10}] - 1, the result will be 0.”

I assume the symbols you enter e.g “Sum[…]” is manipulated by Wolfram which will call its already implemented methods done in C/C++ to compute the “sum” as requested. The result will be sent back to Wolfram to be displayed as an output.

“Mathematica is written in [URL=’https://en.wikipedia.org/wiki/Wolfram_Language’]Wolfram Language[/URL], C/C++ and Java.”

Mathematica is an implementation of the Wolfram Language. It’s not written in the language it implements.

Depending on how you enter an expression, Mathematica may use machine representation of numbers. For example, if you enter [b]Sum[0.1, {i, 1, 10}] - 1[/b] into Mathematica, you’ll get ##-1.11022 × 10^{-16}##, but if you enter the sum the way Mark did, [b]Sum[1/10, {i, 1, 10}] - 1[/b], the result will be 0.

“Until now, nothing I could find in the C and C++ standard libraries can do correct computation with floats. I don’t understand why the committee have done nothing about this even though there are already external libraries to do this, some of which are for free online.”

What, exactly, do you mean by “correct computation with floats”?

Strictly speaking, no computer can ever do correct computations with the reals. The number of things a Turing machine (which has infinite memory) can do is limited by various theories of computation. On the other hand, [URL=’https://en.wikipedia.org/wiki/Almost_all’]almost all[/URL] of the real numbers are not computable. The best that can be done with a Turing machine is to represent the computable numbers. The best that can be done with a realistic computer (which has finite memory and a finite amount of time in which to perform computations) is to represent a finite subset of the computable numbers.

As for why the C and C++ committees haven’t made arbitrary-precision arithmetic part of the standard,

YAGNI: You Ain’t Gonna Need It.

“Mathematica does rational arithmetic, so it gets it exact: Sum[1/10,{i,10} ] = 1

;-)”

Mathematica is written in [URL=’https://en.wikipedia.org/wiki/Wolfram_Language’]Wolfram Language[/URL], C/C++ and Java.

As the link states, Wolfram only deals with [URL=’https://en.wikipedia.org/wiki/Symbolic_computation’]symbolic computation[/URL], [URL=’https://en.wikipedia.org/wiki/Functional_programming’]functional programming[/URL], and [URL=’https://en.wikipedia.org/wiki/Rule-based_programming’]rule-based programming[/URL]. So arithmetic and other computational math problem solvers are computed and handled in C or C++, whose main aim is also to boost the software performance.

[php]float a = 1.0 / 10;
float sum = 0;
for (int i = 0; i < 10; i++) {
    sum += a;
}
std::cout << sum << std::endl; // Output 1[/php]

The simplest example of a malfunction I can think of is 1/3+1/3+1/3=0.99999999. The main thing I remember from way back when (in my early teens) was that my computer had 8 decimal digits similar to a calculator, and the exponential 10 times for the number of decimal places followed by “E”. The oddity that sticks out is that I remember trying to comprehend a 5 byte scheme that it used.

But regardless you have to compromise in some direction giving up speed in favor of accuracy or does the library system “skim” through easier values to increase efficiency? (I mean the newest system(s)) I’m guessing there are different implementations of the newest standards? I’m almost clueless how it works!

Another is sine tables, had to devise my own system to increase precision there, too! (nevermind found this:)

“The standard also includes extensive recommendations for advanced exception handling, additional operations (such as [URL=’https://en.wikipedia.org/wiki/Trigonometric_functions’]trigonometric functions[/URL]), expression evaluation, and for achieving reproducible results.”

from here: [URL]https://en.wikipedia.org/wiki/IEEE_floating_point[/URL]

“It’s not a problem with the standard, it’s a limitation of how accurate you can represent arbitrary floating point numbers (no matter the representation). I believe that the common implementations can exactly represent powers of 2 correct?”

If the fractional part is the sum of negative powers of 2, and the smallest power of 2 can be represented in the mantissa, then yes, the representation is exact. So, for example, 7/8 = 1/2 + 1/4 + 1/8 is represented exactly.
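One way to test this for a particular value is to round it to float and see whether anything was lost. The sketch below uses a hypothetical helper, `exact_in_float`, and it can only judge the double value it is handed, so a literal such as 0.1 has already been rounded once before the test runs.

```c
/* Return 1 if x survives a round trip through float unchanged,
   meaning its value is exactly representable in a 32-bit float. */
int exact_in_float(double x)
{
    float narrowed = (float)x;       /* rounds to the nearest float */

    return (double)narrowed == x;    /* widening back to double is exact */
}
```

Under these assumptions, `exact_in_float(7.0 / 8.0)` and `exact_in_float(0.25)` are true, while `exact_in_float(0.1)` is false.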

“Maybe it’s time to review/modify/improve the IEEE-754 standard for floating-point arithmetic (IEEE floating point).”

It’s not a problem with the standard, it’s a limitation of how accurate you can represent arbitrary floating point numbers (no matter the representation). I believe that the common implementations can exactly represent powers of 2 correct?

One application where I used this is when using additive blending through the GPU pipeline to compute a reduction. Integers were not supported in OpenGL for this, so I instead chose a very small power of 2, and relied on the fact that it and its multiples could be exactly represented using the IEEE floating point specification, allowing me to effectively do an exact summation/reduction purely through GPU hardware.

“There was a joke that Intel changed the name to Pentium because they used the first Pentium to add 100 to 486 and got the answer 585.9963427…”

That cracked me up!

“The Pentium would have been 80586, but I guess the marketing people got involved and changed to Pentium. If it was the first model, that was the one that had the division problem where some divisions gave an incorrect answer out in the 6th or so decimal place. It cost Intel about $1 billion to recall and replace those bad chips. I think that was in ’94, not sure.”

There was a joke that Intel changed the name to Pentium because they used the first Pentium to add 100 to 486 and got the answer 585.9963427…

“Remember the Pentium FDIV bug?”

I definitely do! I wrote a small x86 assembly program that checked your CPU to see if it was a Pentium, and, if so, used the FDIV instruction to do one of the division operations that was broken. It then compared the computed result against the correct answer to see if they were the same.

I sent it to Jeff Duntemann, Editor in Chief of PC Techniques magazine. He published it in the Feb/Mar 1995 issue. I thought he would publish it as a small article (and send me some $). Instead he published in their HAX page, as if it had been just some random code that someone had sent in. That wouldn’t have been so bad, but he put a big blurb on the cover of that issue, “Detect Faulty Pentiums! Simple ASM Test”

“Remember the Pentium FDIV bug?”

That was half my life ago! No I don’t remember it specifically… nor do I remember using anything that might have had it, once windows 95 came out I went through several older computers before buying a new dell 10 years ago (P4 2.4ghz), and haven’t programmed much since…

This is from code by Doug Gwyn mostly. FP compare is a PITA because you cannot do a bitwise compare like you do with int datatypes.

You also cannot do this reliably either:

[code]
if (float_datatype_variable == double_datatype_variable)
[/code]

where you can otherwise test equality for most int datatypes: char, int, long with each other.

[code]
#include <errno.h>
#include <float.h>
#include <limits.h>
#include <math.h>
#include <stdio.h>

// compile with gcc -std=c99 for fpclassify

// Filter out bad numbers and correct for inexact values.
// Returns INT_MIN (the error return, with errno set) for infinities and NaNs,
// 0 for zero and subnormal values, and 2 for normal values.
static inline int classify(double x)
{
    switch (fpclassify(x))
    {
    case FP_INFINITE:
        errno = ERANGE;
        return INT_MIN;
    case FP_NAN:
        errno = EINVAL;
        return INT_MIN;
    case FP_NORMAL:
        return 2;
    case FP_SUBNORMAL:
    case FP_ZERO:
        return 0;
    }
    return FP_NORMAL; // default
}

// doug gwyn's reldif function
// usage: if(reldif(a, b) <= TOLERANCE) ...
#define Abs(x) ((x) < 0 ? -(x) : (x))
#define Max(a, b) ((a) > (b) ? (a) : (b))

double maxulp = 0; // tweak this into TOLERANCE

static inline double reldif(double a, double b)
{
    double c = Abs(a);
    double d = Abs(b);
    int rc = 0;

    d = Max(c, d);
    d = (d == 0.0) ? 0.0 : Abs(a - b) / d;
    rc = classify(d);
    if (!rc) // correct almost zeroes to zero
        d = 0.;
    if (rc == INT_MIN)
    { // error return
        errno = ERANGE;
        d = DBL_MAX;
        perror("Error comparing values");
    }
    return d;
}
// usage: if(reldif(a, b) <= TOLERANCE) ...
[/code]

“As an addendum – somebody may want to consider how to compare as equal 2 floating point numbers. Not just FLT_EPSILON or DBL_EPSILON which are nothing like a general solution.”

This isn’t anything I’ve ever given a lot of thought to, but it wouldn’t be difficult to compare two floating point numbers [U]in[/U] [U]memory[/U] (32 bits, 64 bits, or more), as their bit patterns would be identical if they were equal. I’m not including unusual cases like NAN, denormals, and such. The trick is in the step going from a literal such as 0.1, to its representation as a bit pattern.
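A bitwise comparison of that kind might look like the sketch below. The helper name `same_bits` is made up for illustration, and the code simply compares the bytes of the two values as they sit in memory.

```c
#include <string.h>

/* Return 1 if the two floats have identical bytes in memory. */
int same_bits(float a, float b)
{
    return memcmp(&a, &b, sizeof a) == 0;
}
```

One of the unusual cases shows up right away: on IEEE-754 hardware, 0.0f and -0.0f compare equal with `==` but differ in the sign bit, so `same_bits(0.0f, -0.0f)` reports them as different.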

As a guess, how about ROI? The Fujitsu Sparc64 M10 (has 16 cores), according to my friendly local Oracle peddler, supports it by writing the library onto the chip itself. Anyway one chip costs more than you and I make in a month, so it definitely is not a commodity chip. The z9 cpu like the ones in Power PC’s supports decimal, too.

So in a sense some companies “decimalized” in hardware. Other companies saw it as a way to lose money – my opinion. BTW this problem has been around forever. Hence other formats: Oracle internal math is BCD with 32? decimals, MicroFocus COBOL is packed decimals with decimals = 18 per their website

[URL]http://supportline.microfocus.com/documentation/books/sx20books/prlimi.htm[/URL]

Until now, nothing I could find in the C and C++ standard libraries can do correct computation with floats. I don’t understand why the committee have done nothing about this even though there are already external libraries to do this, some of which are for free online.

I also don’t know why Microsoft only includes part of their solution in a Decimal class (System.Decimal) that can be used for only 28-29 significant digits as the number’s precision even though I believe they can extend it to 1 zillion digits (can a string length be that long to verify its output accuracy anyway :biggrin: ?).

“Thanks [USER=301881]@SteamKing[/USER] I type with my elbows, it is slow but really inaccurate.”

So, a compromise then? What you lack in accuracy, you make up for in speed. :wink:

Thanks [USER=301881]@SteamKing[/USER] I type with my elbows, it is slow but really inaccurate.

“[USER=147785]@Mark44[/USER] [URL]https://software.intel.com/en-us/articles/intel-decimal-floating-point-math-library[/URL] claims IEEE-755-2008 support”

I think you mean IEEE 754 – 2008 support.

Computers can do extended precision arithmetic, they just need to be programmed to do so. How else are we calculating π to a zillion digits, or finding new largest primes all the time? Even your trusty calculator doesn’t rely on “standard” data types to do its internal calculations.

Early HP calculators, for example, used 56-bit wide registers in the CPU with special decimal encoding to represent numbers internally (later calculator CPUs from HP expanded to 64-bit wide registers), giving about a 10-digit mantissa and a two-digit exponent:

[URL]http://www.hpmuseum.org/techcpu.htm[/URL]

The “standard” data types (4-byte or 8-byte floating point) are a compromise so that computers can crunch lots of decimal numbers relatively quickly without too much loss of precision doing so. In situations where more precision is required, like calculating the zillionth + 1 digit of π, different methods and programming altogether are used.

“[USER=147785]@Mark44[/USER] [URL]https://software.intel.com/en-us/articles/intel-decimal-floating-point-math-library[/URL] claims IEEE-755-2008 support”

Thanks, jim, I’ll take a look at this.

[USER=528145]@jerromyjon[/USER] William Kahan consulted and John Palmer et al. at Intel worked to create the 8087 math coprocessor, which was released in 1979. You most likely would have had to have an IBM motherboard with a special socket for the 8087. This was, AFAIK, the first commodity FP math processor. It was the basis for the original IEEE-754-1985 standard and the IEEE Standard for Radix-Independent Floating-Point Arithmetic (IEEE-854-1987).

The 80486, a later Intel x86 processor, marked the start of these CPUs having an integrated math coprocessor. Still have ’em in there. Remember the Pentium FDIV bug? [URL]https://en.wikipedia.org/wiki/Pentium_FDIV_bug[/URL]

As an addendum – somebody may want to consider how to compare as equal 2 floating point numbers. Not just FLT_EPSILON or DBL_EPSILON which are nothing like a general solution.

[USER=147785]@Mark44[/USER] [URL]https://software.intel.com/en-us/articles/intel-decimal-floating-point-math-library[/URL] claims IEEE-754-2008 support

Edit 1/30/16

“The Pentium would have been 80586, but I guess the marketing people got involved and changed to Pentium.”

Found this on wikipedia: “The i486 does not have the usual 80-prefix because of a court ruling that prohibits trademarking numbers (such as 80486). Later, with the introduction of the [URL=’https://en.wikipedia.org/wiki/Pentium_(brand)’]Pentium brand[/URL], Intel began branding its chips with words rather than numbers.”

“I think that was in ’94, not sure.”

I think that was about the same time, I think it was a pentium 1, 25 Mhz intel chip with no math co-pro but it had a spot on the board for it. Perhaps it was just a 80486… can’t even remember the computer model!

“It was a pentium whatever number I forget but I was only programming up to the 386 instruction set at that time…”

The Pentium would have been 80586, but I guess the marketing people got involved and changed to Pentium. If it was the first model, that was the one that had the division problem where some divisions gave an incorrect answer out in the 6th or so decimal place. It cost Intel about $1 billion to recall and replace those bad chips. I think that was in ’94, not sure.

“I’m 99% sure it didn’t have hardware support for floating point operations.”

That’s what I thought at first 68B09E was the coco3 was the last one now that I remember, second guessed myself.

“The 80386 didn’t have any hardware floating point instructions.”

It was a pentium whatever number I forget but I was only programming up to the 386 instruction set at that time… I printed out the instruction set on that same old fanfold paper stack and just started coding.

“I certainly remember the days when you’d expect an integer and get “12.00000001”. I think Algodoo still has that “glitch”! I can’t even remember if my first computer had floating point operations in the CPU, it was a Tandy (radio shack) color computer 2 64kb 8086 1 Mhz processor.”

I don’t think it had an Intel 8086 cpu. According to this wiki article, [URL]https://en.wikipedia.org/wiki/TRS-80_Color_Computer#Color_Computer_2_.281983.E2.80.931986.29[/URL], the Coco 2 had a Motorola MC6809 processor. I’m 99% sure it didn’t have hardware support for floating point operations.

“When I graduated to 80386 the floating point wasn’t precise enough so I devised a method to use 2 bytes for the interger (+/- 32,767) and 2 bytes for the fractions (1/65,536ths). It was limited in flexibility but exact and quite fast!”

You must have had the optional Intel 80387 math processing unit or one of its competitors (Cyrix and another I can’t remember). The 80386 didn’t have any hardware floating point instructions.

“It was when personal computers started to really get big, and compilers could compile and run your program in about a minute or so, that I could see there was something to this programming thing.”

My first computer was so slow I would crunch the code for routines in machine code in my head and just type a data string of values to poke into memory through basic because even the compiler was slow and buggy… its the object oriented programming platforms that was when I really saw the “next level something” for programming.

I certainly remember the days when you’d expect an integer and get “12.00000001”. I think Algodoo still has that “glitch”! I can’t even remember if my first computer had floating point operations in the CPU, it was a Tandy (radio shack) color computer 2 64kb 8086 1 Mhz processor. When I graduated to 80386 the floating point wasn’t precise enough so I devised a method to use 2 bytes for the interger (+/- 32,767) and 2 bytes for the fractions (1/65,536ths). It was limited in flexibility but exact and quite fast!

“Good Insight. It brings back memories of the good old days (bad old days?) when we had no printers, screens or keyboards. I had to learn to read and enter 24 bit floating point numbers in binary, using 24 little lights and 24 buttons. It was made easier by the fact that almost all the numbers were close to 1.0.”

The first programming class I took was in 1972, using a language named PL-C. I think the ‘C’ meant it was a compact subset of PL-1. Anyway, you wrote your program and then used a keypunch machine to punch holes in a Hollerith (AKA ‘IBM’) card for each line of code, and added a few extra cards for the job control language (JCL). Then you would drop your card deck in a box, and one of the computer techs would eventually put all the card decks into a card reader to be transcribed onto a tape that would then be mounted on the IBM mainframe computer. Turnaround was usually a day, and most of my programs came back (on 17″ wide fanfold paper) with several pages of what to me was gibberish, a core dump, as my program didn’t work right. With all this rigmarole, programming didn’t hold much interest for me.

Even as late as 1980, when I took a class in Fortran at the Univ. of Washington, we were still using keypunch machines. It was when personal computers started to really get big, and compilers could compile and run your program in about a minute or so, that I could see there was something to this programming thing.

“Great post, Mark. I was thinking of doing this very thing. Saved me from making a noodle of myself…. Thanks for that. FWIW decimal libraries from Intel correctly do floating point decimal arithmetic. The z9 power PC also has a chip that supports IEEE-754-2008 (decimal floating point) as does the new Fujitsu SPARC64 M10 with “software on a chip”. Cool idea.

Cool post, too.”

Thanks, Jim, glad you enjoyed it. Do you have any links to the Intel libraries, or a name? I’d like to look into that more.

“Good insight !

Quickly fix [URL=’https://en.wikipedia.org/wiki/Avogadro_constant’]Avogadro[/URL] !”

##6.022 × 10^{23}## right? I fixed it.

Good insight !

Quickly fix [URL=’https://en.wikipedia.org/wiki/Avogadro_constant’]Avogadro[/URL] !

Mathematica does rational arithmetic, so it gets it exact: Sum[1/10,{i,10} ] = 1 ;-)

Maybe it's time to review/modify/improve the IEEE-754 standard for floating-point arithmetic (IEEE floating point).

What C will do with that is do the computations as doubles but store the result in a float. Floats save no time. They are an old-fashioned thing in there to save space. Numerical analysis programmers learn to be experts in dealing with floating point roundoff error. Hey, you can't expect a computer to store an infinite series, which is the definition of a real number. There are packages that do calculations with rational numbers, but these are too slow for number crunching.

You may find this very helpful: https://docs.oracle.com/cd/E19957-01/806-3568/ncg_goldberg.html

Great post, Mark. I was thinking of doing this very thing. Saved me from making a noodle of myself…. Thanks for that. FWIW decimal libraries from Intel correctly do floating point decimal arithmetic. The z9 power PC also has a chip that supports IEEE-754-2008 (decimal floating point) as does the new Fujitsu SPARC64 M10 with "software on a chip". Cool idea. Cool post, too.

Good Insight. It brings back memories of the good old days (bad old days?) when we had no printers, screens or keyboards. I had to learn to read and enter 24 bit floating point numbers in binary, using 24 little lights and 24 buttons. It was made easier by the fact that almost all the numbers were close to 1.0.

Great!! Just learnt something new today. Thanks for sharing!!

The same problem happens in decimal, for example if you used 1/3 instead. You could use rational numbers instead, but this is why you should not do if (a == 1.0): a may be pretty close to 1.0 but not actually 1. So you need to do if (abs(a - 1.0) < 0.00001). I wish there was an approximately equals function built in. The other problem with floats is that once you get above 16 million you can only store even values, then multiples of 4 past 32 million, etc.
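Both points can be sketched in a few lines of C. The names `nearly_equal` and `int_to_float` are hypothetical, the tolerance is an absolute one that the caller has to choose to suit the size of the numbers involved, and the second function assumes a 32-bit IEEE-754 float.

```c
/* Return 1 if a and b differ by less than `tolerance` (an absolute tolerance). */
int nearly_equal(double a, double b, double tolerance)
{
    double diff = a - b;

    if (diff < 0.0) {
        diff = -diff;
    }
    return diff < tolerance;
}

/* Convert an integer to float. Above 2^24 = 16,777,216 a float's
   24-bit mantissa can no longer hold every integer, so odd values
   are rounded to a neighboring even value. */
float int_to_float(long n)
{
    return (float)n;
}
```

For instance, `nearly_equal(0.1 + 0.2, 0.3, 0.00001)` is true even though `0.1 + 0.2 == 0.3` is false in double arithmetic, and `int_to_float(16777217L)` comes back as 16777216.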

I learned a lot with this Insight!