When Simple Geometry Unveils Deep Math

Table of Contents

Introduction

It is a remarkable fact that consideration of very elementary concepts in geometry often leads quickly into deep and unexpected mathematical terrain. In high school and even college, such complexities are usually treated cursorily or omitted altogether, in order to avoid bogging down coverage of the essentials. In this article, we delve, at least a bit, into some of the fascinating areas inextricably linked to even the simplest geometric figures.

The Number Line and Infinity

Consider the (non-negative) number line, familiar from grade school and used in high school analytic geometry:

Certainly, it includes the whole numbers, also known as “integers,” as shown above. When you think about it, that already puts us in the deep territory, because there is an infinite number of integers.

Furthermore, we know that there are numbers on this line, fractions, between the integers. In fact, if n is an integer, the sequence of fractions ##n+\frac 1 2,~n+\frac 1 3,~n+\frac 1 4,~n+\frac 1 5,…## shows that there are an infinite number of fractions between any two integers and that there are fractions arbitrarily close to any integer. These facts rather puzzled the ancient Greeks (see, for example, Zeno’s Dichotomy Paradox.)

In general, a fraction is any ratio ##\frac n m##, where n and m are integers. Fractions are thus more formally called “rational” numbers. (The integers are included as rational numbers since m can be 1.) It is easy to show that between any two rational numbers, no matter how close, there is an infinite number of other rational numbers. Pretty amazing to think about!

The Square and Irrationals

More weirdness arises as soon as we consider a very simple geometric figure, the square. Suppose a square’s sides each have length 1. What is the length of the square’s diagonal, that is, a line connecting its opposite corners? A simple computation using that old friend from high school, the Pythagorean Theorem, shows that the diagonal has length ##\sqrt 2## (i.e., the “square root of 2,” the number which when multiplied by itself gives 2.)

##\sqrt 2## is clearly somewhere between 1 and 2 on the number line. However, one can prove that ##\sqrt 2## is not a rational number! (Actually, there are many easy proofs of this fact; see this Physics Forums tutorial.) So what kind of bizarre number is it? The ancients were aware of this mystery, as well, and it really freaked them out! (The legend of Hippasus illustrates their reaction.)

There are actually many numbers that are not rational. Not surprisingly, they are known as “irrational” numbers. In fact, it turns out that between any two numbers (rational or irrational) on the number line, no matter how close, there are an infinite number of irrational numbers! Yes, there’s room for those, too!

Irrationals are perfectly bona fide numbers; however, we can’t write out their values in any simple way. Instead, we must specify them as the limit of an infinite sequence of rational numbers, usually as the “value” of an infinite rational sum (or “series.”) For example, according to Wikipedia’s article on the square root of 2, one such sum is:$$\sqrt 2\,={\frac {1}{2}}+{\frac {3}{8}}+{\frac {15}{64}}+{\frac {35}{256}}+{\frac {315}{4096}}+{\frac {693}{16384}}+\cdots$$However, we normally express an irrational number as a particular kind of infinite rational sum, i.e., the familiar decimal expansion. For example,$$\begin{align} \nonumber\sqrt 2 & = 1+\frac 4 {10}+\frac 1 {100}+\frac 4 {1000}+\frac 2 {10000}+\frac 1 {100000}+\frac 3 {1000000}+\frac 5 {10000000}+\cdots \\\nonumber\\\nonumber & = 1.4142135… \end{align}$$(Rational numbers can also be written this way. For instance, ##\frac {29} {11} = 2.63636363…## However, rational decimals always end in a repeating pattern of digits, which is never true of irrationals.)

Of course, although such representations are infinite, we can obtain the value to any desired precision by simply carrying out the expansion far enough. To put it another way, we approximate an irrational number with a rational number that is close to it. That ratio can be as close to the irrational as we like, assuming we are willing to expend the effort to calculate it, but (by definition!) we can never get all the way to the irrational, itself.

Nowadays, because numbers like ##\sqrt 2## are introduced early in secondary school and we’re used to computing their (approximate) values easily with digital devices, we may not stop to think how strange and intriguing irrational numbers truly are.

The Circle and Transcendentals

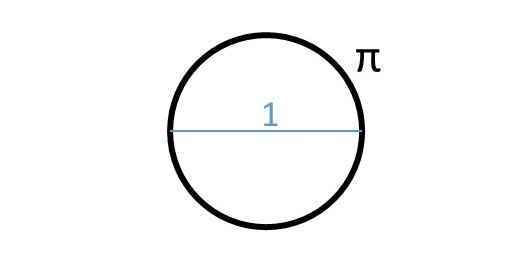

Returning to geometry, let’s look at the simplest figure of all: a circle. If its diameter has a length 1, what is its circumference? Of course, it’s that famous number we call ##\pi## (pi), whose decimal expansion begins ##3.14159…## Perhaps it comes as no surprise that ##\pi## is an irrational number. But remarkably, this fact was not proved until the 18th century!

Obviously, all rational numbers are algebraic. Until the 19th century, it was an open question whether or not all irrational numbers are algebraic, as well. Eventually, it was proved that non-algebraic numbers do exist. Such numbers are called “transcendental,” and they are extremely hard to find. In 1882, it was proved that ##\pi## is transcendental! As it happens, that other famous number known as “e” was found to be transcendental slightly earlier, in 1873.

To this day, it is not known whether such simple combinations as ##\pi+e## or ##\pi\cdot{e}## are transcendental — or even whether they are irrational! Yet, incredibly, it was established in the 1870’s that there are actually more transcendental numbers than algebraic numbers on the number line!

The Ellipse and — a Surprise!

Although ##\pi## is a strange number, at least the geometric formulas in which it appears are often quite simple. For example, consider a circle with radius r:

You may recall that the circle’s area is ##\pi{r^2}## and its circumference is ##2\pi{r}##.

What about an ellipse? (Click here for definition.) Below we picture an ellipse with semi-axes ##r_1## and ##r_2## (sort of the equivalent of radii):

It is well-known that the ellipse has area ##\pi{r_1}{r_2}##, a formula analogous to the circle’s. So one might suppose that the ellipse’s circumference would be (averaging the “radii”): ##2\pi\frac {r_1+r_2} 2 = \pi(r_1+r_2)##. Unfortunately, it turns out this is not the case.

The astonishing truth is that there is no formula, at all, for the circumference of an ellipse! At least, not an ordinary (“closed form”) one. Nevertheless, various expressions for the value have been devised that involve an infinite series. Here is one example: $$\pi (r_1+r_2)\left[1+\sum _{n=1}^{\infty }\left({\frac {(2n-3)!!}{2^{n}n!}}\right)^{2}\left(\frac {r_1-r_2} {r_1+r_2}\right)^{2n}\right]$$So much for simple geometry!

Using the language of calculus, a slightly more elegant way of writing the circumference of an ellipse is: $$4{r_1}\int _{0}^{\pi /2}{\sqrt {1-\left(1-{\frac {{r_2}^2} {{r_1}^2}}\right)\sin ^{2}\theta }}\,\,\ d\theta$$However, this integral cannot be directly evaluated. In fact, the study of such “elliptic integrals,” which began in the 18th century, eventually led to whole new areas of mathematics, such as “elliptic functions” and “elliptic curves.” These are active and fruitful subjects of research even today, although they no longer have much to do with actual ellipses. But at this point, I’m getting way out of my depth…!

B.S. in Mathematics, M.S. in Mathematics, M.S. in Computer Science.

I enjoy mathematics, physics, astronomy, and natural history. I was thrilled to discover Physics Forums, a place where I can learn more about these subjects and share my enthusiasm with other like-minded people around the world!

Is there a proof that the perimeter of an ellipse cannot be written in terms of elementary functions or is it that nobody has found the solution and it is an open problem?Great question! Unfortunately, I don't know the answer. Every reference I can find simply asserts that there is no elementary formula without giving any justification. If someone can comment with insight into this question, I would definitely be interested to hear it.

Thanks for this interesting remark. My personal bias, for which I have no evidence, is that the "correct" math is continuous, although we use it to model a universe that is likely discrete.Is there a proof that intergral of perimeter of an ellipse is can't not be written in terms of elementary functions or is it that nobody has found the solutions and it is a open problem ?

Thanks for this interesting remark. My personal bias, for which I have no evidence, is that the "correct" math is continuous, although we use it to model a universe that is likely discrete.Is there a proof that intergral of perimeter of an ellipse is can't not be written in terms of elementary functions or it is that nobody has found the solutions and it is a open problem ?

[QUOTE="Svein, post: 5691165, member: 538805"]Big fleas have little fleas,Upon their backs to bite 'em,And little fleas have lesser fleas,and so, ad infinitum.“Since humor is allowed, here is one of Bertrand Russell's favorites from his childhood. What is mind? No matter.What is matter? Never mind.

[QUOTE="trilobite, post: 5691117, member: 594722"]Thanks for this interesting remark. My personal bias, for which I have no evidence, is that the "correct" math is continuous, although we use it to model a universe that is likely discrete.”Big fleas have little fleas,Upon their backs to bite 'em,And little fleas have lesser fleas,and so, ad infinitum.

[QUOTE="David Reeves, post: 5686458, member: 608525"]But in the back of my mind is the digital physics of Zuse, in which the most correct math is discrete, because our universe is a process carried out on some kind of cosmic grid. But as Zuse pointed out that raises its own problems, including a clash with relativity theory.”Thanks for this interesting remark. My personal bias, for which I have no evidence, is that the "correct" math is continuous, although we use it to model a universe that is likely discrete.

[QUOTE="David Reeves, post: 5690059, member: 608525"]It's even easy to construct an ellipse, using two pins and a string. Then I can take another string and make it follow the curve of the ellipse. I can measure the circumference by straightening out the string and using a ruler. So why is the math difficult?”That's an excellent question, but I don't have an answer. It's one thing to live in a physical universe that is hard to understand, but as my article points out, "mysteries" lurk even in the ideal world of rudimentary geometry (squares, circles, ellipses!) It would be "nice" if the square root of 2 and pi were good old rational numbers. (Nice, but maybe boring!)

[QUOTE="David Reeves, post: 5686458, member: 608525"]I enjoyed this article very much. I would like to give it 5 stars. But when I try to do so, I am asked to "login in" even though I am already logged in. Do I lack the necessary membership level?”That used to happen to me, too (not that long ago.) For some reason, the feature works for me now. I don't think it has to do with membership level, but I have no idea why this happens.

[QUOTE="David Reeves, post: 5686458, member: 608525"]You are so right when you mention how our school math class glosses over certain things. Your example of the ellipse is very good. It seemed so simple when I learned the formula. It's even easy to construct an ellipse, using two pins and a string. I can measure the circumference by straightening out the string and using a ruler. So why is the math difficult?”Sorry, I left out an importance sentence, which I was thinking but failed to write down, so my reply makes no sense. This should read:It's even easy to construct an ellipse, using two pins and a string. Then I can take another string and make it follow the curve of the ellipse. I can measure the circumference by straightening out the string and using a ruler. So why is the math difficult?

I enjoyed this article very much. I would like to give it 5 stars. But when I try to do so, I am asked to "login in" even though I am already logged in. Do I lack the necessary membership level? You are so right when you mention how our school math class glosses over certain things. Your example of the ellipse is very good. It seemed so simple when I learned the formula. It's even easy to construct an ellipse, using two pins and a string. I can measure the circumference by straightening out the string and using a ruler. So why is the math difficult?As I understand math history, the whole point of the ratio is that we can divide any length exactly into a given number of unit lengths. That is what we mean by measurement. We should be able to find a unit length which can be used to divide both the diameter and the circumference. My solution is to follow the rules and come up with the "correct" answer. But in the back of my mind is the digital physics of Zuse, in which the most correct math is discrete, because our universe is a process carried out on some kind of cosmic grid. Meanwhile, I just rely on the trusty old equation for the ellipse, just as I learned it in school.

No I haven't. Will read through those chapters some time.

[QUOTE="SSequence, post: 5684959, member: 601088"]Well I am aware of it and I have sort of flipped through it (reading a few paragraphs/pages here and there).”I do not think that is enough. Have you seen the chapter where he introduces the "programming languages" FLooP, BLooP and GLooP?

Well I am aware of it and I have sort of flipped through it (reading a few paragraphs/pages here and there).It seems to go into fair detail about what creativity could mean from a psychological perspective (a terms which I am using in a very broad sense including several categories — but would go off topic to get into detail). I certainly like reading about that sort of stuff but certainly that isn't my main interest. My main interest is the mechanical perspective.It just so happens that psychological perspective (using the word "psychological" in a slightly loose sense) alsois the one that relates directly to variety of practical applications while the mechanical perspective comes closer to maths (seemingly without much application). Anyway this is perhaps somewhat off topic.——–One point that is somewhat obvious (but could be mentioned) is that wikipedia mentions that all "algebraic numbers are computable". I am assuming (someone correct this if the interpretation is wrong) that this can be interpreted as "all real algebraic numbers have a computable decimal representation". Taking that previous point to be true, the original post mentions that it isn't known that pi.e or pi+e are transcendental or irrational. Now if someone showed that the decimal representation of these numbers isn't computable then it would follow that answer to former questions is yes.

[QUOTE="SSequence, post: 5681833, member: 601088"]First define any partial recursive/computable function f:N→N (where N={0,1,2,3,….}) as whatever a simple C-like program (to use a concrete example) of the following type could calculate:int f (int x) {// insert some program code}To define any partial recursive/computable function f:N x N→N whatever a simple C-like program of the following type could calculate:int f (int x, int y) {// insert some program code}and so on. Whenever the program is stuck in a loop and doesn't get to the "return" command, it is assumed that the function f isn't returning any value for that particular input (and hence the function f is considered partial in that case).”Again, visit "Gödel, Escher, Bach" by Douglas Hofstadter. He discusses these concepts at length.

[QUOTE="trilobite, post: 5684173, member: 594722"]Thanks for describing your interesting (and ambitious!) project. [By the way, for some reason your post does not appear in "Discuss in the Community", at least not for me. Fortunately, I spotted it in Insights by scrolling down below my article, although usually I can't see any of the comments there, at all!]”Thanks. Actually I thought perhaps I was posting in the wrong place, since it does not directly address your own post. (I'm new here). Perhaps it will be moved where people can see it more easily.

[QUOTE="Jimster41, post: 5681651, member: 517770"]How wrong is it to cartoon it this way: A computable number is an example of a specific symbol that is repeatably accessible through some finite set of (repeatable?) symbolic steps. A non-computable number is one that cannot be specified (because specifying it would show it is computable).w.t.h?”Well it would seem that it certainly depends how you define the words involved really. But, for example, many mathematicians (though not all) would agree that the definitions of reals (in post#8) described in examples (1), (2) and (3) are "well-specified", "well-defined", "well-described" (whatever you wanna call it) but they will say that those real numbers are not computable.Perhaps when you used the word specified, you meant in the sense of being able to specify through a reasonably intuitive process? In that case the answer would depend on what one's opinion is regarding Church's Thesis.For how to define precisely a computable real in decimal representation? That would be pretty easy.First define any partial recursive/computable function f:N→N (where N={0,1,2,3,….}) as whatever a simple C-like program (to use a concrete example) of the following type could calculate:int f (int x) {// insert some program code}To define any partial recursive/computable function f:N x N→N whatever a simple C-like program of the following type could calculate:int f (int x, int y) {// insert some program code}and so on…..Obviously here it is assumed that the variables of type "int" are always greater than or equal to 0 (so negative values can't be taken as input or returned). As for what type of commands to include, you could really get away with absolutely minimal commands/control structures (but with more commands/structures its easier to write bigger and more understandable programs).Having defined a partial computable function f:N→N, you can define a computable real, in decimal representation, as any total computable/recursive function of one variable for which: 0 ≤ f(n) ≤ 9 for all n > 0

Nice first Insight @trilobite!

Problems arise even from simple high school geometry. Here is my example. Keep in mind, this is not my main area of expertise.I have been working on a physics-related AI project, and part of this project was developing an expert system. An expert system's two main modules are known as the knowledge base and the inference engine.I decided to work on the inference engine first. I developed an automated theorem prover in LISP. This ATP uses first order predicate logic (FOPL).After solving a few toy problems as a test, I turned my attention to Euclid's Element of Geometry. Since high school I regarded this as the quintessential book of infallible deductive logic. So I was shocked when I ran into difficulties in the very first proposition of Book 1. In this proposition, Euclid constructs an equilateral triangle in a very simple way. There seems no doubt that his proof is valid. Until, that is, one demands strict use of FOPL, with an acceptable set of axioms. By acceptable axioms I mean "self-evident truths" concerning what we now call Euclidean space.Unfortunately, Euclid makes some steps in his first proof which are not justified, based on his initial set of axioms. It's very clear that what he is doing is mixing deductive logic, with statements based on looking at a diagram, and applying our common-sense notions concerning geometrical objects in 2D space. Yet I would say his proof is valid. I can perceive its truth. This tells me that at least for some cases, proof in the sense of strict formal logic is not required. So I say Euclid was doing the right thing by taking a practical approach. Now my belief in logic as the ultimate verifier of mathematical truth begins to fade a bit.I knew beforehand that some of the earlier geometry theorem proving programs ran into difficulties, but I wanted to see for myself. I assumed that there must be some way of proving all of Euclid using FOPL, provided one had a sufficient set of axioms for each proof. Perhaps the old timers did not have powerful enough computers to handle this task? Perhaps their funding ran out? Or their expertise was demanded for more important problems?My conclusion was not what some logicians would accept. I decided that Euclid was right, and that trying to fit every one of his proofs into the narrow confines of FOPL is perhaps not a useful approach. But I was still looking for the automated proof. Should I use a higher order logic? That raises other issues, such as the lack of any generally accepted automated proof method, comparable to proof by resolution for FOPL.I looked into other techniques, such as found in the old PLANNER system. But PLANNER was never fully developed, in the sense of Hewitt's original proposal. I also tried PROLOG and ran into various problems. So I developed my own variation of backward chaining that works well. It runs in acceptable time using a LISP interpreter running on my PC. I decided to go ahead with FOPL because I saw no practical alternative.Now the problem was the axioms I needed for each proof. I do not like my axioms. They are too complicated.I looked in as many places as I could for someone who had actually published a complete automated proof, using FOPL, of Euclid, beginning with a relatively small number of simple axioms. Does it exist?People talk about axioms sets of Hilbert, Tarski, and Birkhoff. But has anyone actually used one of these axiom sets and proved every one of the propositions of Euclid? Or have they come up with a published set of axioms and proofs of their own? A paper that only presents a small number of proofs does not impress me, because I can do the same thing quite easily. Also, I want a print-out from the program, not the human transcription.I found out recently that in 2003 Meikle and Fleurot looked into Hilbert's geometry, as presented in his Grundlagen, and discovered some flaws. I'm not surprised. Even geniuses make mistakes. That is one reason we try to develop automated theorem provers and proof verifiers.Far from giving up on this issue, it's something that still fascinates me. If nothing else, I have a FOPL theorem prover that works, and indeed without relying on recursion with backtracking. I won't say it's the fastest.The other module is the knowledge base. When I look into how to represent math and physics knowledge, it's a more difficult problem than developing a FOPL prover. Of course it's often stated that we need second order logic. But as I said, I was beginning with what could be done using just FOPL.On a more positive note, my favorite moment in this work was when my theorem prover made the constructions needed for Book I Proposition I. People might ask a human who solves this, "how did you come up with that idea?" Some would say it's human creativity or imagination. Or is it? In fact, it's just logic. Working only from the original axiom set, which of course excludes all the results of later proofs, what method do you have to prove that two lines are equal? The method my theorem prover came up with was that two lines are equal if they are both radii of the same circle. That is exactly what Euclid does.But originally in the problem there is only a line, and no circles. I needed to add a construction axiom. Now the prover can constructs the circles. But it clearly has no "imagination" or "insight" or whatever other humanistic term you may use. It's just searching through many possibilities until it finds one that works.My AI project has turned out to be much harder than I thought it would be. It's not like Chess programming, which I have studied. It was realized decades ago that creating a Chess program which could beat the world champion was only a matter of a faster processor and more memory. This could be seen by extrapolating a graph that showed the Chess rating vs. the hardware factors. I think this is because Chess is actually a simple problem, compared to geometry.In conclusion, I think it's remarkable that for thousands of years humans taught Euclid's geometry as the best example of strict deductive reasoning. His reasoning method seems simple, so that even a young student can understand it. Euclid uses simple logic, and computers are logic machines. Yet creating a programmatic "Euclid in a box" turns out to be a challenging problem.

Thanks for describing your interesting (and ambitious!) project. [By the way, for some reason your post does not appear in “Discuss in the Community”, at least not for me. Fortunately, I spotted it in Insights by scrolling down below my article, although usually I can’t see any of the comments there, at all!]

[QUOTE="SSequence, post: 5680025, member: 601088"]In other words there is no marker as to when the values f(0), f(1), f(2) etc. end. What I mean here is that suppose we had f(0)=10, f(1)=6, f(2)=99, ….. then the first few decimal places will be like:0.10699…..Is the answer of computability of this real number independent of the function F? If it is, then is this real number always computable or always uncomputable?”All of that above is a lot to chew on and very interesting…How wrong is it to cartoon it this way: A computable number is an example of a specific symbol that is repeatably accessible through some finite set of (repeatable?) symbolic steps. A non-computable number is one that cannot be specified (because specifying it would show it is computable). w.t.h?A related question (at least in my head) Is the set of algebra's (ways of specifying something) considered closed somehow or are there considered to be potentially infinitely many algebras?

[QUOTE="trilobite, post: 5679990, member: 594722"]I guess I meant "not easily understandable." But this is not an area I know a whole lot about. I would be interested to see an easily understandable non-computable number, if you have an example.”Well there are many examples that one can easily give of course. I should perhaps describe a few that are more instructive.The main elementary idea is that the set of all programs can be seen as a decidable subset of strings generated from a limited set of symbols say S (for example keys on keyboard or any other well-chosen finite set). The main condition is that S must have a finite number of symbols in it. For example, a typical example of S (for a typical limited programming language) could be:S={A–Z, a–z, 0-9, +, -, *, =}One then, for example, considers a kind of 1-1 correspondence*** function between the set of strings (formed using symbols of S) that form valid programs and the set of natural numbers N={0,1,2,3,4,5,….}. The number that is assigned to each program is called its index.Now think of a limited programming language that just takes one or more integers as inputs and gives a single integer as output.Now here are several examples of uncomputable real numbers:(1) 0. h(1) h(2) h(3) h(4) h(5) …..That is the real number whose digit at n-th decimal place is equal to h(n). Here we have h:N→N as:h(x) = H(x,x)where H:N x N→N is defined as:H(x,y) = Given a program whose index is x and to which input given is the single integer y return 1 if the program eventually halts and 0 if it runs forever*** Actually 1-1 correspondence is of course not strictly necessary but this would be besides the point.

[QUOTE="SSequence, post: 5679761, member: 601088"]However, it is not quite clear what you meant with the last sentence though. It is quite easy to demonstrate specific examples of uncomputable real numbers. Of course just given a description it might not be easy at all to show that the given real number is uncomputable (and this is perhaps what you meant).”I guess I meant "not easily understandable." But this is not an area I know a whole lot about. I would be interested to see an easily understandable non-computable number, if you have an example.[QUOTE="SSequence, post: 5679761, member: 601088"]The category of algebraic/transcendental numbers seems to be an interesting one. What is the simplest (and also precise) definition of algebraic numbers?”The standard definition is that a number is algebraic if it is the root of a polynomial with rational coefficients. However, this wording was not very useful for the purposes of my article, so I chose a slightly different description.

[QUOTE="trilobite, post: 5676985, member: 594722"]I believe that a computable number is one that can be computed to any desired number of decimal places by a finite number of operations. Usually, this is expressed in terms of a computer program that accomplishes the required calculation. The interesting thing is that the computable numbers are countable, essentially (I think) because there are only a countable number of computer programs. Since the real numbers are uncountable, this means that not only do non-computable numbers exist, but that "most" numbers are non-computable! However, as with transcendentals, actually exhibiting a non-computable number is not easy.”Assuming LEM and assuming that decimal representation for real numbers is used, whatever you have written should be correct. However, it is not quite clear what you meant with the last sentence though. It is quite easy to demonstrate specific examples of uncomputable real numbers. Of course just given a description it might not be easy at all to show that the given real number is uncomputable (and this is perhaps what you meant).—–The category of algebraic/transcendental numbers seems to be an interesting one. What is the simplest (and also precise) definition of algebraic numbers?

[QUOTE="Jimster41, post: 5676931, member: 517770"]care to explain a bit what the def of a non-computable number is?”I believe that a computable number is one that can be computed to any desired number of decimal places by a finite number of operations. Usually, this is expressed in terms of a computer program that accomplishes the required calculation. The interesting thing is that the computable numbers are countable, essentially (I think) because there are only a countable number of computer programs. Since the real numbers are uncountable, this means that not only do non-computable numbers exist, but that "most" numbers are non-computable! However, as with transcendentals, actually exhibiting a non-computable number is not easy.I hope that is an accurate and understandable answer. Robert, can you confirm (or correct) this explanation?

care to explain a bit what the def of a non-computable number is?

[QUOTE="Robert Smart, post: 5673070, member: 604644"]We should perhaps mention the boundary between computable numbers (includes e and pi) and non-computable numbers. Since there are only a countable number of computable numbers there are much more non-computable numbers. The Mathematics you get into with this is near the boundary with Philosophy. To me the non-computable numbers don't exist [disagree: show me one]. However they give us a model of the real line that is logically consistent and helps us to think clearly about it.”Yes, that's a fascinating topic! Thanks for mentioning it.

We should perhaps mention the boundary between computable numbers (includes e and pi) and non-computable numbers. Since there are only a countable number of computable numbers there are much more non-computable numbers. The Mathematics you get into with this is near the boundary with Philosophy. To me the non-computable numbers don't exist [disagree: show me one]. However they give us a model of the real line that is logically consistent and helps us to think clearly about it.