Blockworld and its Foundational Implications: Delayed Choice and No Counterfactual Definiteness

In parts 1 and 2 of this 5-part Insights series, I explained the blockworld (BW) implication of special relativity. In part 3, I introduced general relativity (GR) and brought BW to bear on general relativistic cosmology to ‘explain’ what puzzles so many people about big bang cosmology, i.e., the origin of the universe. The bottom line there was that we just have to accept that reality is best understood adynamically in spatiotemporally holistic fashion, giving up[1] “the assumption that the [Newtonian Schema] way we humans solve physics problems must be the way the universe actually operates.” In part 4, I used BW’s spatiotemporally global view per the Lagrangian Schema to resolve paradoxes associated with closed timelike curves created by the spatiotemporally local, dynamic view of the Newtonian Schema. Here in the fifth and final part of this series, we will see that delayed choice and no counterfactual definiteness, two aspects of quantum nonlocality resulting from quantum entanglement, strongly suggest a spatiotemporally global view of reality. Delayed choice and no counterfactual definiteness will be explained by two examples below. Quantum nonlocality is used to mean slightly different things in the foundations community, but here I use it as defined in Wikipedia[2], “the phenomenon by which measurements made at a microscopic level contradict a collection of notions known as local realism that are regarded as intuitively true in classical mechanics.” Local realism will be explained below in the context of the second example. Let us begin with a simple delayed choice experiment done by Zeilinger.

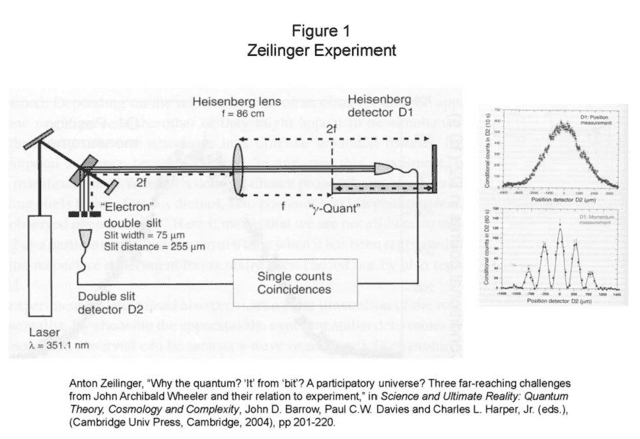

The Zeilinger experiment is shown in Figure 1 (reference therein). Appreciating the mystery of this experimental outcome does not require any familiarity with the formalism of quantum mechanics (QM). A laser beamed into a crystal produces a pair of entangled photons. “Entangled” simply means that a measurement outcome on one of the photons (at detector D1 here) is inextricably related to the outcome of a measurement on the other photon (at detector D2 here), as we will soon see. One of the photons proceeds down through the double slit to detector D2 while its entangled partner travels to the right through a lens to detector D1. The experimentalist can decide whether to locate D1 at a distance of one focal length (f, the “momentum measurement”) or two focal lengths (2f, the “position measurement”) behind the lens (Figure 1). The photons detected at D2 then produce one of two corresponding patterns, i.e., the upper right pattern (labeled “D1: Position measurement”) or the lower right pattern (labeled “D1: Momentum measurement”).

That the photon at D2 acts like a particle (upper outcome) or a wave (lower outcome) depending on how its entangled partner is measured at D1 is interesting, but that’s not the conundrum. Notice that the distance from the photon source to D2 is much shorter than the distance from the photon source to D1. The two photons are emitted at the same time and travel at the same speed, so the first photon to reach a detector is the one at D2. But the photon’s behavior at D2 is supposedly determined by the experimentalist’s choice of where to locate D1[3]. How does the photon at D2 know where D1 will be placed? Doesn’t the experimentalist have a real (delayed) choice that can be made after the photon has been detected at D2 and before the other photon has been detected at D1? If causal influences must proceed from past to future and the outcomes at D1 and D2 are causally related, then we have to conclude that the photon at D2 determines the experimentalist’s choice of where to put D1, hence our conundrum. QM doesn’t care about “choice”; it simply predicts the correlation in the outcomes without regard for which outcome occurs first.

The reason we have this confusion over the interpretation of QM is that we are seeking to understand the quantum state of affairs in spacetime while the Hamiltonian (dynamical) formalism of QM is one of time-evolved states in configuration space. This fact concerned Einstein and he wrote[4], “I cannot seriously believe in it [QM] because the theory cannot be reconciled with the idea that physics should represent a reality in time and space, free from spooky actions at a distance.” In other words, QM without further interpretation simply provides the probabilities of various experimental outcomes in various experimental configurations without regard for a subsequent explanation of the phenomena in spacetime. If we think of QM explanation along the lines of

Why = {What, Where, How}

we have the dynamical explanatory system (dES) given by:

dES = {initial state, configuration space, Schrödinger’s equation}

and we have the adynamical explanatory system (aES) given by:

aES = {fundamental ontological element, BW, adynamical global constraint}.

We can get an adynamical explanatory system for QM using the Lagrangian (adynamical) formalism for QM called the Feynman path integral, since it resides in spacetime. Here is how Feynman describes it[5]:

In the customary view, things are discussed as a function of time in very great detail. For example, you have the field at this moment, a different equation gives you the field at a later moment and so on; a method, which I shall call the Hamiltonian method. We have, instead [the action] a thing that describes the character of the path throughout all of space and time. … From the overall space-time point of view of the least action principle, the field disappears as nothing but bookkeeping variables insisted on by the Hamiltonian method.
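For readers who want to see the object behind Feynman’s description, the path integral for a single particle is usually written as follows. This is the standard textbook form, offered only as a sketch; the symbols ($K$, $S$, $L$, $x(t)$) are conventional notation and do not appear in the references cited in this article.

$$
K(x_b, t_b; x_a, t_a) = \int \mathcal{D}[x(t)]\, e^{\,i S[x(t)]/\hbar}, \qquad S[x(t)] = \int_{t_a}^{t_b} L\big(x(t), \dot{x}(t), t\big)\, dt
$$

The action $S$ is evaluated over an entire path from $(x_a, t_a)$ to $(x_b, t_b)$ rather than instant by instant, which is the sense in which Feynman speaks of “the character of the path throughout all of space and time.”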

The Feynman path integral is an adynamical global constraint in the BW, so all we need to complete our adynamical explanatory system for QM is a fundamental ontological element. Exactly what ontological element is distributed in the spacetime region of the experiment per the probability amplitude of the Feynman path integral is underdetermined by the formalism, so it can be chosen per the desiderata of the particular adynamical QM interpretation being pursued. So, in summary, why do we see these mysterious outcomes in Zeilinger’s experiment? The spatiotemporal distribution of fundamental ontological elements, which account for the outcomes in the spacetime configuration of Zeilinger’s experiment, follows from the adynamical global constraint. The outcomes are only mysterious when you try to formulate a dynamical counterpart to this adynamical explanation. Again, as with the GR examples, we have to accept that the adynamical explanation in the BW is fundamental and this is just one of those cases that doesn’t possess a (reasonable) corresponding dynamical explanation in the mechanical universe. No counterfactual definiteness is another such violation of our dynamical experience based in “local realism.” Next we’ll look at a simple example of no counterfactual definiteness due to Hardy as explained by Mermin.

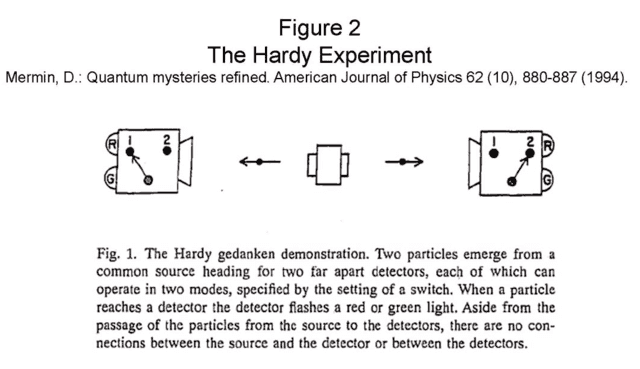

The Hardy experiment is shown and explained in Figure 2 (reference therein). As with the Zeilinger experiment, you don’t have to be familiar with the formalism of QM to appreciate this mysterious experimental outcome. In experimental trials (called “runs”) where the detector settings are not the same, i.e., left detector set at 1 and right detector set at 2 (“12” for short) or the converse “21,” you never see an outcome of green at both detectors (“GG” for short). So, 12GG and 21GG never happen. The other two facts are that you occasionally see 22GG and you never see 11RR. OK, so what’s so mysterious about that? Well, let’s try to explain the situation for the particles in a 22GG run. The particles don’t ‘know’ how they will be measured in any given run; in fact, the detector settings can be chosen an instant before the particles arrive at their respective detectors. And, assuming the measurements are spacelike separated, no information about the setting and outcome at one detector can reach the other detector before the particle is measured there, i.e., the measurements are said to be “local.” Thus, the particles don’t know how they will be measured when they are emitted and they can’t transmit that information to each other during the run. So, it seems the possible outcomes with respect to each possible detector setting must be coordinated to prevent the proscribed outcomes 12GG, 21GG, and 11RR. Mermin calls the coordination of outcomes “instruction sets,” and the fact that the particles have definite properties corresponding to possible measurements whether they are carried out or not is called “realism” or counterfactual definiteness. The combination of these assumptions is often called “local realism.” Both assumptions seem reasonable: locality because the temporal order of spacelike separated events is frame dependent according to special relativity, which would seem to rule out superluminal information transfer, and realism because the particles don’t ‘know’ how they will be measured until the last moment and they have to avoid proscribed outcome combinations.

So, let’s continue with the analysis. Since the result is 22GG we know the particles were both 1X2G at emission (X = R or G), where, e.g., 1R2G means the particle would give red at setting 1 and green at setting 2. We know particle 1 wasn’t 1G2G, because had the detectors been set to 12 we would’ve gotten a 12GG outcome and that never happens. Likewise, particle 2 couldn’t be 1G2G or a setting of 21 would’ve produced a 21GG outcome, which never happens. Thus, both particles must’ve been 1R2G in order to give the 22GG outcome and rule out the possibility of a 12GG or a 21GG outcome. But both particles being 1R2G can’t be right either, because then a setting of 11 would’ve produced a 11RR outcome, which never happens. So, what are the states of the particles when they are emitted in a run giving 22GG? We’ve exhausted all the logical possibilities and none of them work!
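The case-by-case argument above can also be checked by brute force. The following is a minimal sketch, not anything taken from Hardy or Mermin: it assumes each particle carries an instruction set written as a pair (color at setting 1, color at setting 2), and the names `outcome`, `allowed`, `survivors`, and `gives_22GG` are purely illustrative.

```python
from itertools import product

COLORS = ("R", "G")


def outcome(instructions, setting):
    """Color a particle shows at detector setting 1 or 2.

    `instructions` is a pair such as ("R", "G"), read as 1R2G.
    """
    return instructions[setting - 1]


def allowed(left, right):
    """True if this pair of instruction sets can never give 12GG, 21GG, or 11RR."""
    if outcome(left, 1) == "G" and outcome(right, 2) == "G":  # would allow 12GG
        return False
    if outcome(left, 2) == "G" and outcome(right, 1) == "G":  # would allow 21GG
        return False
    if outcome(left, 1) == "R" and outcome(right, 1) == "R":  # would allow 11RR
        return False
    return True


# All four instruction sets per particle: 1R2R, 1R2G, 1G2R, 1G2G.
instruction_sets = list(product(COLORS, repeat=2))

# All sixteen (left particle, right particle) combinations.
pairs = list(product(instruction_sets, repeat=2))

# Pairs that respect the three "never happens" facts.
survivors = [(l, r) for l, r in pairs if allowed(l, r)]

# Of those, the pairs that would give green-green when both settings are 2.
gives_22GG = [
    (l, r) for l, r in survivors
    if outcome(l, 2) == "G" and outcome(r, 2) == "G"
]

print(len(survivors))  # 5
print(gives_22GG)      # []
```

Five of the sixteen pairs avoid the proscribed outcomes, but none of them can produce 22GG, which is the same dead end reached in the text.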

There are only conjectural answers to that question in the foundations community (thus, the conundrum), but most seem to agree that there is no counterfactual definiteness. If there is no counterfactual definiteness, how does one account for the 22GG outcome of the Hardy experiment? Again, the mystery arises because we’re looking for a Newtonian Schema (dynamical) explanation, i.e., a spatiotemporally local, time-evolved story. In such a story, the state of each of the particles is time-evolved instant by instant from emission to detection, so we’re simply asking for an instant-by-instant account of the particles in this experiment, as I tried unsuccessfully to provide above. But, if reality is fundamentally a BW whose observed patterns are governed by an adynamical global constraint[6], then the observed patterns in Hardy’s experiment result from some fundamental ontological element distributed in spacetime per the Feynman path integral of QM. We can’t observe the particles between emission and detection: QM tells us, and we find experimentally, that additional detectors introduced between the source and the detectors already in place will change the final outcomes. Thus, we’re forbidden from acquiring such intermediate information without changing the experimental configuration, i.e., the BW pattern. The adynamical global constraint (Feynman path integral) therefore doesn’t have to say anything about counterfactual definiteness; it only has to account for the actual spatiotemporal experimental configuration and outcomes. Therefore, in the BW view, we’re not concerned with counterfactual outcomes, i.e., BW patterns that aren’t ‘there’.
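For readers who do want a glimpse of the formalism, here is a hedged sketch of how QM can assign probabilities to the actual configurations (12, 21, 11, 22) with no instruction sets. Everything in it is an illustrative assumption rather than the parametrization used by Hardy or Mermin: a two-particle state with real amplitudes, a setting-2 basis rotated by a hypothetical angle `theta` relative to the setting-1 basis, and the function name `hardy_probabilities`.

```python
import math


def hardy_probabilities(theta):
    """Probabilities of the four outcomes discussed in the text.

    Assumption: setting 2 measures in a basis rotated by `theta`
    relative to the setting-1 basis (G1, R1).
    """
    c, s = math.cos(theta), math.sin(theta)

    # Single-particle outcome vectors expressed in the (G1, R1) basis.
    basis = {
        "1G": (1.0, 0.0),
        "1R": (0.0, 1.0),
        "2G": (c, s),
        "2R": (-s, c),
    }

    # Unnormalized two-particle amplitudes psi[i][j] with 0 = G1, 1 = R1:
    # psi = -s |G1 G1> + c (|G1 R1> + |R1 G1>), with no |R1 R1> term.
    psi = [[-s, c], [c, 0.0]]
    norm_sq = sum(a * a for row in psi for a in row)

    def prob(left, right):
        # Probability = (overlap of psi with the product outcome)^2 / norm.
        u, v = basis[left], basis[right]
        amp = sum(u[i] * v[j] * psi[i][j] for i in range(2) for j in range(2))
        return amp * amp / norm_sq

    return {
        "12GG": prob("1G", "2G"),
        "21GG": prob("2G", "1G"),
        "11RR": prob("1R", "1R"),
        "22GG": prob("2G", "2G"),
    }


# Angle that maximizes the 22GG probability for this family of states.
theta_max = math.acos(math.sqrt((math.sqrt(5) - 1) / 2))
for label, p in hardy_probabilities(theta_max).items():
    print(label, round(p, 4))
```

With this choice of angle, the three proscribed outcomes have probability zero while 22GG occurs in roughly 9% of the runs with both detectors set to 2. The calculation only ever refers to the configuration actually measured, in keeping with the point that nothing needs to be said about counterfactual outcomes.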

Before concluding the series, I should emphasize that an adynamical global constraint in a BW is not superdeterminism. In superdeterminism, the experimentalist’s decisions are determined by some ‘force’ or ‘influence’, presumably per the laws of physics. That is, the reason for the measurement settings is strictly determined by some cause propagated from past to future. Superdeterminism would be a very Newtonian Schema account of our proposed Lagrangian Schema Universe.

So, to conclude this series of Insights on the BW and its foundational implications, let me emphasize the take-home message. The puzzle, paradoxes, and conundrums introduced in parts 3, 4 and 5 (respectively) were all found to be the result of our dynamical bias, i.e., our desire to explain phenomena via a spatiotemporally local, time-evolved story. In every case, we found the mystery disappeared when the corresponding theory was viewed as providing an adynamical global constraint in the BW. In GR, the adynamical global constraint is Einstein’s equations (or the Lagrangian counterpart) and in QM it’s the Feynman path integral. Therefore, physics may be telling us that reality is fundamentally a BW governed by an adynamical global constraint, rather than a time-evolved story governed by dynamical laws per our anthropocentric bias. Price & Wharton may have said it best when they wrote[7]:

In putting future and past on an equal footing, this kind of approach is different in spirit from (and quite possibly formally incompatible with) a more familiar style of physics: one in which the past continually generates the future, like a computer running through the steps in an algorithm. However, our usual preference for the computer-like model may simply reflect an anthropocentric bias. It is a good model for creatures like us, who acquire knowledge sequentially, past to future, and hence find it useful to update their predictions in the same way. But there is no guarantee that the principles on which the universe is constructed are of the sort that happen to be useful to creatures in our particular situation.

Physics has certainly overcome such biases before – the Earth isn’t the center of the universe, our sun is just one of many, there is no preferred frame of reference. Now, perhaps there’s one further anthropocentric attitude that needs to go: the idea that the universe is as “in the dark” about the future as we are ourselves.

While the BW with an adynamical global constraint easily dispels the puzzle, paradoxes and conundrums of modern physics, it is not mandated by the phenomena. However, any foundational explanation of these phenomena will almost certainly revolutionize our “mechanistic worldview,” so I close with this quote by DeWitt[8]:

In the past, fundamental new discoveries have led to changes – including theoretical, technological, and conceptual changes – that could not even be imagined when the discoveries were first made. The discovery that we live in a universe that, deep down, allows for [quantum nonlocality] strikes me as just such a fundamental, important new discovery. … If I am right about this, then we are living in a period that is in many ways like that of the early 1600s. At that time, new discoveries, such as those involving Galileo and the telescope, eventually led to an entirely new way of thinking about the sort of universe we live in. Today, at the very least, the discovery of [quantum nonlocality] forces us to give up the Newtonian view that the universe is entirely a mechanistic universe. And I suspect this is only the tip of the iceberg, and that this discovery, like those in the 1600s, will lead to a quite different view of the sort of universe in which we live.

1. Wharton, K.: The Universe is not a Computer. In Questioning the Foundations of Physics, A. Aguirre, B. Foster and Z. Merali (Eds), pp. 177-190, Springer (2015) http://arxiv.org/abs/1211.7081. This essay won third prize in the 2012 FQXi essay contest.

2. https://en.wikipedia.org/wiki/Quantum_nonlocality

3. Another example of this type of experiment is Kim, Y., Yu, R., Kulik, S.P., Shih, Y.H., and Scully, M.: A Delayed Choice Quantum Eraser. Physical Review Letters 84, pp. 1-5 (2000) https://arxiv.org/pdf/quant-ph/9903047.pdf

4. Born, M., Einstein, A., & Born, I.: The Born Einstein Letters: correspondence between Albert Einstein and Max and Hedwig Born from 1916 to 1955 with commentaries by Max Born. Translated by Irene Born. Macmillan Press (1971), p. 158.

5. R.P. Feynman Nobel Lecture: In: Brown, L.M. (ed.) Feynman’s Thesis: A New Approach to Quantum Theory. World Scientific Press (2005).

6. I should note that both Einstein’s equations (EEs) and their Lagrangian counterpart are formulated in 4D spacetime, so we can easily think of both as adynamical global constraints. In contrast, the Newtonian Schema (NS) approach to N-particle systems in QM (Schrödinger’s equation) is formulated in configuration space, which is 3N-dimensional and therefore does not map unambiguously into 4D spacetime. The Lagrangian Schema (LS) version of QM (Feynman’s path integral) is formulated in 4D spacetime, so the LS approach is preferred for the BW view of QM.

7. Price, H., & Wharton, K.: Dispelling the Quantum Spooks – a Clue that Einstein Missed? (2013) http://arxiv.org/abs/1307.7744

8. DeWitt, R.: Worldviews: An Introduction to the History and Philosophy of Science. Blackwell Publishing (2004), p. 304.


PhD in general relativity (1987), researching foundations of physics since 1994. Coauthor of “Beyond the Dynamical Universe” (Oxford UP, 2018) and “Einstein’s Entanglement” (Oxford UP, 2024).